What is “Zen3” (Ryzen 5000)?

AMD’s Zen3 (“Vermeer”) is the 3rd generation of the ZEN core, sold as the 5000-series of CPUs, and introduces further refinements of the ZEN(2) core and layout. An APU version (with integrated graphics) is also available. The CPUs/APUs remain socket AM4 compatible on desktop, thus allowing an in-place upgrade (subject to a BIOS update, as always), but 500-series chipsets are recommended to enable all features (e.g. PCIe4, etc.). [Note this is the last CPU generation that will fit the AM4 socket; future CPUs supporting DDR5 need a new socket]

Unlike with ZEN2, the main changes are to the core/cache layout, but they could still prove significant considering the cache/memory latency issues that have affected ZEN designs:

- (AMD) Claims +19% IPC (instructions per clock) overall improvement vs. ZEN2

- Higher base and turbo clocks +7% [for 5800X vs. 3700X]

- Still built around “chiplets” CCX (“core complexes”) but now of 8C/16T and larger L3 cache (still 7nm)

- Same central I/O hub with memory controller(s) and PCIe 4.0 bridges connected through IF (“Infinity Fabric”) (12nm)

- Still up to 2 chiplets on desktop platform thus up to 2x 8C (16C/32T 5950X)

- L3 is still 32MB per chiplet but now unified (not 2x 16MB); still up to 64MB on 5950X

- 3D V-Cache L3 is 96MB unified, thus 3x (!) larger than original Zen3

- 20 PCIe 4.0 lanes

- 2x DDR4 memory controllers up to 3200MT/s official (4266MT/s max) [future AM5 socket for DDR5 support]
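As a rough illustration of how the first two claims in the list compound, the sketch below multiplies the quoted IPC and clock gains. It assumes a naive first-order model (throughput proportional to IPC times clock) that ignores memory, thermal and power limits, so the result is a best-case estimate and not a measured figure. The function name `combined_uplift` is purely illustrative.

```python
# Naive first-order model: throughput ~ IPC x clock frequency.
# Both inputs are the figures quoted in the list above; the model itself
# is an assumption and ignores memory, thermal and power limits.
IPC_GAIN = 0.19    # AMD's claimed IPC uplift vs. Zen2
CLOCK_GAIN = 0.07  # base/turbo clock uplift, 5800X vs. 3700X


def combined_uplift(ipc_gain: float, clock_gain: float) -> float:
    """Return the compounded uplift as a fraction (0.27 means +27%)."""
    return (1.0 + ipc_gain) * (1.0 + clock_gain) - 1.0


uplift = combined_uplift(IPC_GAIN, CLOCK_GAIN)
print(f"Estimated best-case uplift vs. Zen2: {uplift:+.1%}")
```

Real workloads rarely scale this cleanly, which is why the benchmark results matter more than the headline figures.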

What is the new Zen3-3D V-Cache (Ryzen 5000-3D)?

It is a version of the Zen3+ chiplet with vertically stacked L3 cache (hence the “3D” moniker) that is 3x larger (thus 96MB). Latency is expected to be slightly higher (+4 clocks) and bandwidth slightly lower (~10% less).

However, the sheer size of the L3 cache allows the data sets of many (desktop) workloads to be served directly from the L3 cache, thus avoiding main memory accesses (with their higher latencies and lower bandwidth). Inter-core/thread transfers of relatively large data sets (12MB/core) can also be served directly by the L3 cache.

Until recently, top-end 8-core Intel CPUs (e.g. 11900K, 10700K, etc.) had only 16MB L3 cache (1/2x normal Ryzen, 1/6x 3D Ryzen) – with only recent Intel “AlderLake” (ADL) 16-core (8C+8c) having a comparable 30MB L3 cache.
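The per-core and relative cache figures quoted above follow from simple division. The sketch below reproduces them from the L3 sizes given in the text, assuming all three parts are treated as 8-core designs. The dictionary labels and helper names are illustrative only.

```python
from fractions import Fraction

CORES = 8  # all three parts compared here are 8-core designs

# L3 sizes in MB, as quoted in the text above
l3_mb = {
    "Intel 8-core (e.g. 11900K)": 16,
    "Ryzen Zen3 (standard)": 32,
    "Ryzen Zen3 3D V-Cache": 96,
}


def l3_per_core(total_mb: int, cores: int = CORES) -> float:
    """Average L3 share per core in MB (the L3 is shared, not partitioned)."""
    return total_mb / cores


def ratio(a_mb: int, b_mb: int) -> Fraction:
    """Exact size ratio a/b, reduced to lowest terms."""
    return Fraction(a_mb, b_mb)


for name, size in l3_mb.items():
    print(f"{name}: {size}MB total, {l3_per_core(size):g}MB per core")

intel = l3_mb["Intel 8-core (e.g. 11900K)"]
print("Intel vs. standard Ryzen:", ratio(intel, l3_mb["Ryzen Zen3 (standard)"]))
print("Intel vs. 3D Ryzen:", ratio(intel, l3_mb["Ryzen Zen3 3D V-Cache"]))
```

Note that the per-core value is only an average: because the L3 is unified, a single thread can in principle use far more than its share.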

To upgrade from standard Zen3 or not?

Apart from the new 3D V-Cache L3, there are no other major changes:

- Minor stepping update (S2 vs. S0) with no major fixes

- Requires AGESA V2 1.2.0.6+ for support – update BIOS before installing

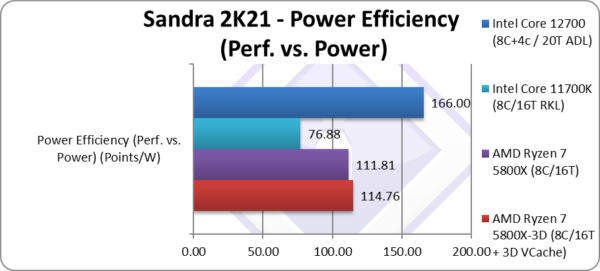

- Base and Turbo clocks are lower than normal Zen3 (5800X), thus raw compute power is lower
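To put a number on the clock deficit, the sketch below computes the relative change using the base/turbo clocks listed in the hardware specification table of this review. The helper `relative_change` is illustrative, and the result only bounds the raw compute difference under the assumption that performance scales linearly with clock speed.

```python
# Clocks in GHz, taken from the specification table in this review
clocks = {
    "5800X": {"base": 3.8, "turbo": 4.7},
    "5800X-3D": {"base": 3.4, "turbo": 4.5},
}


def relative_change(new: float, old: float) -> float:
    """Relative change of new vs. old as a fraction (-0.05 means 5% lower)."""
    return (new - old) / old


base_delta = relative_change(clocks["5800X-3D"]["base"], clocks["5800X"]["base"])
turbo_delta = relative_change(clocks["5800X-3D"]["turbo"], clocks["5800X"]["turbo"])

print(f"Base clock:  {base_delta:+.1%}")
print(f"Turbo clock: {turbo_delta:+.1%}")
```

Under that linear-scaling assumption, the turbo deficit is the more relevant of the two for short, compute-bound runs.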

It all depends on the data set(s) of the workload(s) you are running:

- Data sets that either entirely fit or can be significantly served in the 96MB L3 cache – will see significant uplift

- Inter-core/thread data transfers that can entirely fit in the 3D L3 cache – will see significant uplift

- Streaming workloads, or workloads with very large data sets, may show no uplift and may even be slower due to lower base/turbo clocks

- Compute heavy algorithms with small data sets will be slower due to lower base/turbo clocks
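The rules of thumb above can be sketched as a simple classifier keyed on the working-set size. This is a minimal heuristic, assuming hard thresholds at the two L3 sizes (32MB and 96MB); real behaviour is gradual and depends on access patterns, L2 hits, partial L3 hits and other processes sharing the cache. The `Effect` names and the `expected_effect` function are hypothetical.

```python
from enum import Enum

L3_STANDARD_MB = 32  # standard Zen3 (5800X)
L3_3D_MB = 96        # Zen3 3D V-Cache (5800X-3D)


class Effect(Enum):
    LIKELY_SLOWER = "fits the standard L3 already; lower clocks dominate"
    LIKELY_FASTER = "fits only the 3D L3; fewer main memory accesses"
    LITTLE_OR_NO_GAIN = "exceeds both L3 sizes; gain depends on partial hits"


def expected_effect(working_set_mb: float) -> Effect:
    """Rough expectation for the 3D part vs. standard Zen3 (heuristic only)."""
    if working_set_mb <= L3_STANDARD_MB:
        return Effect.LIKELY_SLOWER
    if working_set_mb <= L3_3D_MB:
        return Effect.LIKELY_FASTER
    return Effect.LITTLE_OR_NO_GAIN


for size_mb in (8, 64, 4096):
    effect = expected_effect(size_mb)
    print(f"{size_mb}MB working set -> {effect.name}: {effect.value}")
```

Data sets somewhat larger than 96MB may still benefit if a significant part of their accesses hit the L3, which is why the last category is deliberately non-committal.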

Review

In this article we test CPU core performance; please see our other articles on:

- AMD Ryzen 7 5800X (Zen3) Review & Benchmarks – CPU 8-core/16-thread Performance

- AMD Ryzen 9 5950X (Zen3) Review & Benchmarks – CPU 16-core/32-thread Performance

- AMD Ryzen 5 5600X (Zen3) Review & Benchmarks – CPU 6-core/12-thread Performance

Hardware Specifications

We are comparing the top-range Ryzen 7 5000-series (Zen3 8-core) with previous generation Ryzen 7 3000-series (Zen2 8-core) and competing architectures with a view to upgrading to a top-range, high performance design.

| CPU Specifications | AMD Ryzen 7 5800X-3D 8C/16T (Vermeer-3D) |

AMD Ryzen 7 5800X 8C/16T (Vermeer) | Intel Core i7 11700K 8C/16T (RocketLake) | Intel Core i7 12700 8C+4c / 20T (AlderLake) | Comments | |

| Cores (CU) / Threads (SP) | 8C / 16T | 8C / 16T | 8C / 16T | 8C + 4c / 20T | Core counts remain the same. | |

| Topology | 1 chiplet, 1 CCX, each 8 core (8C) + I/O hub | 1 chiplet, 1 CCX, each 8 core (8C) + I/O hub | Monolithic die | Monolithic die | Same topology | |

| Speed (Min / Max / Turbo) (GHz) |

3.4 / 4.5GHz | 3.8 / 4.7GHz | 3.6 / 5GHz | 2.1+1.6 / 4.8+3.6 | Both base and turbo are down | |

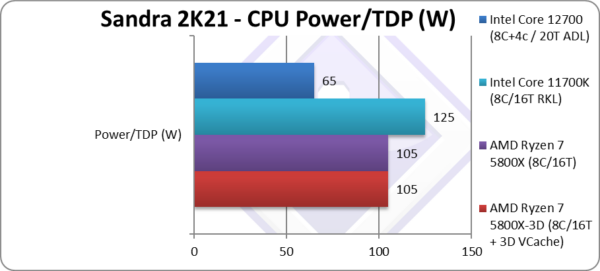

| Power (TDP / Turbo) (W) |

105 / 135W (PL2) | 105 / 135W (PL2) | 125 / 175W (PL2) | 65 / 180W (PL2) | Same TDP | |

| L1D / L1I Caches (kB) |

8x 32kB 8-way / 8x 32kB 8-way | 8x 32kB 8-way / 8x 32kB 8-way | 8x 32kB 8-way / 8x 32kB 8-way | 8x 32k+4x 48kB / 8x 48kB + 4x 32kB | No changes to L1 | |

| L2 Caches (MB) |

8x 512kB (4MB) 8-way inclusive | 8x 512kB (4MB) 8-way inclusive | 8x 512kB (4MB) | 8x 1.25MB + 2MB | No changes to L2 | |

| L3 Caches (MB) |

96MB 16-way exclusive [+3x] |

32MB 16-way exclusive | 16MB 16-way | 25MB 11-way | 3x larger L3 | |

| Mitigations for Vulnerabilities | BTI/”Spectre”, SSB/”Spectre v4″ hardware | BTI/”Spectre”, SSB/”Spectre v4″ hardware | BTI/”Spectre”, SSB/”Spectre v4″ software/firmware | BTI/”Spectre”, SSB/”Spectre v4″ software/firmware | No new fixes required… yet! | |

| Microcode (MU) |

A20F12-05 | A20F10-16 | 0A0671-50 | 090672-15 | The latest microcodes have been loaded. | |

| SIMD Units | 256-bit AVX/FMA3/AVX2 | 256-bit AVX/FMA3/AVX2 | 512-bit AVX512 | 256-bit AVX/FMA3/AVX2 | Same SIMD widths | |

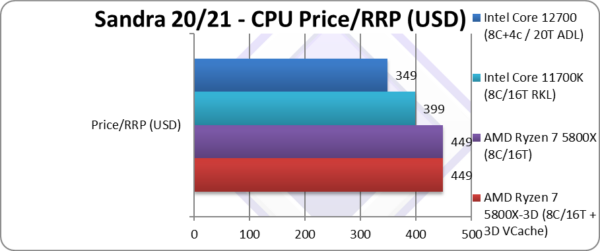

| Price/RRP (USD) |

$449 |

$449 | $399 | $349 | Same price as normal version | |

Disclaimer

This is an independent review (critical appraisal) that has not been endorsed nor sponsored by any entity (e.g. AMD, etc.). All trademarks acknowledged and used for identification only under fair use.

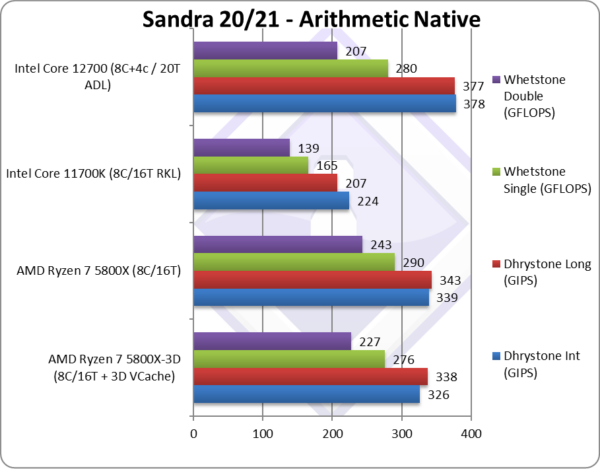

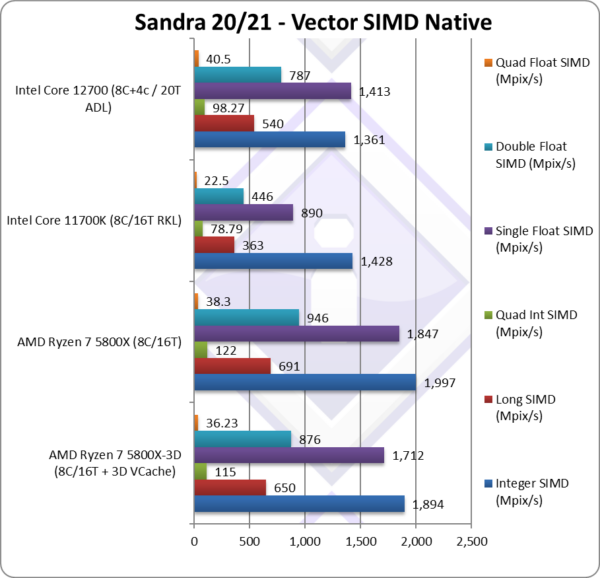

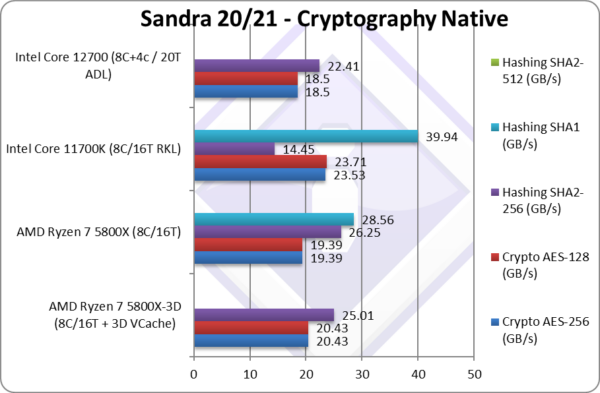

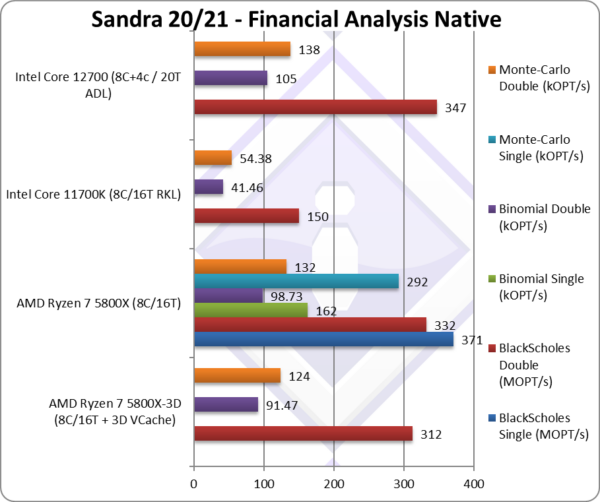

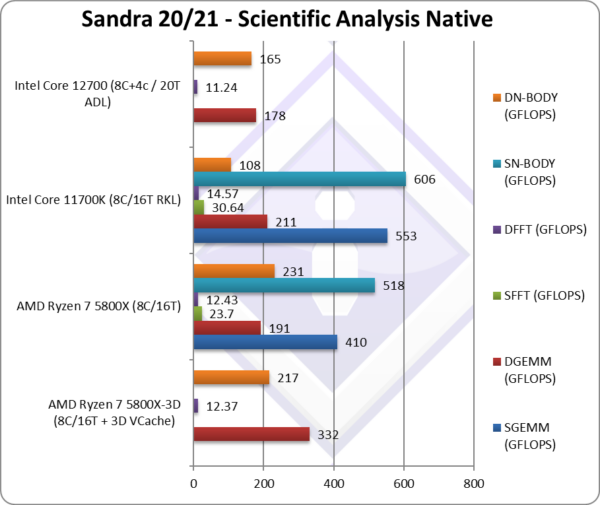

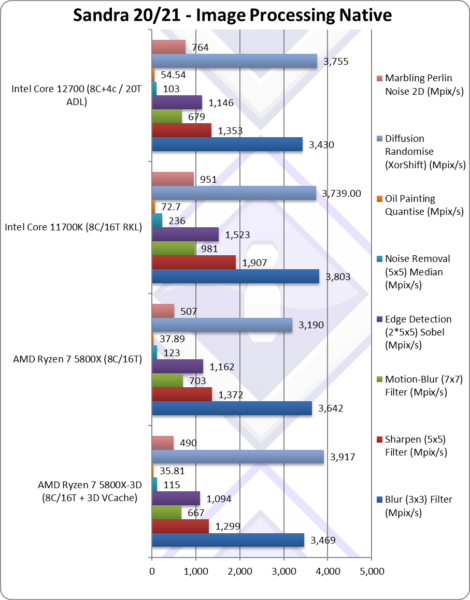

Native Performance

We are testing native arithmetic, SIMD and cryptography performance using the highest performing instruction sets (AVX2, FMA3, AVX, etc.). Zen3 supports all modern instruction sets including AVX2 and FMA3, plus extras such as SHA hardware acceleration (HWA), but not AVX-512.

Results Interpretation: Higher values (GOPS, MB/s, etc.) mean better performance.

Environment: Windows 10 x64, latest AMD and Intel drivers. 2MB “large pages” were enabled and in use. Turbo / Boost was enabled on all configurations. All mitigations for vulnerabilities (Meltdown, Spectre, L1TF, MDS, etc.) were enabled as per Windows default where applicable.

SiSoftware Official Ranker Scores

- AMD Ryzen 7 5800X-3D 8-Core

- AMD Ryzen 9 5950X 16-Core/32-Thread

- AMD Ryzen 7 5800X 8-Core/16-Thread

- AMD Ryzen 5 5600X 6-Core/12-Thread

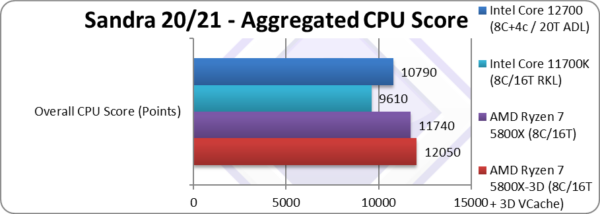

Final Thoughts / Conclusions

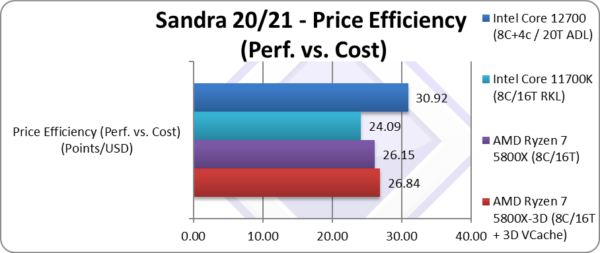

Summary: Recommended if moving to Zen3 from older versions of Ryzen (8/10).

Perhaps the biggest issue with the 3D V-Cache Zen3 is that the original (standard) Zen3 is so good that the enormous 3D cache does not make more of a difference. The original Zen3’s L3 cache (32MB) is already large (compared to all but the most recent CPUs, especially the Intel competition), provides good bandwidth, has reasonably low latencies and is already unified!

As 3D Zen3 has lower base/turbo clocks, it is already at a bit of a disadvantage versus the original Zen3, and in raw compute workloads it is naturally ~5% slower. In workloads with small data sets that already fit in the original L3 cache (32MB), the higher latency and slightly lower bandwidth of the 3D L3 cache make it slightly slower than the original Zen3 yet again.

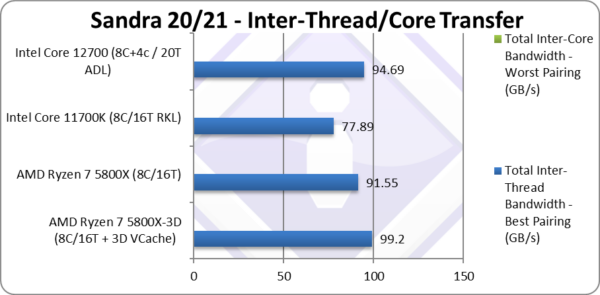

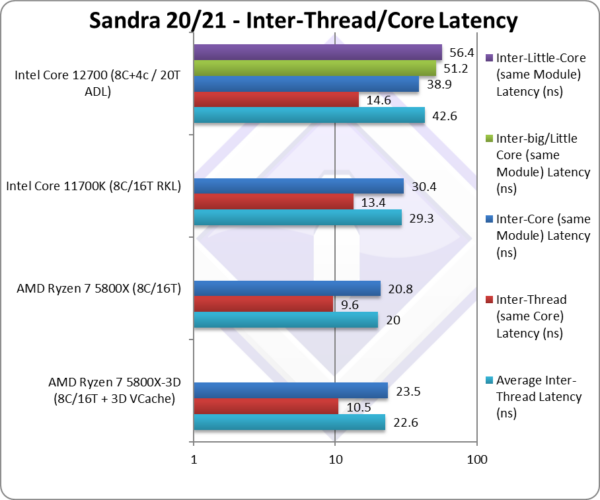

We do see good gains in inter-thread transfer bandwidth (when larger blocks are transferred between threads) of about +9% overall, and cache & memory bandwidth is about +20% higher overall (when larger blocks are read/written), which can improve some algorithms (e.g. GEMM) by over 70%. But it all depends on the dataset size.
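To make the dataset-size dependence concrete for GEMM, the sketch below estimates the largest square matrix dimension N for which all three N x N matrices (A, B and C) fit in each L3 size. It is a back-of-the-envelope calculation, assuming dense matrices, 1MB = 2^20 bytes, and the whole L3 being available to the data; it does not model the blocking strategy of any particular GEMM implementation. The function name `max_gemm_dim` is illustrative.

```python
import math

MIB = 1024 * 1024  # assumption: the quoted "MB" cache sizes are binary megabytes


def max_gemm_dim(cache_mb: int, elem_bytes: int, matrices: int = 3) -> int:
    """Largest N such that `matrices` dense N x N matrices fit in the cache."""
    elems_per_matrix = (cache_mb * MIB) // (matrices * elem_bytes)
    return math.isqrt(elems_per_matrix)


for label, elem_bytes in (("double (8 bytes)", 8), ("float (4 bytes)", 4)):
    n_std = max_gemm_dim(32, elem_bytes)  # standard Zen3 L3
    n_3d = max_gemm_dim(96, elem_bytes)   # 3D V-Cache L3
    print(f"{label}: N <= {n_std} fits in 32MB, N <= {n_3d} fits in 96MB")
```

Under these assumptions, double-precision problems with N between roughly 1200 and 2000 are the ones that fit the 3D L3 but not the standard one, which is the kind of range where the large gains would be expected.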

If you work with datasets comparable to the new 3D L3 cache size – you will thus see a big uplift in performance. Otherwise, you may well see a small decrease in performance. Thus it is a very niche product – but at the same price point & TDP – it is one we’d choose over the original if moving to Zen3 from older versions. In effect, it is the “top-end” 8-core AM4 socket Ryzen!

But for “top-end” AM4 socket performance – there are higher core-count Zen3 versions, all the way up to the 16-core 5950X – which may also be “upgraded” to 3D V-Cache at some point – that also have larger (2x 32MB aka 64MB) total L3 cache. With more cores/threads, the 3D Zen3 cannot be expected to match/beat them just with an L3 cache upgrade.

Please see the other reviews on different Ryzen variants:

- AMD Ryzen 7 5800X (Zen3) Review & Benchmarks – CPU 8-core/16-thread Performance

- AMD Ryzen 9 5950X (Zen3) Review & Benchmarks – CPU 16-core/32-thread Performance

- AMD Ryzen 5 5600X (Zen3) Review & Benchmarks – CPU 6-core/12-thread Performance
