What is “Surface Pro X”?

It is Microsoft’s Arm64 range of Surface tablets – rather than the standard Intel x86 Surface Pro range (e.g. 3, 6, 8, etc.). After the disastrous Windows RT ARM tablets, Microsoft is trying again – this time with full 64-bit Arm64 Windows 10/11, good memory and storage options plus 4G/LTE connectivity. It looks good on paper – what is the reality?

Microsoft is also using their “own” SoC called “SQ” – a rebranded Qualcomm 8cx (4 big cores + 4 LITTLE cores) SoC as found in other competing tablets (Samsung, Lenovo, etc.). The number represents the 8cx generation (SQ1 – Gen 1, SQ2 – Gen 2) with the latter bringing faster clocks, updated graphics and generally having double memory (16GB LP-DDR4X vs. 8GB).

What is “Windows Arm64”?

It is the 64-bit version of client Windows 10/11 for Arm64 (AArch64) devices – analogous to the current x64 Windows 10/11 for Intel & AMD CPUs. While “desktop” Windows 8.x has been available for ARM (AArch32) as Windows RT – it did not allow running of non-Microsoft native Win32 applications (like Sandra) and also did not support emulation for current x86/x64 applications.

We should also recall that Windows Phone (though now dead) has always run on ARM devices (and other architectures) and was first to unify the Windows desktop and Windows CE kernels. Windows was first ported to 64-bit with the long-dead Alpha64 (Windows NT 4), then Itanium IA64 (Windows XP 64), x64 (AMD64/EM64T) and now Arm64 – which shows the versatility of the NT micro-kernel.

By contrast, Windows 10/11 Arm64 is able to run native AArch64 applications (compiled for Arm64 like Sandra) as well as emulating 32-bit x86 and ARM (AArch32) applications through “WoW” (Windows on Windows emulation). But *not* x64 applications! Ouch!

While Arm64 Windows 10/11 includes in-box drivers for many peripherals/devices – it may not have a driver for very new peripheral/device; some manufacturers may not (even) provide any support for Arm64. For some devices, standard in-box “class” drivers (e.g. NVMe controller/SSD, AHCI controller/SSD, USB controller, keyboard, mouse, etc.) do work but otherwise a custom driver is required. [Note there has never been any emulation for kernel drivers, in any version of Windows]

Microsoft SQ1, SQ2 SoC Details

- Qualcomm Snapdragon 8cx derived

- Gen 1 for SQ1, Gen 2 for SQ2

- ARMv8.2-A (Arm64) 64-bit cores

- 4x Kryo 495 Gold “big-cores” (based on Cortex-A76) @ 3.0-3.15GHz

- Arm64 v8.2 4-wide OoO design

- 64kB + 64kB L1, 128-512kB L2 per core

- ~25-33% performance increase over Cortex A75

- Cortex A75 provided ~20% performance increase over A73

- Cortex A73 provided ~30% performance increase over A72 (e.g. Raspberry Pi 4B cores)

- 4x Kryo 495 Silver “little-cores” (based on Cortex-A55) @ 1.8-2.4GHz

- Arm64 v8.2 2-wide in-order

- 32kB + 32kB L1, 64kB-256kB L2 per core

- ~15% power efficiency, ~18% performance increase over Cortex A53 (e.g. Raspberry Pi 3B(+) cores)

- 7nm process (TSMC)

- TDP ~5W

- Unified 4MB L3 / LLC cache

- System Cache 3MB (also used by GP-GPU)

- Memory LP-DDR4X 2x 2133 (4266) 8x 16-bit ~68GB/s

- Qualcomm Adreno 685, 690 GP-GPU

- 3CU 768SP 661MHz core clock

- DirectX 12, 11 support

- No OpenCL support in the driver. Boo!

Compared to Intel SoCs, we have more “real” cores (8) but the same number of threads (8T) as ARM has not implemented SMT (Hyperthreading) as Intel and AMD have. Otherwise, we have a pretty modern process (7nm), good clocks (in excess on 3GHz for big cores), decent L3/LLC cache (4MB) and high-bandwidth LP-DDR4X memory (8 channels of 16-bit).

ARM Advanced Instructions Support

SIMD: As in the x86 world, ARM supports SIMD instructions called “NEON” operating on 128-bit width registers – equivalent to SSE2. However, there are 32 of them – while x64 SSE/AVX only provide 16 – until AVX512 which also provides 32. In most algorithms we can use them in batches of 4 – effectively making them 512-bit!

Unlike newer cores, the older A7X cores do not support SVE (“Scalable Vector eXtensions” – the successor of NEON); current designs are still just 128-bit width although they do provide more flexibility especially when implementing complex algorithms.

Crypto: Similar to x86, ARM does provide hardware-accelerated (HWA) encryption/decryption (AES, SM3) as well as hashing (SHA1, SHA2, SHA3, SM4). Unlike, say, the Raspberry Pi SoCs, Qualcomm naturally includes crypto extensions. Yay!

Virtualisation: The ARM cores do have hardware virtualisation – and here Qualcomm has the necessary firmware functionality to enable Hyper-V on Windows 11 to run corresponding ARM/Arm64 virtual machines. Or perhaps emulate x86 through QEMU? Both VmWare and Proxmox include support should you wish to use different virtualisation technology. Yay!

Security Extensions: ARM cores since ARMv6 (!) have included TrustZone secure virtualisation. AMD’s own recent CPUs all contain an ARM core (Cortex A5?) supporting TrustZone handling the security functionality (e.g. PSP / firmware-emulated TPM). See our article

Crypto-processor (TPM) Benchmarking: Discrete vs. internal AMD, Intel, Microsoft HV.

TPM: in order to support Windows 11, Surface X does include TPM support. Thus you can use BitLocker disk encryption as well as other security-based virtualisation features (like Core Isolation / Memory Integrity). Yay!

Changes in Sandra to support ARM

As a relatively old piece of software (launched all the way back in 1997, yep we’re really old), Sandra contains a good amount of legacy but optimised code, with the benchmarks generally written in assembler (MASM, NASM and previously YASM) for x86/x64 using various SIMD instruction sets: SSE2, SSSE3, SSE4.1/4.2, AVX/FMA3, AVX2 and finally AVX512. All this had to be translated in more generic C/C++ code using templated instrinsics implementation for both x86/x64 and ARM/Arm64.

As a result, some of the historic benchmarks in Sandra have substantially changed – with the scores changing to some extent. This cannot be helped as it ensures benchmarking fairness between x86/x64 and ARM/Arm64 going forward.

For this reason we recommend using the very latest version of Sandra and keep up with updated versions that likely fix bugs, improve performance and stability.

CPU core performance Benchmarking

In this article we test CPU core performance; please see our other articles on:

- Windows Arm64 WoW – x86 Emulation Performance

- Windows Arm64 on Qualcomm Snapdragon 7c Performance

- Raspberry Pi 4B Review: Windows Arm64 on Broadcom BCM2711

- Raspberry Pi 3B+ Review: Windows Arm64 on Broadcom BCM2837

- Crypto-processor (TPM) Benchmarking: Discrete vs. internal AMD, Intel, Microsoft HV

Hardware Specifications

We are comparing the Arm64 processors with x86/x64 processors of similar vintage – all running current Windows 10/11, latest drivers.

| Specifications | Microsoft SQ2 | Raspberry Pi 4B | Intel Core i5-6300 | Intel Core i5-8265 | Comments | |

| Arch(itecture) | Kryo 495 Gold (Cortex A76) + Kryo 495 Silver (Cortex A55) 7nm | Cortex A72 16nm | Skylake-ULV (Gen6) | WhiskeyLake-ULV (Gen8) | big+LITLE cores vs. SMT | |

| Launch Date |

Q3 2020 | 2019 | Q3 2015 | Q3 2018 | Much newer design | |

| Cores (CU) / Threads (SP) | 4C + 4c (8T) | 4C / 4T | 2C / 4T | 4C / 8T | Same number of threads | |

| Rated Speed (GHz) | 1.8 | 1.5 | 2.5 | 1.5 | Similar base clock | |

| All/Single Turbo Speed (GHz) |

3.14 | 2.0 | 3.0 | 3.9 | Turbo could be higher | |

| Rated/Turbo Power (W) |

5-7 | 4-6 | 15-25 | 15-25 | Much lower TDP, 1/3x Intel | |

| L1D / L1I Caches | 4x 64kB | 4x 64kB | 4x 32kB 2-way | 4x 48kB 3-way | 2x 32kB | 2x 32kB | 4x 32kB | 4x 32kB | Similar L1 caches | |

| L2 Caches | 4x 512kB | 4x 128kB | 1MB 16-way | 2x 256kB | 4x 256kB | L2 is 2x on big cores | |

| L3 Cache(s) | 4MB | n/a | 3MB | 4MB | Same size L3 | |

| Special Instruction Sets |

v8.2-A, VFP4, AES, SHA, TZ, Neon | v8-A, VFP4, TZ, Neon | AVX2/FMA, AES, VT-d/x | AVX2/FMA, AES, VT-d/x | All 8.2 instructions | |

| SIMD Width / Units |

128-bit | 128-bit | 256-bit | 256-bit | Neon is not wide enough | |

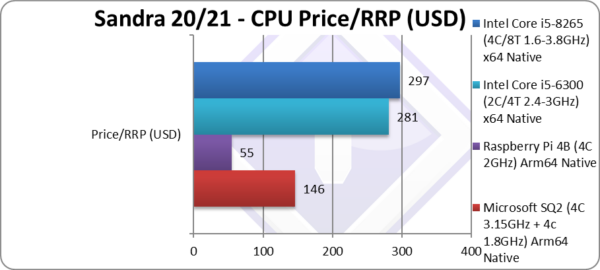

| Price / RRP (USD) |

$146 | $55 (whole SBC) | $281 | $297 | Pi price is for whole BMC! (including memory) | |

Disclaimer

This is an independent review (critical appraisal) that has not been endorsed nor sponsored by any entity (e.g. Microsoft, etc.). All trademarks acknowledged and used for identification only under fair use.

And please, don’t forget small ISVs like ourselves in these very challenging times. Please buy a copy of Sandra if you find our software useful. Your custom means everything to us!

Native Performance

We are testing native arithmetic, SIMD and cryptography performance using the highest performing instruction sets, on both x64 and Arm64 platforms.

Results Interpretation: Higher values (GOPS, MB/s, etc.) mean better performance.

Environment: Windows 10 x64/Arm64, latest drivers. 2MB “large pages” were enabled and in use. Turbo / Boost was enabled on all configurations where supported.

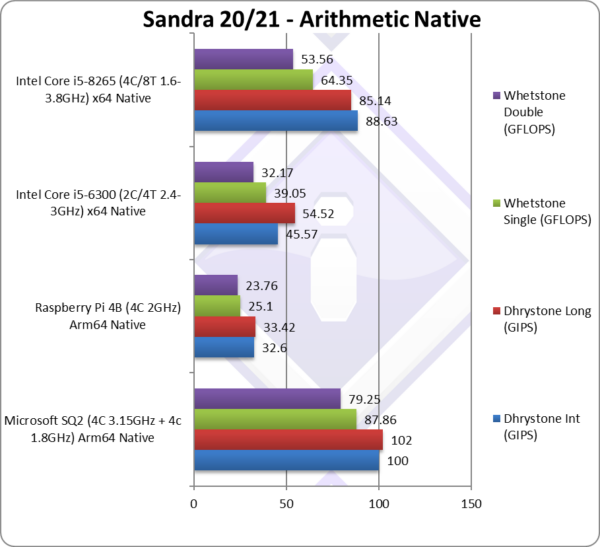

| Native Benchmarks | Microsoft SQ2 (4C 3.15GHz + 4c 1.8GHz) Arm64 Native | Raspberry Pi 4B (4C 2GHz) Arm64 Native | Intel Core i5 6300 (2C/4T 2.4-3GHz) x64 Native | Intel Core i5 8265 (4C/8T 1.6-3.8GHz) x64 Native | Comments | |

|

||||||

|

Native Dhrystone Integer (GIPS) | 100 [+12%] | 32.6 | 45.57** | 88.63** | SQ is 12% faster than a i5 ULV! |

|

Native Dhrystone Long (GIPS) | 102 [+19%] | 33.42 | 54.52** | 85.14** | A 64-bit integer workload is 19% faster! |

|

Native FP32 (Float) Whetstone (GFLOPS) | 87.86 [36%] | 25.1 | 39.05* | 64.35* | With floating-point, SQ is 35% faster! |

|

Native FP64 (Double) Whetstone (GFLOPS) | 79 [+47%] | 23.76 | 32.17* | 53.56* | With FP64 SQ is almost 50% faster |

| In these standard legacy tests, SQ does extremely well – with the 8 cores decimating the equivalent WHL Core i5 by 20% on integer (despite scalar AVX2) and as much as 50% on floating-point (despite scalar SSE3!).

It is a great showing for Microsoft/Qualcomm and Arm64 – this means native “standard” applications (non-vectorised workload) perform even better on ARM despite the 1/3 power(!) Perhaps this is why Apple has moved to their own ARM-based SoC (M1) and ditched Intel. For mobile (phone, laptop, tablet) ARM seems unbeatable. Note*: using SSE2-3 SIMD processing. Note**: using AVX2 processing. |

||||||

|

||||||

|

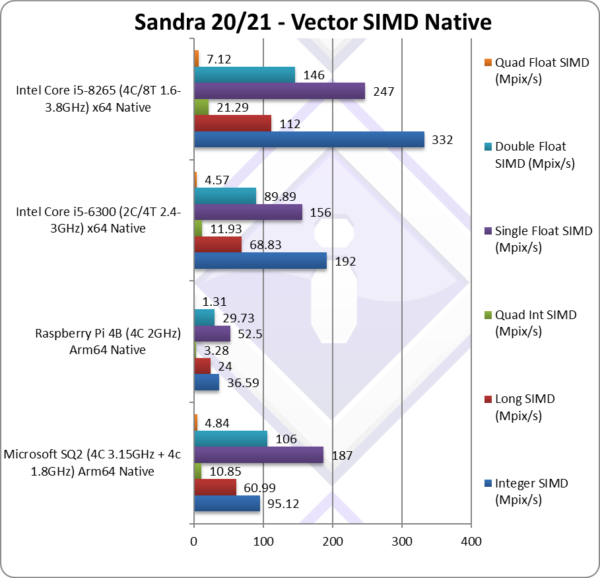

Native Integer (Int32) Multi-Media (Mpix/s) | 95.12** [1/3x] | 36.6** | 192* | 332* | SQ is 1/3x WHL with AVX2. |

|

Native Long (Int64) Multi-Media (Mpix/s) | 60.99** [1/2x] | 24** | 68.83* | 112* | With a 64-bit, SQ is only 1/2x WHL. |

|

Native Quad-Int (Int128) Multi-Media (Mpix/s) | 10.85** [1/2x] | 3.28** | 11.93* | 21.29* | Using 64-bit int to emulate Int128, SQ is 1/2x WHL. |

|

Native Float/FP32 Multi-Media (Mpix/s) | 187** [-25%] | 52.5** | 156* | 247* | In this FP32 vectorised SQ is only 25% slower! |

|

Native Double/FP64 Multi-Media (Mpix/s) | 106** [-28%] | 29.73** | 89.89* | 146* | Switching to FP64 SQ is 28% slower. |

|

Native Quad-Float/FP128 Multi-Media (Mpix/s) | 4.84** [-33%] | 1.31** | 4.57* | 7.12* | Using FP64 to mantissa extend FP128 SQ is 33% slower. |

| With heavily vectorised SIMD workloads – SQ with just 128-bit wide Neon cannot match the power of AVX2 256-bit Core performance, but is generally 1/2x slower on integer workloads and only 25-33% slower on floating-point. Let’s also note that Sandra itself is only recently been ported to Arm64 and thus not as well optimised as over 20-years (!) in x86 land.

With SVE bringing much wider SIMD processing, we expect to see Arm64 designs overtake Intel in SIMD processing also – especially since they have killed AVX512 on mobile/desktop (with “AlderLake”/”RaptorLake”). Note*: using AVX2/FMA3 256-bit SIMD processing. Note**: using NEON 128-bit SIMD processing. |

||||||

|

||||||

|

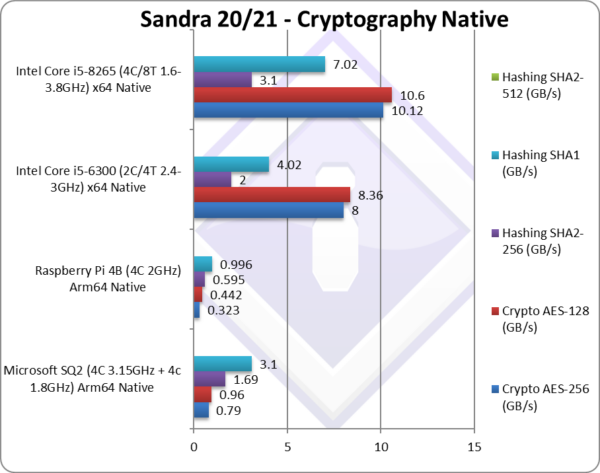

Crypto AES-256 (GB/s) | 0.79 | 0.323 | 8* | 10.12* | We are working on adding AES acceleration. |

|

Crypto AES-128 (GB/s) | 0.96 | 0.442 | 8.36* | 10.6* | No change with AES128. |

|

Crypto SHA2-256 (GB/s) | 1.69** [1/2x] | 0.595** | 2*** | 3.1*** | We need SHA acceleration here |

|

Crypto SHA1 (GB/s) | 3.1** [-66%] | 0.996** | 4.02*** | 7.02*** | Less compute intensive SHA1. |

|

Crypto SHA2-512 (GB/s) | SHA2-512 is not accelerated by SHA HWA. | ||||

| SQ naturally provides AES HWA (hardware acceleration) which we have not yet enabled in Sandra. Thus the relatively large performance delta vs. AES-enabled WHL Intel is thus expected and will be corrected.

SQ also provides SHA HWA (hardware acceleration) – unlike WHL Intel – but this is again not yet enabled in Sandra. However, multi-buffer NEON (128-bit wide) does quite well against AVX2 (256-bit wide) – likely due to the relatively high LP-DDR4X bandwidth. Note*: using AES HWA (hardware acceleration). Note**: using NEON multi-buffer hashing. Note***: using AVX2 multi-buffer hashing. |

||||||

|

||||||

|

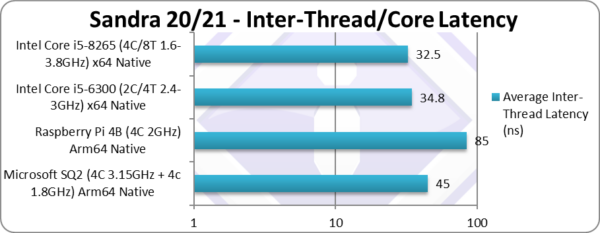

Inter-Module (CCX) Latency (Same Package) (ns) | 45 | 85 | 42.3 | 35.1 | Similar inter-core latency to Intel. |

| With big and LITTLE core clusters, the inter-core latencies vary greatly between big-2-big, LITTLE-2-LITTLE and big-2-LITTLE cores. Here we present the inter big-cores latencies that are comparable with Intel’s designs. Judicious thread scheduling is needed so that data transfers are efficient. | ||||||

|

||||||

|

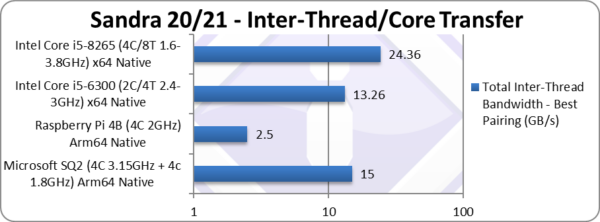

Total Inter-Thread Bandwidth – Best Pairing (GB/s) | 15** | 2.5** | 13.26* | 24.36* | Similar overall bandwidth to Intel. |

| As with latencies, inter-core bandwidths vary greatly between big-2-big, LITTLE-2-LITTLE and big-2-LITTLE cores, here we present the bandwidth between the big cores. Judicious thread scheduling is needed so that data transfers are efficient.

Note:* using AVX2 256-bit wide transfers. Note**: using NEON 128-bit wide transfers. |

||||||

|

||||||

|

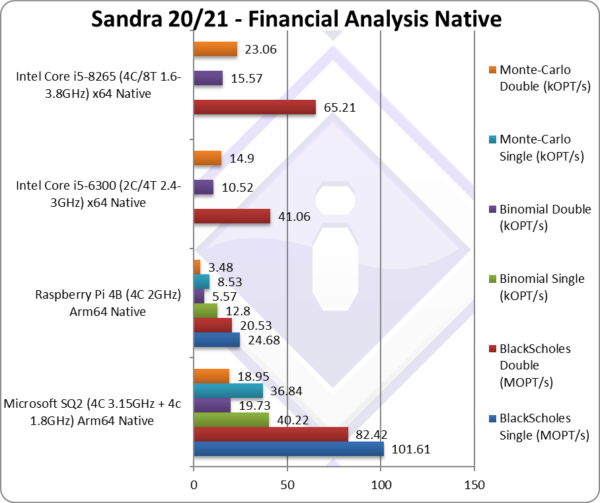

Black-Scholes float/FP32 (MOPT/s) | 101.61 | 24.68 | Black-scholes is un-vectorised and compute heavy. | ||

|

Black-Scholes double/FP64 (MOPT/s) | 82.42 [+26%] | 20.53 | 41.06 | 65.21 | Using FP64, SQ is 26% faster. |

|

Binomial float/FP32 (kOPT/s) | 40.22 | 12.8 | Binomial uses thread shared data thus stresses the cache & memory system. | ||

|

Binomial double/FP64 (kOPT/s) | 19.73 [+26%] | 5.47 | 10.52 | 15.57 | With FP64, SQ is still 26% faster. |

|

Monte-Carlo float/FP32 (kOPT/s) | 36.84 | 8.53 | Monte-Carlo also uses thread shared data but read-only thus reducing modify pressure on the caches. | ||

|

Monte-Carlo double/FP64 (kOPT/s) | 18.95 [-18%] | 3.48 | 14.9 | 23.06 | Switching to FP64, SQ is only 18% slower. |

| With non-SIMD financial workloads, similar to what we’ve seen in legacy code (Dhrystone, Whetstone), SQ performs a lot better – it is generally 25% faster than Intel competition. This means normal (native) applications perform exceptionally well. | ||||||

|

||||||

|

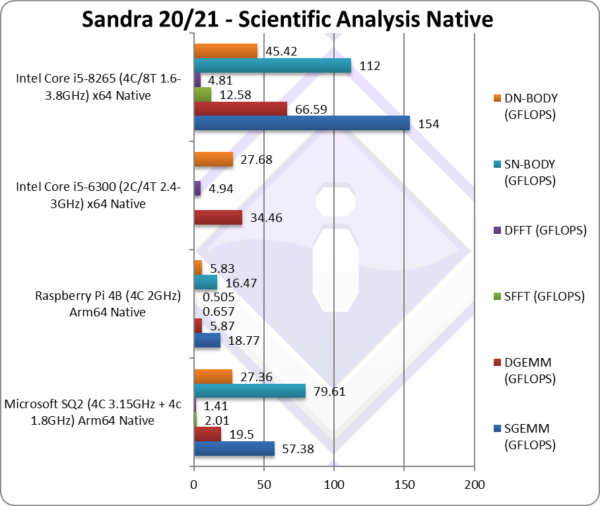

SGEMM (GFLOPS) float/FP32 | 57.38* | 18.77* | In this tough vectorised algorithm SQ does well | ||

|

DGEMM (GFLOPS) double/FP64 | 19.5* [1/3x] | 5.87* | 34.46** | 66.59** | With FP64 vectorised code, SQ is 1/3x WHL. |

|

SFFT (GFLOPS) float/FP32 | 2.01* | 0.657* | FFT is also heavily vectorised but memory dependent. | ||

|

DFFT (GFLOPS) double/FP64 | 1.41* [1/3x] | 0.505* | 4.94** | 4.81** | With FP64 code, SQ is 1/3x slower. |

|

SN-BODY (GFLOPS) float/FP32 | 79.61* | 16.47 | N-Body simulation is vectorised but with more memory accesses. | ||

|

DN-BODY (GFLOPS) double/FP64 | 27.36* [-40%] | 5.83 | 27.68** | 45.42** | With FP64 SQ is finally 40% slower. |

| With highly vectorised SIMD code (scientific workloads), it is clear that a lot of work is needed to optimise code for ARM to get it to match x86/x64 in performance. In some algorithms (GEMM, N-BODY) it is doing well – but overall more optimisations need to be made.

Note*: using AVX2/FMA3 256-bi5 SIMD. Note**: using NEON 128-bit SIMD. |

||||||

|

||||||

|

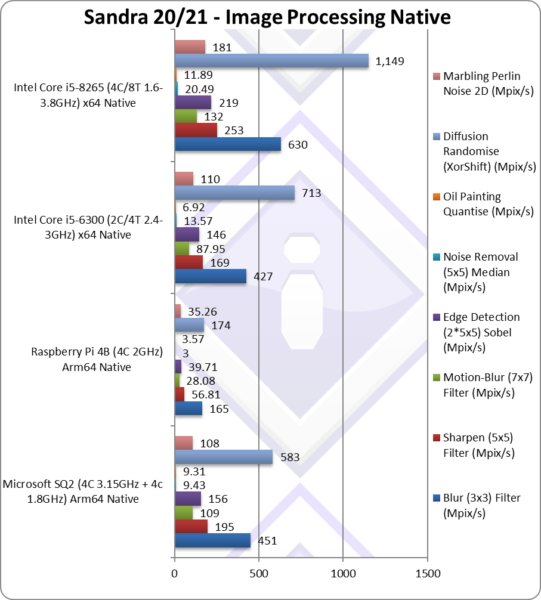

Blur (3×3) Filter (MPix/s) | 451** [-39%] | 165** | 427* | 630* | In this vectorised integer workload SQ is 39% slower. |

|

Sharpen (5×5) Filter (MPix/s) | 195** [-23%] | 56.81** | 169* | 253* | Same algorithm but more shared data SQ is 23% slower. |

|

Motion-Blur (7×7) Filter (MPix/s) | 109** [-18%] | 28.08** | 87.95* | 132* | Again same algorithm but even more data, SQ is 18% slower. |

|

Edge Detection (2*5×5) Sobel Filter (MPix/s) | 156** [-29%] | 39.71** | 146* | 219* | Different algorithm but still vectorised workload SQ is 29% slower. |

|

Noise Removal (5×5) Median Filter (MPix/s) | 9.43** [-54%] | 3** | 13.57* | 20.49* | Still vectorised code SQ is 1/2x slower. |

|

Oil Painting Quantise Filter (MPix/s) | 9.31** [-22%] | 3.57** | 6.92* | 11.89* | In this tough filter, SQ is 22 slower. |

|

Diffusion Randomise (XorShift) Filter (MPix/s) | 583** [1/2x] | 174** | 713* | 1,149* | With 64-bit integer workload, SQ is 1/2x slower. |

|

Marbling Perlin Noise 2D Filter (MPix/s) | 108** [-40%] | 35.26** | 110* | 181* | In this final test (scatter/gather) SQ is 40% slower. |

| We know these benchmarks *love* SIMD, with AVX2/AVX512 always performing strongly – thus Intel with 256-bit wide AVX2 has the advantage against SQ’s 128-bit NEON. In general the difference is not as high as 50% but rather 20-40% which is a good result.

Again, as with other compute-heavy algorithms – these days such algorithms would be offloaded to the Cloud or locally to the GP-GPU – thus the CPU does not need to be a SIMD speed demon. Note*: using AVX2 256-bit SIMD. Note**: using NEON 128-bit SIMD. |

||||||

|

||||||

|

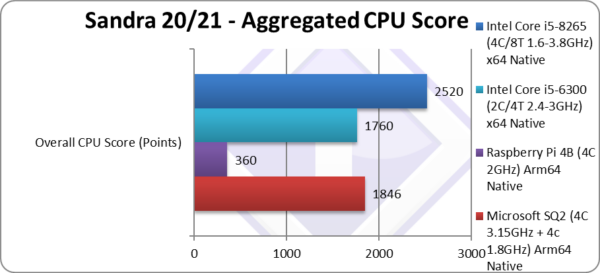

Aggregate Score (Points) | 1,846 [-27%] | 360 | 1,760 | 2,520 | Across all benchmarks, SQ is 27% slower. |

| Overall, SQ is just 27% slower than Intel’s WHL – with the SIMD performance bringing the overall score down. However, let’s recall that non-SIMD performance is usually 2x higher than Intel’s. With optimisations we are confident the score will only improve – while Intel is pretty much fully optimised and unlikely to extract more performance. | ||||||

|

||||||

|

Price/RRP (USD) | $146 [1/2x] | $55 (whole SBC) | $281 | $297 | SQ is 1/2 price of Intel’s CPUs. |

|

||||||

|

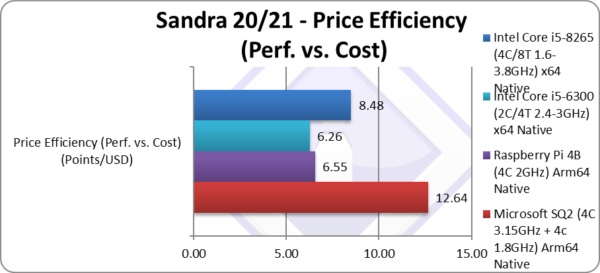

Price Efficiency (Perf. vs. Cost) (Points/USD) | 12.64 [+49%] | 6.55 | 6.26 | 8.48 | SQ is 50% better value. |

| Going by RRP SoC prices, SQ ends up 50% better value as despite lower performance it is half the price of Intel’s SoCs! This should translate in much cheaper tablet or better specs which is something we all want! | ||||||

|

||||||

|

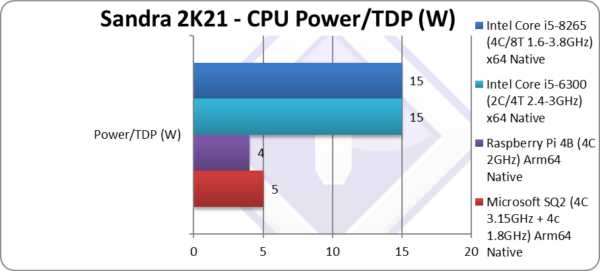

Power/TDP (W) | 5-7W [1/3x] | 4W | 15-25W | 15-25W | SQ is a third (1/3x) the power of Intel’s Core. |

|

||||||

|

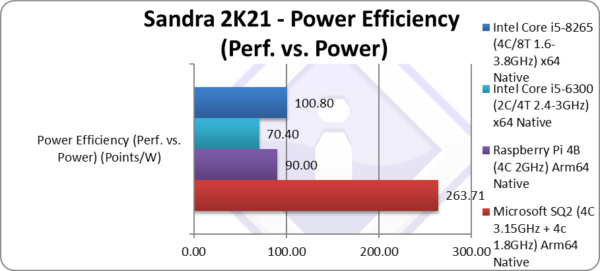

Power Efficiency (Perf. vs. Power) (W) | 263 [+2.6x] | 90 | 70.40 | 100 | SQ is over 2.5x better power efficiency |

| SQ’s power usage is so low (~5W) that it is at least 2.5x better power efficient than Intel’s designs – and we’re even ignoring that in Turbo Intel consumes even more thus the power efficiency is stellar. | ||||||

SiSoftware Official Ranker Scores

- Microsoft SQ2 (Microsoft Surface X)

- Raspberry Pi 4 Model B (Broadcom BCM2711)

- Raspberry Pi 3 Model B+ (Broadcom BCM2837)

- Apple Silicon on Parallels VM

Is x86 Dead?

For tablet/mobile – it seems so… Apple knew something when they abandoned x86 for Arm…

ARM is moving very quickly, unlike x86, where despite introducing new technologies (e.g. hybrid aka big/LITTLE) seems to go one step forward, one step back (AVX512 killed). Microsoft’s SQ shows what a relatively modern Arm64 SoC can do at far less power – and using the relatively old Cortex A76 cores, not the very latest X1/X2 ARMv9 designs.

The only weakness – SIMD vectorised performance – is not that far behind (50% aka 1/2x) and is already being addressed by SVE/SVE2 in the newest cores. SVE offers even wider SIMD registers (up to 2048-bit) – while Intel has recently canned AVX512 (512-bit) and is left with old AVX2/FMA3 (256-bit). Still, software for tablet/mobile won’t be using compute-heavy SIMD workloads anyway – with such workloads either offloaded to the Cloud or perhaps the integrated GP-GPU (Adreno here). But for performance desktops?

The power (TDP) difference is so stark (5W vs. 15-25W on Intel) that it should be possible to double the number of Arm64 cores (e.g. 8 big + 8 LITTLE) and still consume less power (e.g. 10-15W) than the competition. We really need a SoC like Apple’s M1 (or better) that can rival higher compute power devices.

Final Thoughts / Conclusions

Surface Pro X: Remarkable Arm64 Device: 9/10!

Microsoft has long provided Arm devices – from Windows CE, Windows Mobile/Phone and finally Windows RT tablets – but like Apple has finally “seen the light” and provided an attractive, powerful, unrestricted device (i.e. using full Windows 10/11 Pro). It is definitely the best entry into the series and has done well so far.

The performance of Microsoft’s SQ is definitely up there with recent Intel Core i5 ULV CPUs when running native Arm64 applications – which sadly are not many of… yet! But with developer support, their numbers are increasing quickly – with all big names either shipping or soon-t0-ship native applications. Even small ISVs like ourselves (and many others) have provided native Arm64 versions of popular utilities. “If you build it, they will come…”

SIMD performance is weaker at 33-50% slower (1/2x) but less of a problem on tablet/mobile – unlike say desktop/server. Such workloads are today commonly offloaded to the GP-GPU that while internal (not discrete) is still more powerful than most CPUs.

The WoW (Windows-on-Windows) emulation of non-native applications (x86/x64) is somewhat limited than, say, Apple’s. It is about slower 50% (1/2x) than native Arm64 and only supports 32-bit x86 applications, while most software today is provided as 64-bit x64. Microsoft really needs to improve this and quickly…

The power usage is so low that the power efficiency (performance vs. power) is much higher than the competition and thus allows for far longer battery life – which on a tablet is likely much more important than raw power. In this is true for you – then an Arm64 Surface Pro X is what you need.

One thing to remember – is that firmware and driver updates are crucial; Microsoft has pretty good track record here with Surface Pro, although still lacking compared to say enterprise/business OEMs like Dell, HP, Lenovo and this will determine how much of a future ARM Windows tablets do have. Here Intel used to have a great track record until they decided to support only 2 generation old products.

Hopefully, we will now see good value but well-spec’d tablets from other OEMs (Dell, HP, etc.) that are perhaps better spec’d than the existing ones (e.g. Samsung with Galaxy Book, Lenovo). We are eagerly waiting to see…

Surface Pro X: Remarkable Arm64 Device: 9/10!

Further Articles

Please see our other articles on:

- Windows Arm64 WoW – x86 Emulation Performance

- Windows Arm64 on Qualcomm Snapdragon 7c Performance

- Raspberry Pi 4B Review: Windows Arm64 on Broadcom BCM2711

- Raspberry Pi 3B+ Review: Windows Arm64 on Broadcom BCM2837

- Crypto-processor (TPM) Benchmarking: Discrete vs. internal AMD, Intel, Microsoft HV

Disclaimer

This is an independent review (critical appraisal) that has not been endorsed nor sponsored by any entity (e.g. Microsoft, etc.). All trademarks acknowledged and used for identification only under fair use.

And please, don’t forget small ISVs like ourselves in these very challenging times. Please buy a copy of Sandra if you find our software useful. Your custom means everything to us!