What is “WoW”?

“WoW” stands for “Windows-on-Windows”: the compatibility/emulation layer that allows applications built for a different architecture to run on the same version of Windows. Originally (in the days of Windows NT) it allowed running 16-bit x86 legacy applications on 32-bit x86 Windows NT. Today, with 64-bit Windows, it allows 32-bit legacy applications to run. [Note that kernel drivers have always needed to be native; there has never been any emulation for drivers.]

In addition, different architecture Windows – e.g. 64-bit IA64/Itanium also allowed running 32-bit x86 applications and in the same vein, Arm64 Windows also allows 32-bit x86 applications to run. [We also had Alpha A64 in the NT4 days but that’s too far back]

Similarly, other operating systems like Apple Mac (OS X now) with “Rosetta” can run different architecture applications. Recently, Apple has dropped Intel in favour of ARM (after dropping PowerPC in favour of Intel) and has a similar compatibility layer for x86 to Arm64 translation. Although, since they went “all the way” – it seems they have done a more complete job…

What application types can Arm64 Windows emulate under WoW?

Current Arm64 Windows 10/11 can run the following types under WoW emulation:

- 32-bit ARM (ARMv7 AArch32) applications – YES

- 32-bit x86 (IA32) applications – YES

- 64-bit x64 (AMD64/EM64T) applications – YES, Windows 11 only

- 64-bit IA64 (Itanium) applications – NO, “it’s dead Jim”!

To start – 32-bit ARM applications are supported – yes, if you happen to have a “Windows RT” copy of Microsoft Office ARM-version then it will work! And the few Metro/UWP ARM applications for Windows RT – we did not bother – will also work! Yay!

In Windows 11, 64-bit x64 applications are now supported, in addition to 32-bit x86 legacy applications that were originally supported by Windows 10. As pretty much all Windows applications today are x64 – this was an important emulation feature if Arm64 Windows is to be credible.
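As a rough illustration of the support list above, the sketch below reads the machine type from an executable's PE header and maps it to how Arm64 Windows would run it. The `IMAGE_FILE_MACHINE_*` values come from the PE/COFF format. The function names, the support table wording and the `fake_image` helper (which builds a header-only stub, not a real executable) are our own assumptions for demonstration.

```python
import struct

# IMAGE_FILE_MACHINE_* values from the PE/COFF format.
MACHINE_NAMES = {
    0x014C: "x86 (IA32)",
    0x8664: "x64 (AMD64)",
    0x01C4: "ARM 32-bit (ARMv7)",
    0xAA64: "Arm64 (AArch64)",
    0x0200: "IA64 (Itanium)",
}

# How each type runs on Arm64 Windows, following the list in this article.
ARM64_SUPPORT = {
    0xAA64: "native",
    0x01C4: "WoW, 32-bit ARM",
    0x014C: "WoW emulation",
    0x8664: "emulation, Windows 11 only",
    0x0200: "not supported",
}

def pe_machine(image: bytes) -> int:
    """Return the COFF Machine field of a PE image."""
    if image[:2] != b"MZ":
        raise ValueError("not an MZ executable")
    # e_lfanew (offset of the PE signature) lives at 0x3C in the DOS header.
    (pe_offset,) = struct.unpack_from("<I", image, 0x3C)
    if image[pe_offset:pe_offset + 4] != b"PE\0\0":
        raise ValueError("missing PE signature")
    # The 16-bit Machine field immediately follows the 4-byte signature.
    (machine,) = struct.unpack_from("<H", image, pe_offset + 4)
    return machine

def describe(image: bytes) -> str:
    machine = pe_machine(image)
    name = MACHINE_NAMES.get(machine, f"unknown (0x{machine:04X})")
    return f"{name}: {ARM64_SUPPORT.get(machine, 'unknown')}"

def fake_image(machine: int) -> bytes:
    """Hypothetical header-only stub used for the demo below."""
    dos = bytearray(0x40)
    dos[:2] = b"MZ"
    struct.pack_into("<I", dos, 0x3C, 0x40)
    return bytes(dos) + b"PE\0\0" + struct.pack("<H", machine)

if __name__ == "__main__":
    for m in (0xAA64, 0x01C4, 0x014C, 0x8664, 0x0200):
        print(describe(fake_image(m)))
```

On a real system you would pass the first few kilobytes of an `.exe` or `.dll` file to `describe()` instead of the stub.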

This (also) means emulated applications are no longer limited to just 2GB of memory (unless using AWE to access more, which is pretty rare) but can use any amount up to the CPU addressing limit (16-128GB). We have taken this for granted on x64 for more than a decade, and the 32-bit limit can be a serious issue with the large files and data sets used today (2022).

Microsoft is also using their “own” SoC called “SQ” – a rebranded Qualcomm 8cx (4 big cores + 4 LITTLE cores) SoC as found in other competing tablets (Samsung, Lenovo). The number represents the 8cx generation (SQ1 – Gen 1, SQ2 – Gen 2) with the latter bringing faster clocks, updated graphics and generally having double memory (16GB LP-DDR4X vs. 8GB).

What is “Windows Arm64”?

It is the 64-bit version of “desktop” Windows 10/11 for AArch64 ARM devices – analogous to the current x64 Windows 10/11 for Intel & AMD CPUs. While “desktop” Windows 8.x has been available for ARM (AArch32) as Windows RT – it did not allow running of non-Microsoft native Win32 applications (like Sandra) and also did not support emulation for current x86/x64 applications.

We should also recall that Windows Phone (though now dead) has always run on ARM devices and was first to unify the Windows desktop and Windows CE kernels – thus this is not a brand-new port. Windows was first ported to 64-bit with the long-dead Alpha64 (Windows NT 4) and then Itanium IA64 (Windows XP 64) which showed the versatility of the NT micro-kernel.

x86/x64 CPU Emulated Features on Arm64 WoW

The WoW emulation layer on Arm64 Windows presents a Virtual x86/x64 CPU with the following characteristics:

- Type: AMD Athlon 64 (!)

- Clock: same as Arm64 host

- Cores/Threads: same as Arm64 host (but can be restricted)

- Page Sizes: 4kB, 2MB (thus large pages available)

- L1D Cache: 16kB WT 4-way (not same as host – ouch!)

- L1I Cache: 32kB WB 4-way (not same as host – ouch!)

- L2 Cache: 512kB Adv 8-way (not same as host – ouch!)

WoW seems to be emulating an Athlon 64 in all aspects, including cache sizes – which is obsolete now; at least it is not a Pentium 4 as with Windows 10 emulation!

Reported cache sizes that do not match the host wreak havoc with optimised code – like ours – that sizes its buffers based on the L1D/L2 cache sizes (e.g. GEMM, N-Body, neural-networks CNN/RNN) – not to mention that cache bandwidth/latency measurements will be incorrect. Microsoft should really try to report the host cache sizes (L1, L2, L3), although for big/LITTLE hybrid (DynamiQ) CPUs this may be more difficult.
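To make the buffer-sizing problem concrete, here is a minimal sketch of how a blocked GEMM might pick its tile size from the reported L1D size. This is not Sandra's actual code: the function name, the "three tiles in half the cache" rule and the rounding to a multiple of 4 are all illustrative assumptions.

```python
import math

def gemm_tile_side(l1d_bytes: int, elem_bytes: int = 4,
                   tiles: int = 3, fill: float = 0.5) -> int:
    """Largest square tile side so that `tiles` tiles (A, B and C blocks)
    occupy at most `fill` of the L1 data cache."""
    budget_per_tile = l1d_bytes * fill / tiles
    side = math.isqrt(int(budget_per_tile // elem_bytes))
    # Round down to a multiple of 4 elements (one 128-bit FP32 vector).
    return max(4, side - side % 4)

if __name__ == "__main__":
    reported = gemm_tile_side(16 * 1024)   # 16kB L1D reported by the emulated CPU
    pi4_host = gemm_tile_side(32 * 1024)   # 32kB L1D on the Pi 4B host (Cortex A72)
    sq2_host = gemm_tile_side(64 * 1024)   # 64kB L1D on the SQ2 host
    print(reported, pi4_host, sq2_host)
```

Under these assumptions the emulated code would choose noticeably smaller tiles than the host caches could hold, so it makes more passes over memory than necessary.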

Here are the feature flags that this emulated x86/x64 CPU presents (we’ve cut some of the ones not present):

- FPU – Integrated Co-Processor : Yes

- TSC – Time Stamp Counter : Yes

- CX8 – Compare and Exchange 8-bytes Instruction : Yes

- CMOV – Conditional Move Instruction : Yes

- MMX – Multi-Media eXtensions : Yes (!)

- FXSR – Fast Float Save and Restore : Yes

- SSE – Streaming SIMD Extensions : Yes

- SSE2 – Streaming SIMD Extensions v2 : Yes

- HTT – Hyper-Threading Technology : Yes

- SSE3 – Streaming SIMD Extensions v3 : Yes

- SSSE3 – Supplemental SSE3 : Yes

- SSE4.1 – Streaming SIMD Extensions v4.1 : Yes

- SSE4.2 – Streaming SIMD Extensions v4.2 : Yes, Windows 11 only

- POPCNT – Pop Count : Yes

- AES – Accelerated Cryptography Support : No [TBD]

- AVX – Advanced Vector eXtensions : No

- AVX2 – Advanced Vector eXtensions v2 : No

- FMA3 – Fused Multiply-Add: No

- F16C – Half Precision Float Conversion : No

- RNG – Random Number Generator : No

Thus we have SSE2, (S)SSE3 and SSE4.1/4.2 SIMD 128-bit wide instruction sets as well as some compare/exchange and pop-count instructions. This makes sense as Arm64 NEON SIMD is 128-bit wide. We don’t have crypto AES, SHA or RNG hardware acceleration support [but note that Pi 4B host does not have them either].
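For readers who want to interpret such flags themselves, the sketch below decodes the standard CPUID leaf 1 feature bits (ECX/EDX) into the names used in the list above. The bit positions are the documented x86 ones. The register values in the demo are synthetic, built from the “Yes” entries in this article rather than captured from a device, and the helper names are our own.

```python
# Bit positions in CPUID leaf 1 (EAX=1).
# AVX2 is not listed here because it is reported in leaf 7 (EBX bit 5).
LEAF1_EDX_BITS = {"FPU": 0, "TSC": 4, "CX8": 8, "CMOV": 15, "MMX": 23,
                  "FXSR": 24, "SSE": 25, "SSE2": 26, "HTT": 28}
LEAF1_ECX_BITS = {"SSE3": 0, "SSSE3": 9, "FMA3": 12, "SSE4.1": 19,
                  "SSE4.2": 20, "POPCNT": 23, "AES": 25, "AVX": 28,
                  "F16C": 29, "RNG": 30}

def decode_leaf1(ecx: int, edx: int) -> dict[str, bool]:
    """Map raw leaf 1 register values to named feature flags."""
    flags = {name: bool(edx >> bit & 1) for name, bit in LEAF1_EDX_BITS.items()}
    flags.update({name: bool(ecx >> bit & 1) for name, bit in LEAF1_ECX_BITS.items()})
    return flags

def pack(bit_table: dict[str, int], names: set[str]) -> int:
    """Build a synthetic register value with the given features set."""
    value = 0
    for name in names:
        if name in bit_table:
            value |= 1 << bit_table[name]
    return value

# Features listed as "Yes" in this article (Windows 11 emulation).
REPORTED_YES = {"FPU", "TSC", "CX8", "CMOV", "MMX", "FXSR", "SSE", "SSE2",
                "HTT", "SSE3", "SSSE3", "SSE4.1", "SSE4.2", "POPCNT"}

ecx = pack(LEAF1_ECX_BITS, REPORTED_YES)
edx = pack(LEAF1_EDX_BITS, REPORTED_YES)
flags = decode_leaf1(ecx, edx)
missing = sorted(name for name, present in flags.items() if not present)

if __name__ == "__main__":
    print("missing:", ", ".join(missing))
```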

It is likely Microsoft will eventually add AVX/AVX2 support for SVE-enabled Arm64 CPUs – though SVE has variable width (from 128-bit to 2,048-bit) – but this can still help performance as wider vectors reduce register dependencies as we have relatively few registers on x86/x64.

In addition, a few extended (AMD) features are also supported:

- XD/NX – No-execute Page Protection : Yes

- RDTSCP – Serialised TSC : Yes

- AMD64/EM64T Technology : Yes, Windows 11 only

- 3D Now! Prefetch Technology : Yes (!)

Both page protection and 64-bit x64 support are available now. Virtualisation is supported on Qualcomm CPUs. [Pi 4 UEFI BIOS does not have the required functionality]
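The extended features above live in CPUID leaf 0x80000001 rather than leaf 1. A minimal sketch of checking them follows, with the caveat that the two example register values are illustrative only: they are assembled from the flags listed in this article (with and without the Windows 11-only 64-bit support), not read from hardware.

```python
# Bit positions in CPUID extended leaf 0x80000001.
EXT_EDX_BITS = {"NX": 20, "RDTSCP": 27, "LM": 29}   # LM = long mode (AMD64/EM64T)
EXT_ECX_BITS = {"3DNowPrefetch": 8}

def extended_features(ecx: int, edx: int) -> list[str]:
    """Return the names of the extended features set in the registers."""
    found = [name for name, bit in EXT_EDX_BITS.items() if edx >> bit & 1]
    found += [name for name, bit in EXT_ECX_BITS.items() if ecx >> bit & 1]
    return found

def supports_x64(edx: int) -> bool:
    """True when the (virtual) CPU advertises 64-bit long mode."""
    return bool(edx >> EXT_EDX_BITS["LM"] & 1)

# Illustrative register values only (assumed, not measured).
ecx_demo = 1 << 8
edx_without_x64 = (1 << 20) | (1 << 27)
edx_with_x64 = edx_without_x64 | (1 << 29)

if __name__ == "__main__":
    print(extended_features(ecx_demo, edx_with_x64))
    print(supports_x64(edx_without_x64), supports_x64(edx_with_x64))
```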

While better than Windows 10 bare minimum x86 support – it is still somewhat lacking, with no AVX/AVX2 support that most modern Windows applications use – perhaps not directly but through numerical libraries.

Here Apple Mac “Rosetta” emulation is still more advanced – but Apple has gone all in and completely replaced x86 with their own Arm64 (M1+) CPUs – thus they do need good x86/x64 emulation for users to move to the new architecture. Microsoft has no intention of abandoning x86/x64 just yet and is just hedging its bets (after all, after the “death” of Windows Phone, the ARM team had to have something to do).

Changes in Sandra to support ARM

As a relatively old piece of software (launched all the way back in 1997 (!)), Sandra contains a good amount of legacy but optimised code, with the benchmarks generally written in assembler (MASM, NASM and previously YASM) for x86/x64 using various SIMD instruction sets: SSE2, SSSE3, SSE4.1/4.2, AVX/FMA3, AVX2 and finally AVX512. All this had to be translated into more generic C/C++ code using a templated intrinsics implementation for both x86/x64 and ARM/Arm64.

As a result, some of the historic benchmarks in Sandra have substantially changed – with the scores changing to some extent. This cannot be helped as it ensures benchmarking fairness between x86/x64 and ARM/Arm64 going forward.

For this reason we recommend using the very latest version of Sandra and keeping up with updated versions, which are likely to fix bugs and improve performance and stability.

CPU Performance Benchmarking

In this article we test CPU core performance; please see our other articles on:

- CPU/SoC

- Microsoft Surface Pro X – Windows Arm64 on Microsoft SQ2 Performance

- Windows 10 Arm64 WoW – x86 Emulation Performance

- Windows Arm64 on Qualcomm Snapdragon 7c Performance

- Raspberry Pi 4B Review: Windows Arm64 on Broadcom BCM2711

- Raspberry Pi 3B+ 1GB Review: Windows Arm64 on Broadcom BCM2837

- Crypto-processor (TPM) Benchmarking: Discrete vs. internal AMD, Intel, Microsoft HV

Hardware Specifications

We are comparing the Microsoft/Qualcomm SoC with Intel ULV x64 processors of similar vintage – all running current Windows 11 with the latest drivers.

| Specifications | Microsoft SQ2 | Raspberry Pi 4B | Intel Core i5-6300 | Intel Core i5-8265 | Comments |
|---|---|---|---|---|---|
| Arch(itecture) | Kryo 495 Gold (Cortex A76) + Kryo 495 Silver (Cortex A55) 7nm | Cortex A72 16nm | Skylake-ULV (Gen6) | WhiskeyLake-ULV (Gen8) | big+LITTLE cores vs. SMT |
| Launch Date | Q3 2020 | 2019 | Q3 2015 | Q3 2018 | Much newer design |
| Cores (CU) / Threads (SP) | 4C + 4c (8T) | 4C / 4T | 2C / 4T | 4C / 8T | Same number of threads |
| Rated Speed (GHz) | 1.8 | 1.5 | 2.5 | 1.5 | Similar base clock |
| All/Single Turbo Speed (GHz) | 3.14 | 2.0 | 3.0 | 3.9 | Turbo could be higher |
| Rated/Turbo Power (W) | 5-7 | 4-6 | 15-25 | 15-25 | Much lower TDP, 1/3x Intel |
| L1D / L1I Caches | 4x 64kB / 4x 64kB | 4x 32kB 2-way / 4x 48kB 3-way | 2x 32kB / 2x 32kB | 4x 32kB / 4x 32kB | Similar L1 caches |
| L2 Caches | 4x 512kB + 4x 128kB | 1MB 16-way | 2x 256kB | 4x 256kB | L2 is 2x on big cores |
| L3 Cache(s) | 4MB | n/a | 3MB | 4MB | Same size L3 |
| Microcode (Firmware) | | | | | Updates keep on coming |
| Special Instruction Sets | v8.2-A, VFP4, AES, SHA, TZ, Neon | v8-A, VFP4, TZ, Neon | AVX2/FMA, AES, VT-d/x | AVX2/FMA, AES, VT-d/x | All 8.2 instructions |
| SIMD Width / Units | 128-bit | 128-bit | 256-bit | 256-bit | Neon is not wide enough |
| Price / RRP (USD) | $146 | $55 (whole SBC) | $281 | $297 | Pi price is for the whole SBC! (including memory) |

Disclaimer

This is an independent article that has not been endorsed nor sponsored by any entity (e.g. Microsoft, Qualcomm, Intel, etc.). All trademarks acknowledged and used for identification only under fair use.

The article contains only public information (available elsewhere on the Internet) and not provided under NDA nor embargoed.

And please, don’t forget small ISVs like ourselves in these very challenging times. Please buy a copy of Sandra if you find our software useful. Your custom means everything to us!

Native Performance

We are testing native and emulated arithmetic, SIMD and cryptography performance using the highest performing instruction sets, on both x64 and Arm64 platforms.

Results Interpretation: Higher values (GOPS, MB/s, etc.) mean better performance.

Environment: Windows 11 x64/Arm64, latest drivers. 2MB “large pages” were enabled and in use. Turbo / Boost was enabled on all configurations where supported.
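The bracketed percentages in the results tables compare the x64 emulated score with the Arm64 native score on the same device. A minimal sketch of that calculation, using figures from the tables in this article (the function name is ours):

```python
def relative_delta(native: float, emulated: float) -> float:
    """Percentage change of the emulated score relative to native.
    Negative means emulation scores lower than native."""
    return (emulated / native - 1.0) * 100.0

if __name__ == "__main__":
    # Dhrystone Long and FP64 Whetstone on the Raspberry Pi 4B.
    print(f"{relative_delta(35.43, 20.25):+.1f}%")
    print(f"{relative_delta(26.34, 10.51):+.1f}%")
    # For latency (ns), lower is better, so a positive delta is a regression.
    print(f"{relative_delta(64.2, 69.6):+.1f}%")
```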

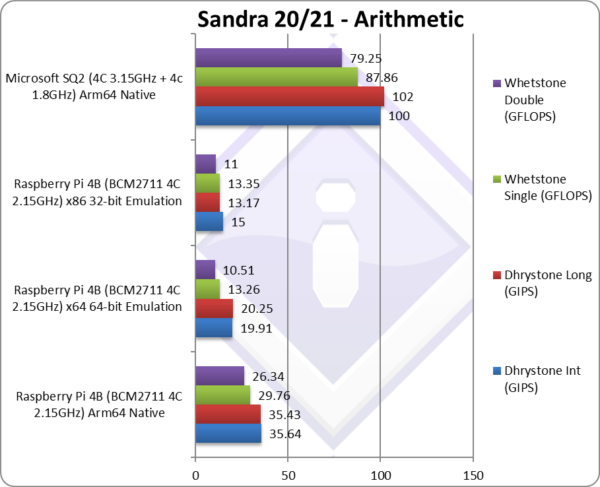

| Benchmarks | Raspberry Pi 4B Arm64 Native | Raspberry Pi 4B x64 64-bit Emulated | Raspberry Pi 4B x86 32-bit Emulated | Microsoft SQ2 Arm64 Native | Comments |
|---|---|---|---|---|---|
| Native Dhrystone Integer (GIPS) | 35.64 | 19.91* [-45%] | 15* | 100 | x64 emulation is about 45% slower. |
| Native Dhrystone Long (GIPS) | 35.43 | 20.25* [-43%] | 13.17* | 102 | A 64-bit int workload, 43% slower. |
| Native FP32 (Float) Whetstone (GFLOPS) | 29.76 | 13.26** [-56%] | 13.35** | 87.86 | With floating-point, emulation is 56% slower. |
| Native FP64 (Double) Whetstone (GFLOPS) | 26.34 | 10.51** [-60%] | 11** | 79.25 | With FP64, emulation is 60% slower. |

In these standard legacy tests, x64 emulation is about 44% slower on integer and 55% slower on floating-point. While integer x64 is about 25% faster than x86, floating-point is about the same if not a little slower.

Thus, by supporting x64 emulation, Windows 11 will be faster running legacy applications on Arm64 than Windows 10 but still not a patch on native code – the overhead is still rather large. You need a powerful CPU like the SQ series on Surface X for good emulation speed, otherwise things will feel pretty slow.

Note*: using SSE4, SSE2-3 SIMD processing.

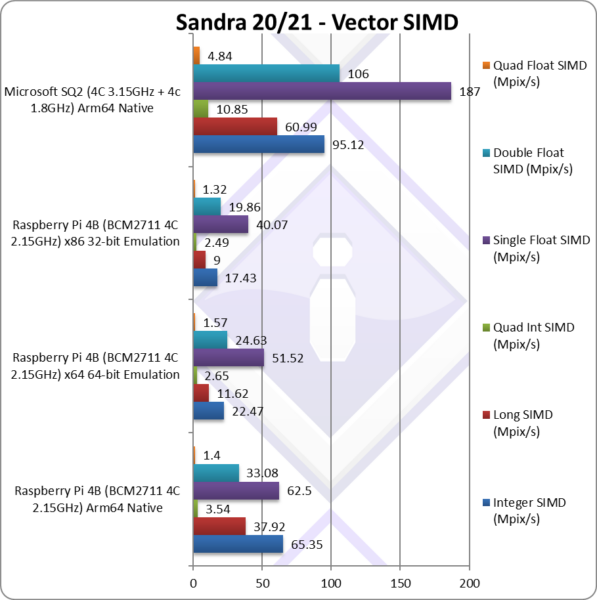

Native Integer (Int32) Multi-Media (Mpix/s) | 65.35** | 22.47* [-66%] | 17.43* | 95.12** | x64 emulation is 66% slower. |

|

Native Long (Int64) Multi-Media (Mpix/s) | 37.92** | 11.62* [-70%] | 9* | 60.99** | With a 64-bit, we are 70% slower! |

|

Native Quad-Int (Int128) Multi-Media (Mpix/s) | 3.54** | 2.65* [-26%] | 2.49* | 10.85** | Using 64-bit int to emulate Int128, we are 26% slower. |

|

Native Float/FP32 Multi-Media (Mpix/s) | 62.5** | 51.52* [-18%] | 40.07* | 187** | In this FP32 vectorised is 18% slower. |

|

Native Double/FP64 Multi-Media (Mpix/s) | 33.08** | 24.63* [-26%] | 19.86* | 106** | Switching to FP64 26% slower. |

|

Native Quad-Float/FP128 Multi-Media (Mpix/s) | 1.4** | 1.57* [+14%] | 1.32* | 4.84** | Using FP64 to mantissa extend FP128 is 14% faster! |

| With heavily vectorised SIMD workloads – the emulation degradation is more variable 18-70% lower, but on average we are about 40% lower as we’ve seen before. Again, powerful multi-core Arm64 CPUs can manage this performance drop but low-end devices (like the Pi 4B) struggling a bit more.

Again, x64 emulation can be 30% faster than x86 – thus again Windows 11 will perform better emulating x64 than Windows 10 and x86 emulation. You are pretty much forced to upgrade to Windows 11 for decent performance. Note*: using SSE2-4 128-bit SIMD processing. Note**: using NEON 128-bit SIMD processing. |

||||||

|

||||||

|

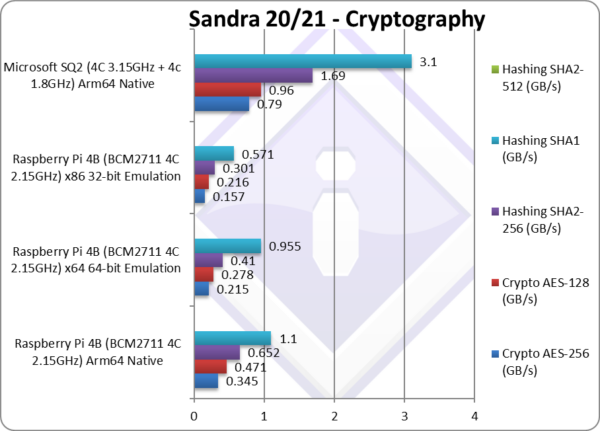

Crypto AES-256 (GB/s) | 0.345 | 0.215 [-38%] | 0.157 | 0.79 | AES is about 40% slower vs. native. |

|

Crypto AES-128 (GB/s) | 0.471 | 0.278 [-41%] | 0.216 | 0.96 | No change with AES128, 40% slower. |

|

Crypto SHA2-256 (GB/s) | 0.652** | 0.41*** [-38%] | 0.301 | 1.69** | We need SHA acceleration here. |

|

Crypto SHA1 (GB/s) | 1.1** | 0.995*** [-14%] | 0.571 | 3.1** | Less compute intensive SHA1 is 14% slower. |

| Across both AES and SHA without hardware acceleration (HWA) we see the same 40% performance degradation we’ve seen before. But it is about 30% faster than before thus a legacy software VPN should perform better if you are forced to use such a thing.

Windows 11 x64 emulation allows us to use multi-buffer hashing with SSE4 which improves performance by 70% over x86 single-buffer. But these are low numbers compared to how hardware-accelerated code (either AES or SHA) performs. Note*: using AES HWA (hardware acceleration). Note**: using NEON multi-buffer hashing. |

||||||

|

||||||

|

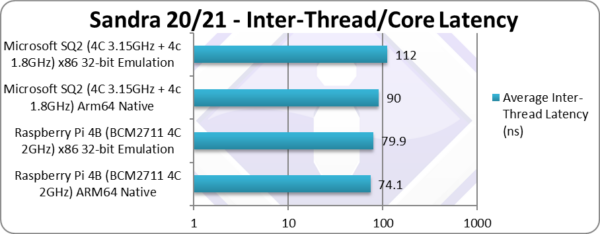

Inter-Module (CCX) Latency (Same Package) (ns) | 64.2 | 69.6 [+8%] | 65.4 | 90 | Latencies rise 8% vs. native code. |

| With big and LITTLE core clusters, the inter-core latencies vary greatly between big-2-big, LITTLE-2-LITTLE and big-2-LITTLE cores. Here we present the overall inter-core latencies of all latencies. Judicious thread scheduling is needed so that data transfers are efficient.

x64 emulation only adds about 8% latency as we’re using the same operating system API for thread synchronization. Strangely, x86 has much lower latency – similar to native code. |

||||||

|

||||||

|

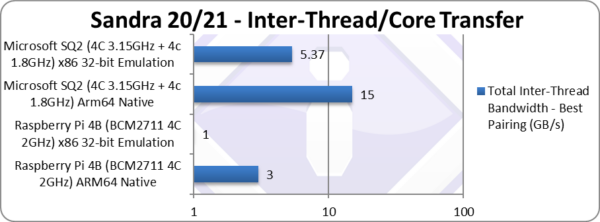

Total Inter-Thread Bandwidth – Best Pairing (GB/s) | 3.46** | 2* [-43%] | 2.23* | 15** | Bandwidth drops to 40% native. |

| As with latencies, inter-core bandwidths vary greatly between big-2-big, LITTLE-2-LITTLE and big-2-LITTLE cores, here we present the aggregate bandwidth between all cores. Judicious thread scheduling is needed so that data transfers are efficient.

Bandwidth drop is the same 40% degradation we’ve seen before, with x86 again showing better performance. It is not a deal-breaker, but we were expecting better performance from cache/memory emulating instructions. Note:* using SSE2 128-bit wide transfers. Note**: using NEON 128-bit wide transfers. |

||||||

|

||||||

|

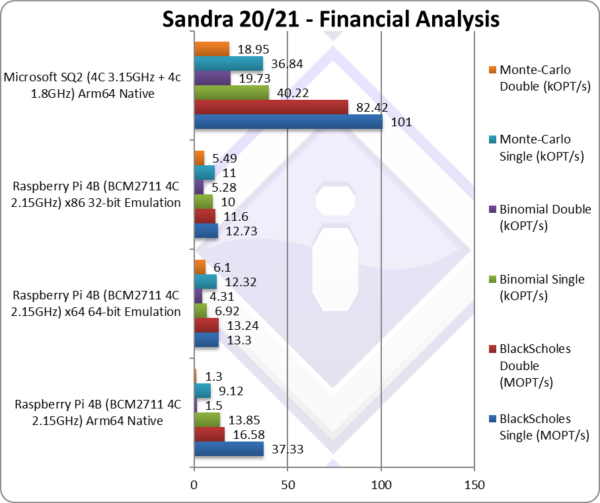

Black-Scholes float/FP32 (MOPT/s) | 37.33 | 13.3 [-1/3x] | 12.73 | 101 | Black-scholes is un-vectorised and compute heavy. |

|

Black-Scholes double/FP64 (MOPT/s) | 16.58 | 13.24 [-21%] | 11.6 | 82.42 | Using FP64, we have 21% drop vs. native. |

|

Binomial float/FP32 (kOPT/s) | 13.85 | 6.92 [-1/2x] | 10 | 40.22 | Binomial uses thread shared data thus stresses the cache & memory system. |

|

Binomial double/FP64 (kOPT/s) | 1.5 | 4.31 [+2.9x] | 5.28 | 19.73 | With FP64, emulation is 3x faster! |

|

Monte-Carlo float/FP32 (kOPT/s) | 9.12 | 12.32 [+35%] | 11 | 36.84 | Monte-Carlo also uses thread shared data but read-only thus reducing modify pressure on the caches. |

|

Monte-Carlo double/FP64 (kOPT/s) | 1.3 | 6.1 [+5x] | 5.49 | 18.95 | Switching to FP64, we’re actually 5x faster! |

| With non-SIMD financial workloads, we actually see better emulated performance – with some FP64 tests running 3x-5x faster! As we use a lot of library mathematical functions (exp, pow, etc.) this seems to be some optimisation rather than standard performance gains. Still, it shows that some financial software may actually run faster depending on how the mathematical libraries are emulated. | ||||||

|

||||||

|
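To show why library mathematical functions dominate this kind of workload, here is a minimal sketch of the textbook Black-Scholes price for a European call option. It is not Sandra's benchmark kernel; the function names and the example parameters are assumptions chosen for illustration. Nearly all of the work is in `log`, `exp`, `sqrt` and `erf` calls, which is where differences between native and emulated mathematical libraries would show up.

```python
import math

def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def black_scholes_call(spot: float, strike: float, rate: float,
                       vol: float, years: float) -> float:
    """Textbook Black-Scholes price of a European call (no dividends)."""
    sqrt_t = math.sqrt(years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * years) * norm_cdf(d2)

if __name__ == "__main__":
    # Example parameters: at-the-money, 5% rate, 20% volatility, 1 year.
    print(round(black_scholes_call(100.0, 100.0, 0.05, 0.2, 1.0), 4))
```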

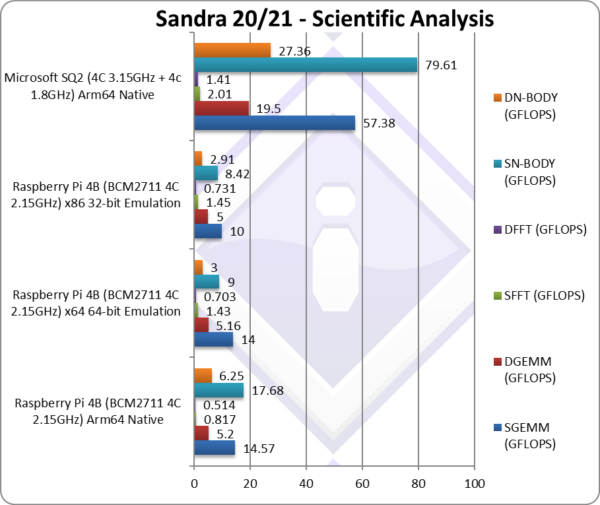

SGEMM (GFLOPS) float/FP32 | 14.57* | 14** [-4%] | 10** | 57.38* | In this tough vectorised algorithm we get a 4% drop. |

|

DGEMM (GFLOPS) double/FP64 | 5.2* | 5.16** [-1%] | 5** | 19.5* | With FP64 vectorised code, we’re only 1% slower. |

|

SFFT (GFLOPS) float/FP32 | 0.817* | 1.43** [+75%] | 1.45** | 2.01* | FFT is also heavily vectorised but memory dependent. |

|

DFFT (GFLOPS) double/FP64 | 0.514* | 0.703** [+36%] | 0.731** | 1.41* | With FP64 code, we’re 36% faster! |

|

SN-BODY (GFLOPS) float/FP32 | 17.68* | 9** [-1/2x] | 8.42** | 79.61* | N-Body simulation is vectorised but with more memory accesses. |

|

DN-BODY (GFLOPS) double/FP64 | 6.25* | 3** [-1/2x] | 2.91** | 27.36* | With FP64 we’re 52% slower. |

| With highly vectorised SIMD code (scientific workloads), we see varying performance delta: GEMM is about the same performance, FFT (which is memory latency bound) actually runs faster (!) while N-Body takes a 50% performance hit. Overall we end up level.

Note*: using SSE2-4 128-bit SIMD. Note**: using NEON 128-bit SIMD. |

||||||

|

||||||

|

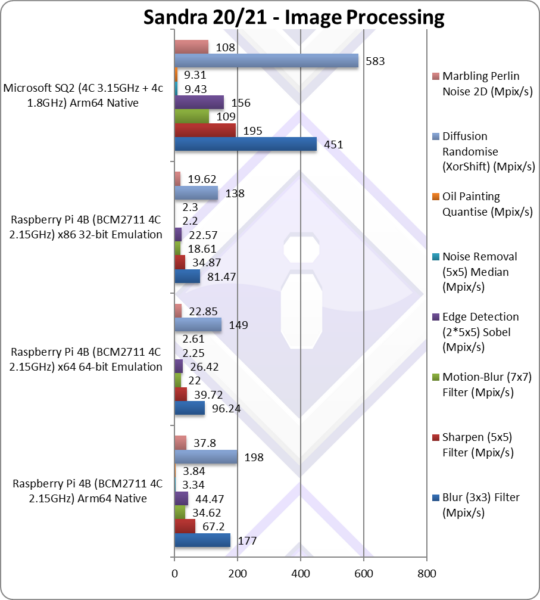

Blur (3×3) Filter (MPix/s) | 177** | 96.24* [-46%] | 81.47* | 451** | In this vectorised integer workload we’re 46% slower. |

|

Sharpen (5×5) Filter (MPix/s) | 67.2** | 39.72* [-41%] | 34.87* | 195** | Same algorithm but more shared data 41% slower. |

|

Motion-Blur (7×7) Filter (MPix/s) | 34.62** | 22* [-37%] | 18.61* | 109** | Again same algorithm but even more data, 37% slower |

|

Edge Detection (2*5×5) Sobel Filter (MPix/s) | 44.47** | 26.42* [-41%] | 22.57* | 156** | Different algorithm but still vectorised 41% slower |

|

Noise Removal (5×5) Median Filter (MPix/s) | 3.34** | 2.25* [-33%] | 2.2* | 9.43** | Still vectorised code 33% slower emulation |

|

Oil Painting Quantise Filter (MPix/s) | 3.84** | 2.61* [-33%] | 2.3* | 9.31** | In this tough filter, we’re 33% slower. |

|

Diffusion Randomise (XorShift) Filter (MPix/s) | 198** | 149* [-25%] | 138* | 583** | With 64-bit integer workload, we’re 25% slower |

|

Marbling Perlin Noise 2D Filter (MPix/s) | 37.8** | 22.85* [-40%] | 19.62* | 108** | In this final test (scatter/gather) we’re 40% slower. |

| We know these benchmarks *love* SIMD, with wider SIMD (e.g. AVX512, AVX2) performing strongly – but we still see the same 30-50% performance degradation for x64 emulation, about 37% overall.

Again, as with other compute-heavy algorithms – these days such algorithms would be offloaded to the Cloud or locally to the GP-GPU – thus the CPU does not need to be a SIMD speed demon. Note*: using SSE4 128-bit SIMD. Note**: using NEON 128-bit SIMD. |

||||||

|

||||||

|

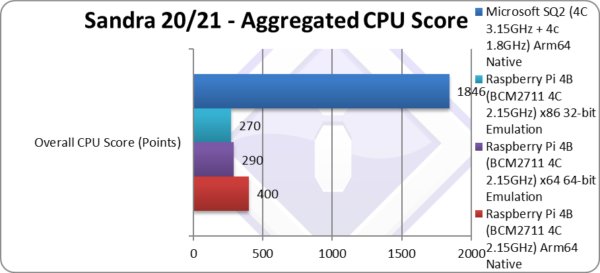

Aggregate Score (Points) | 400 | 290 [28%] | 270 | 1,846 | Across all benchmarks, emulation is 28% slower |

| We see x64 emulation -30% slower across all benchmarks, thus effectively cutting performance by a third (1/3x). However, this is better than x86 emulation which cuts performance by almost a half (1/5x). Thus, in addition to supporting modern Windows applications (now x64) and increased memory (64-bit) for workloads – performance has also improved by a decent amount. | ||||||

|

||||||

|

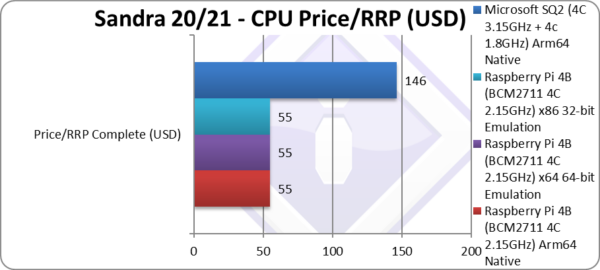

Price/RRP (USD) | $55 (whole SBC) | $55 (whole SBC) | $55 (whole SBC) | $146 | Price does not change. |

|

||||||

|

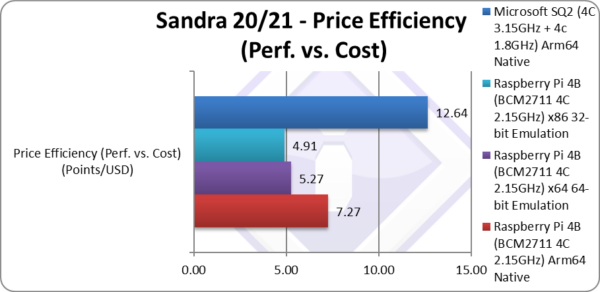

Price Efficiency (Perf. vs. Cost) (Points/USD) | 7.27 | 5.27 [-28%] | 4.91 | 12.64 | Naturally efficiency drops by 28%. |

| Unsurprisingly, value for money (price efficiency) drops by the same percentage, 30%. This does matter, as this puts it right into Intel x86 territory – in effect losing the price efficiency advantage over competition. This may also translate in higher software cost, e.g. to get the native Arm64 version of software may require upgrading to a newer version at extra cost… | ||||||

|

||||||

|

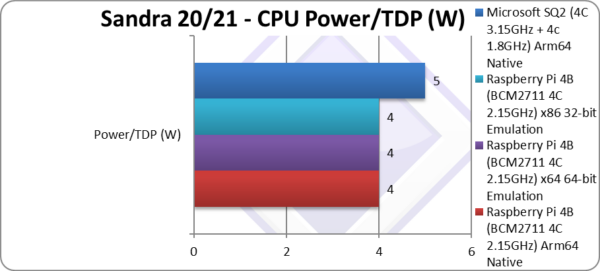

Power/TDP (W) | 5W | 5W | 5W | 5W | Power does not change |

|

||||||

|

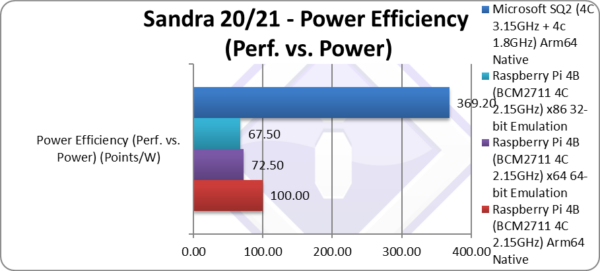

Power Efficiency (Perf. vs. Power) (W) | 100 | 72.50 [-28%] | 67.50 | 369 | Naturally efficiency drops by the same 28%. |

| While Arm64 power usage is low, by cutting performance by third (1/3x) due to x64 emulation, the power efficiency is no longer as high vs. Intel x86 native designs. Arm64 still has an advantage but is only about 50% now rather than the “crushing” 2.5x better as with native code. | ||||||
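The efficiency figures above are simple ratios of the aggregate score to price or power. The sketch below reproduces the price efficiency values from the tables and, as a cross-check, backs out the power figure implied by each power efficiency value. The function names are ours. The observation that the Pi 4B efficiency figures correspond to 4W (the low end of its 4-6W rated range in the specifications table) rather than 5W is our own inference from the arithmetic.

```python
def price_efficiency(points: float, price_usd: float) -> float:
    """Aggregate score per USD."""
    return points / price_usd

def implied_power(points: float, points_per_watt: float) -> float:
    """Power (W) implied by an aggregate score and a power efficiency figure."""
    return points / points_per_watt

# (aggregate points, price in USD, power efficiency in points/W) from the tables.
RESULTS = {
    "Pi 4B Arm64 native": (400, 55, 100.0),
    "Pi 4B x64 emulated": (290, 55, 72.5),
    "Pi 4B x86 emulated": (270, 55, 67.5),
    "SQ2 Arm64 native": (1846, 146, 369.0),
}

if __name__ == "__main__":
    for name, (points, price, per_watt) in RESULTS.items():
        print(f"{name}: {price_efficiency(points, price):.2f} points/USD, "
              f"implied power {implied_power(points, per_watt):.1f} W")
```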

SiSoftware Official Ranker Scores

- Microsoft SQ2 (Microsoft Surface X)

- Raspberry Pi 4 Model B (Broadcom BCM2711)

- Raspberry Pi 3 Model B+ (Broadcom BCM2837)

- Apple Silicon on Parallels VM

Final Thoughts / Conclusions

x64/x86 Emulation on Arm64: Now Decent: 8/10

Windows 11 emulation has been improved, with x64 support finally allowing modern Windows applications to run on Arm64. Now, you can at last run all your applications – save those that include kernel drivers (e.g. anti-virus, utilities, some VPNs, etc.).

The main issue is that it is not much faster – we still see a performance degradation of about 30% (Arm64 native code vs. x64 emulated code) which needs to be improved. As most Arm64 designs are tablets (e.g. Microsoft Surface X) or thin & light notebooks (e.g. Samsung Galaxy Book Go) – the CPUs in these devices are not very powerful to start with.

The hybrid “big.LITTLE” (DynamiQ) technology that ARM has deployed for many years – and Intel has recently “copied” with Hybrid “AlderLake” (ADL) – also provides more efficient power utilisation at low compute workloads (I/O, etc.), thus further reducing energy usage. For tablets or netbooks (aka small laptops) this is an important metric.

With the good performance of Microsoft’s SQ SoC (and similar Qualcomm 7c, 8cx, etc.) and fantastic power efficiency (typically 5W TDP) vs. the current Intel ULV offerings (15W TDP – 25W Turbo) – Arm64 emulation of x86/x64 can be more efficient than native execution! [internally, all modern Intel CPUs translate x86/x64 into native microcode/RISC thus they are not exactly native x86 anymore]

The performance/price efficiency (which depends entirely on the whole package not just the SoC) is no longer (much) better for emulation and comparable to native Intel. So if you will just be running x86/x64 emulated and not native Arm64 you may be better off with Intel… Note that getting Arm64 versions of software may mean upgrading/updating at a cost – not all ISVs will provide free updates if at all.

In summary, x86/x64 emulation is now decent but still needs improving – but this should not stop you from trying a new Arm64 tablet/laptop. Hopefully we will now see good value but well-spec’d tablets from other OEMs (Dell, HP, etc.) that are perhaps better spec’d than the existing ones (e.g. Samsung with Galaxy Book, Lenovo). We are eagerly waiting to see…


