What is “IceLake”?

It is the “real” 10th generation Core arch(itecture) (ICL/“IceLake”) from Intel – the brand new core to replace the ageing “Skylake” (SKL) arch and its many derivatives; due to delays it actually debuts shortly after the latest update (“CometLake” (CML)) that is also called 10th generation. First launched for mobile ULV (U/Y) devices, it will also be launched for mainstream (desktop/workstations) soon.

Thus it contains extensive changes to all parts of the SoC: CPU, GPU, memory controller:

- 10nm+ process (lower voltage, higher performance benefits)

- Up to 4C/8T “Sunny Cove” cores on ULV (less than top-end CometLake 6C/12T)

- Gen11 graphics (finally up from Gen9.5 for CometLake/WhiskeyLake)

- AVX512 instruction set (like HEDT platform)

- SHA HWA instruction set (like Ryzen)

- 2-channel LP-DDR4X support up to 3733MT/s

- Thunderbolt 3 integrated

- Hardware fixes/mitigations for vulnerabilities (“Meltdown”, “MDS”, various “Spectre” types)

- WiFi6 (802.11ax) AX201 integrated

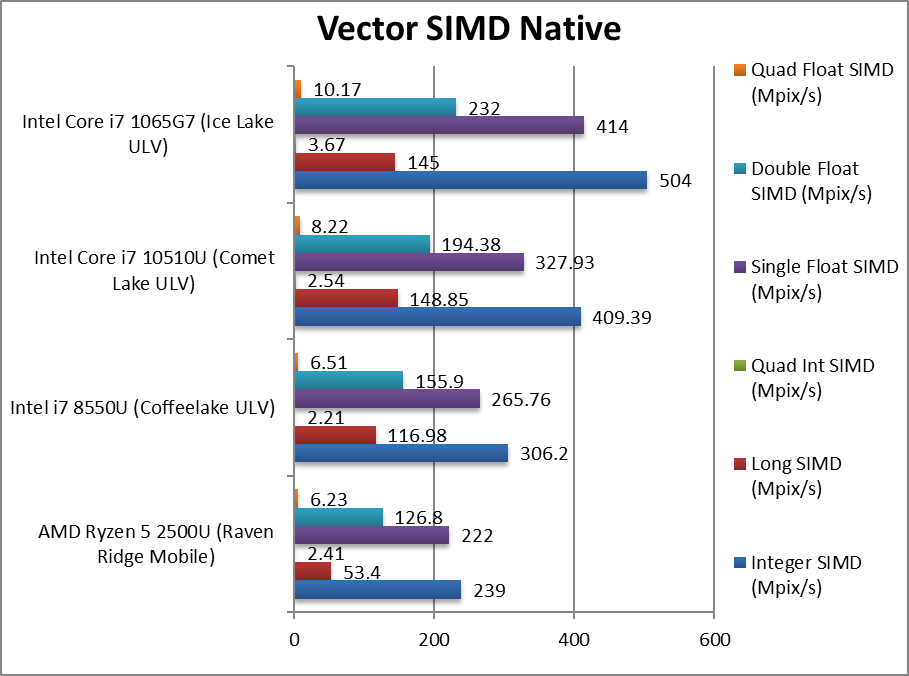

Probably the biggest change is support for AVX512-family instruction set, effectively doubling the SIMD processing width (vs. AVX2/FMA) as well as adding a whole host of specialised instructions that even the HEDT platform (SKL/KBL-X) does not support:

- AVX512-VNNI (Vector Neural Network Instructions)

- AVX512-VBMI, VBMI2 (Vector Byte Manipulation Instructions)

- AVX512-BITALG (Bit Algorithms)

- AVX512-IFMA (Integer FMA)

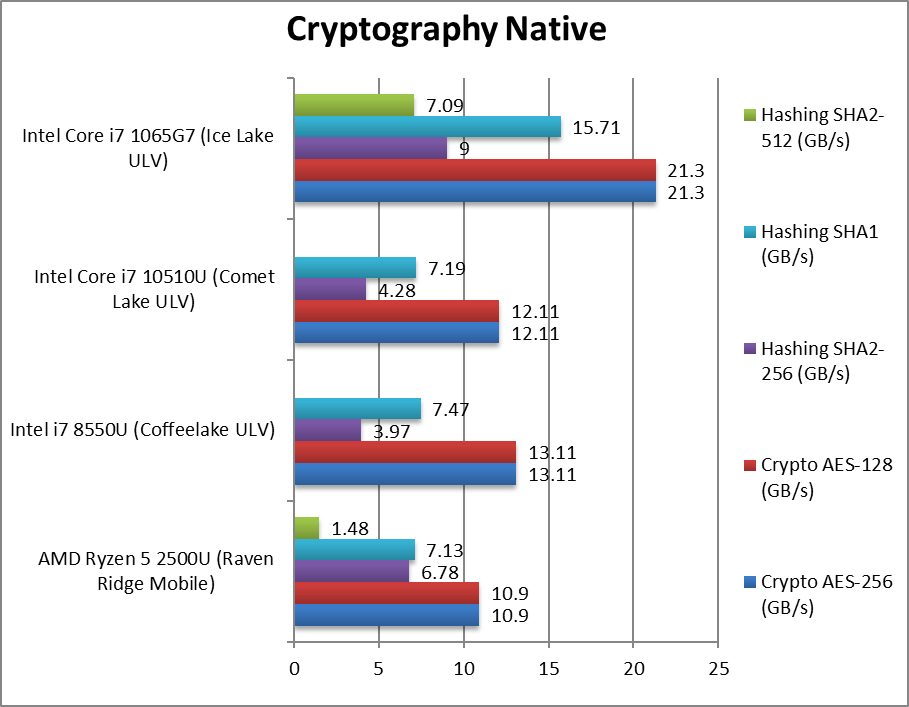

- AVX512-VAES (Vector AES) accelerating crypto

- AVX512-GFNI (Galois Field)

- SHA HWA accelerating hashing

- GNA (Gaussian & Neural Accelerator), a separate low-power co-processor rather than an AVX512 extension
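To check which of the extensions listed above a given machine actually exposes, a minimal sketch along the following lines could be used. It assumes a Linux system (the tests in this article were run on Windows) and reads the `flags` line of `/proc/cpuinfo`; the `ICL_FLAGS` mapping and both function names are our own illustration, not part of any benchmark tool.

```python
# Map the extension names used in this article to the flag spellings
# reported by the Linux kernel in /proc/cpuinfo (assumption: Linux only).
ICL_FLAGS = {
    "AVX512F": "avx512f",
    "AVX512-VNNI": "avx512_vnni",
    "AVX512-VBMI": "avx512vbmi",
    "AVX512-VBMI2": "avx512_vbmi2",
    "AVX512-BITALG": "avx512_bitalg",
    "AVX512-IFMA": "avx512ifma",
    "VAES": "vaes",
    "GFNI": "gfni",
    "SHA": "sha_ni",
}


def detect_features(flags_line):
    """Return {extension name: bool} for a whitespace-separated flags string."""
    present = set(flags_line.split())
    return {name: flag in present for name, flag in ICL_FLAGS.items()}


def read_cpuinfo_flags(path="/proc/cpuinfo"):
    """Return the first 'flags' line of cpuinfo, or '' if it is unavailable."""
    try:
        with open(path) as f:
            for line in f:
                if line.startswith("flags"):
                    return line.split(":", 1)[1]
    except OSError:
        pass
    return ""


if __name__ == "__main__":
    for name, ok in detect_features(read_cpuinfo_flags()).items():
        print(f"{name:14s} {'yes' if ok else 'no'}")
```

On a CPU without AVX512 (e.g. CML) every AVX512 entry would be reported as missing, while an ICL part should report them as present.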

While some software may not have been updated to AVX512 as it was reserved for HEDT/Servers, due to this mainstream launch you can pretty much guarantee that just about all vectorised algorithms (already ported to AVX2/FMA) will soon be ported over. VNNI, IFMA support can accelerate low-precision neural-networks that are likely to be used on mobile platforms.
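To illustrate why VNNI helps low-precision neural networks, here is a scalar sketch of what the `VPDPBUSD` instruction computes: each 32-bit lane multiplies four unsigned bytes by four signed bytes and adds the sum of products to an accumulator in a single step. The helper names are ours and the code only emulates the arithmetic; it is not how real code would invoke the instruction.

```python
def to_int32(x):
    """Wrap an integer to signed 32-bit, as a non-saturating add would."""
    x &= 0xFFFFFFFF
    return x - 0x100000000 if x & 0x80000000 else x


def vpdpbusd_lane(acc, a_u8, b_s8):
    """One 32-bit lane: acc + sum(a[i] * b[i]) over 4 unsigned x signed bytes."""
    assert len(a_u8) == len(b_s8) == 4
    assert all(0 <= a <= 255 for a in a_u8)
    assert all(-128 <= b <= 127 for b in b_s8)
    return to_int32(acc + sum(a * b for a, b in zip(a_u8, b_s8)))


def vpdpbusd_512(acc, a_u8, b_s8):
    """A 512-bit register holds 16 such lanes, i.e. 64 byte pairs at once."""
    assert len(acc) == 16 and len(a_u8) == len(b_s8) == 64
    return [
        vpdpbusd_lane(acc[i], a_u8[4 * i:4 * i + 4], b_s8[4 * i:4 * i + 4])
        for i in range(16)
    ]
```

Without VNNI the same int8 dot-product step needs a sequence of three AVX512 instructions, which is where the speed-up for quantised inference comes from.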

VAES and SHA acceleration improve crypto/hashing performance – important today as even LAN transfers between workstations are likely to be encrypted/signed, not to mention just about all WAN transfers, encrypted disk/containers, etc. Some SoCs will also make their way into powerful (but low power) firewall appliances where both AES and SHA acceleration will prove very useful.
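For a rough feel of hashing throughput on a given machine, a small timing sketch such as the one below can be used. It relies only on Python's standard `hashlib`; whether the underlying library uses SHA hardware acceleration depends on the build and the CPU, so treat the figure as indicative only and not comparable to the benchmark results in this article. The function name and default sizes are our own choices.

```python
import hashlib
import time


def sha256_throughput_mb_s(buf_size=1 << 20, rounds=32):
    """Hash `rounds` buffers of `buf_size` zero bytes and return MB/s."""
    data = bytes(buf_size)
    h = hashlib.sha256()
    start = time.perf_counter()
    for _ in range(rounds):
        h.update(data)
    h.digest()
    # Guard against a zero interval on very small inputs.
    elapsed = max(time.perf_counter() - start, 1e-9)
    return (buf_size * rounds) / (1 << 20) / elapsed


if __name__ == "__main__":
    print(f"SHA2-256: {sha256_throughput_mb_s():.0f} MB/s")
```

As with the results reported here, a higher MB/s value means better performance.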

From a security point-of-view, ICL mitigates all (existing/reported) vulnerabilities in hardware/firmware (Spectre 2, 3/a, 4; L1TF, MDS) except BCB (Spectre V1 that does not have a hardware solution) thus should not require slower mitigations that affect performance (especially I/O).
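On Linux, the kernel reports per-vulnerability status under `/sys/devices/system/cpu/vulnerabilities`, which makes it easy to see which issues a CPU is not affected by and which are handled by software mitigations. The sketch below simply reads that directory; it assumes a Linux system (unlike the Windows test environment used here) and the function name is our own.

```python
from pathlib import Path

# Standard sysfs location on Linux; absent on other operating systems.
VULN_DIR = Path("/sys/devices/system/cpu/vulnerabilities")


def read_mitigations(vuln_dir=VULN_DIR):
    """Return {vulnerability name: kernel status string}, or {} if unavailable."""
    status = {}
    if not vuln_dir.is_dir():
        return status
    for entry in sorted(vuln_dir.iterdir()):
        try:
            status[entry.name] = entry.read_text().strip()
        except OSError:
            status[entry.name] = "unreadable"
    return status


if __name__ == "__main__":
    for name, state in read_mitigations().items():
        print(f"{name:24s} {state}")
```

Entries reading "Not affected" indicate a hardware fix, whereas "Mitigation: ..." entries indicate a software or microcode workaround is in use.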

The memory controller supports LP-DDR4X at higher speeds than CML, while the cache/TLB systems have been improved, which should help both CPU and GPU performance (see corresponding article) as well as reduce power vs. older designs using LP-DDR3.

Finally the GPU core has been updated (Gen11) and generally contains many more cores than the old core (Gen9.5) that was used from KBL (CPU Gen7) all the way to CML (CPU Gen10) (see corresponding article).

CPU (Core) Performance Benchmarking

In this article we test CPU core performance; please see our other articles on:

- Intel Core Gen11 TigerLake ULV (i7-1165G7) Review & Benchmarks – CPU AVX512 Performance

- Intel Iris Plus G7 Gen12 XE TigerLake ULV (i7-1165G7) Review & Benchmarks – GPGPU Performance

- AVX512-IFMA(52) Improvement for IceLake and TigerLake

- Benchmarks of JCC Erratum Mitigation – Intel CPUs

- Intel Core Gen10 IceLake ULV (i7-1065G7) Review & Benchmarks – Cache & Memory Performance

- Intel Iris Plus G7 Gen11 IceLake ULV (i7-1065G7) Review & Benchmarks – GPGPU Performance

- AVX512 Improvement for Icelake Mobile (i7-1065G7 ULV)


Hardware Specifications

We are comparing the top-of-the-range Intel ULV with competing architectures (gen 8, 7, 6) as well as competitors (AMD) with a view to upgrading to a mid-range but high performance design.

| CPU Specifications | AMD Ryzen 2500U Raven Ridge | Intel i7 8550U (Coffeelake ULV) | Intel Core i7 10510U (CometLake ULV) | Intel Core i7 1065G7 (IceLake ULV) | Comments |
|---|---|---|---|---|---|
| Cores (CU) / Threads (SP) | 4C / 8T | 4C / 8T | 4C / 8T | 4C / 8T | No change in cores count. |
| Speed (Min / Max / Turbo) | 1.6-2.0-3.6GHz | 0.4-1.8-4.0GHz (1.8GHz @ 15W, 2GHz @ 25W) | 0.4-1.8-4.9GHz (1.8GHz @ 15W, 2.3GHz @ 25W) | 0.4-1.5-3.9GHz (1.0GHz @ 12W, 1.5GHz @ 25W) | ICL has lower clocks vs. CML. |
| Power (TDP) | 15-35W | 15-35W | 15-35W | 12-35W | Same power envelope. |
| L1D / L1I Caches | 4x 32kB 8-way / 4x 64kB 4-way | 4x 32kB 8-way / 4x 32kB 8-way | 4x 32kB 8-way / 4x 32kB 8-way | 4x 48kB 12-way / 4x 32kB 8-way | L1D is 50% larger. |
| L2 Caches | 4x 512kB 8-way | 4x 256kB 16-way | 4x 256kB 16-way | 4x 512kB 16-way | L2 has doubled. |
| L3 Caches | 4MB 16-way | 6MB 16-way | 8MB 16-way | 8MB 16-way | No L3 changes. |
| Microcode (Firmware) | MU8F1100-0B | MU068E09-AE | MU068E0C-BE | MU067E05-6A | Revisions just keep on coming. |
| Special Instruction Sets | AVX2/FMA, SHA | AVX2/FMA | AVX2/FMA | AVX512, VNNI, SHA, VAES, GFNI | 512-bit wide SIMD on mobile! |
| SIMD Width / Units | 128-bit | 256-bit | 256-bit | 512-bit | Widest SIMD units ever. |
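To put the SIMD widths in the table into perspective, the short sketch below works out how many elements of a given size fit into one register at each width. It is plain arithmetic (register bits divided by element bits); the function name is ours.

```python
def simd_lanes(register_bits, element_bits):
    """Number of elements processed per instruction at a given SIMD width."""
    if register_bits % element_bits:
        raise ValueError("element size must divide the register width")
    return register_bits // element_bits


if __name__ == "__main__":
    for width in (128, 256, 512):
        print(
            f"{width}-bit: "
            f"{simd_lanes(width, 32)} x 32-bit, "
            f"{simd_lanes(width, 64)} x 64-bit, "
            f"{simd_lanes(width, 8)} x 8-bit"
        )
```

Going from 256-bit to 512-bit doubles the lanes per instruction, although real-world gains also depend on clocks, power limits and memory bandwidth.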

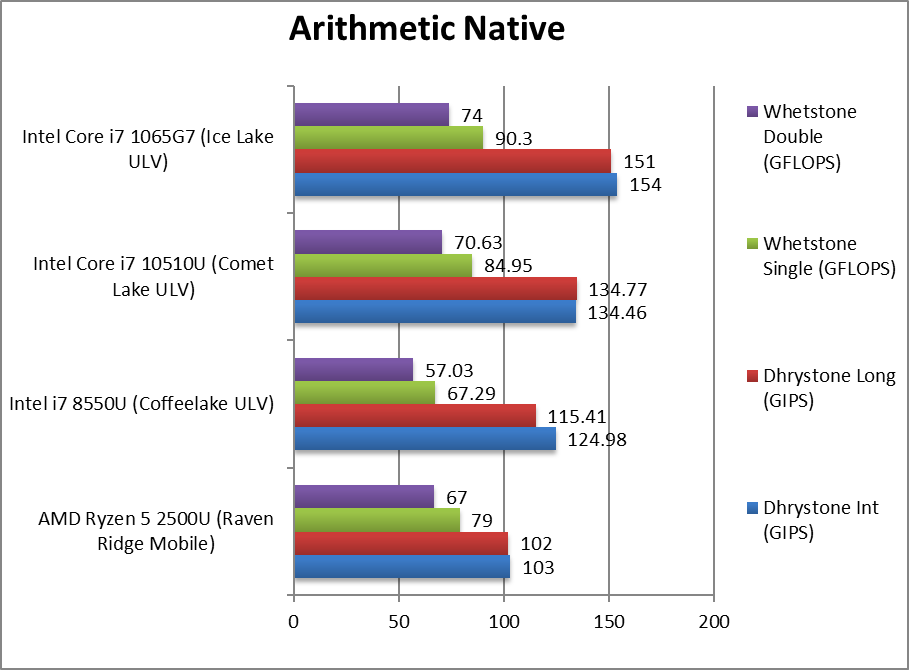

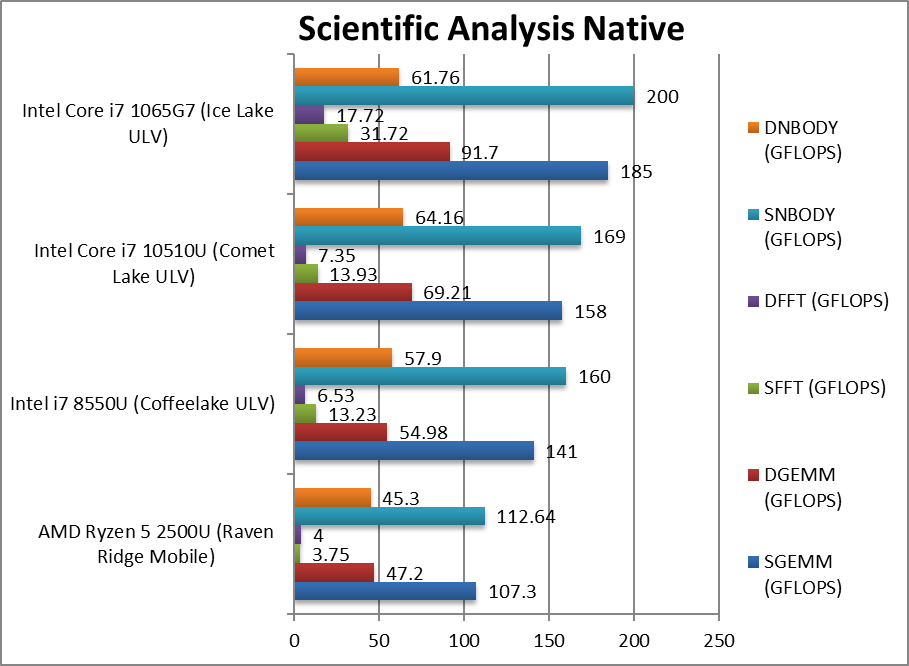

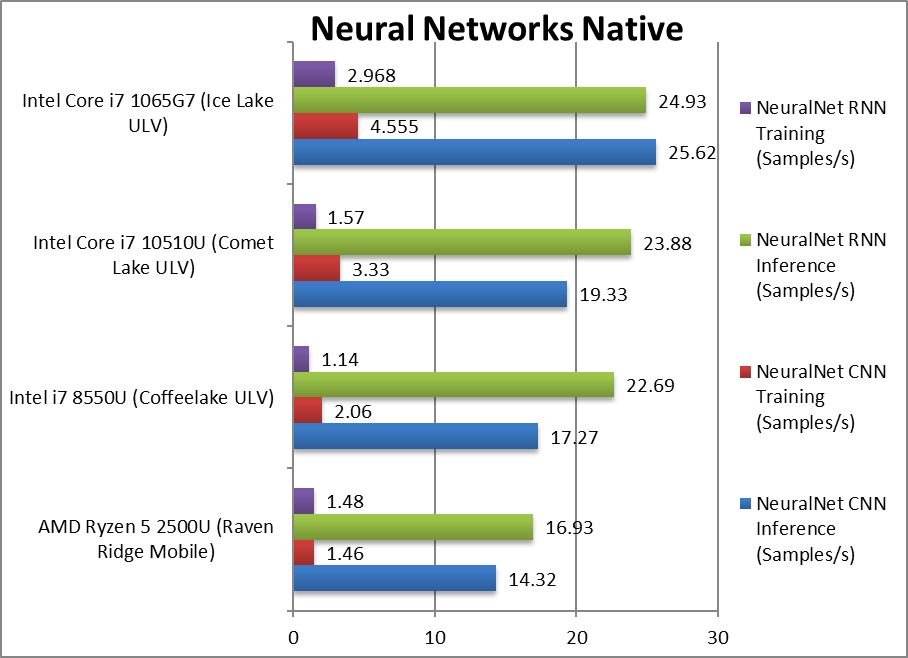

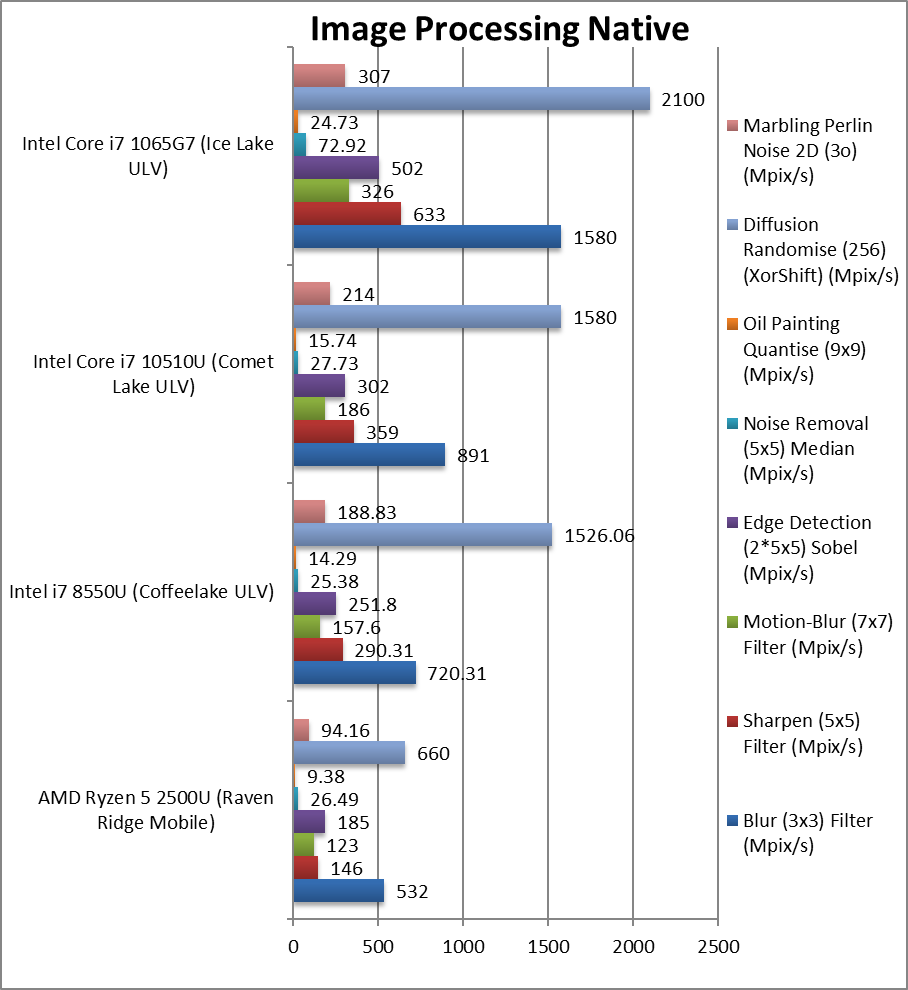

Native Performance

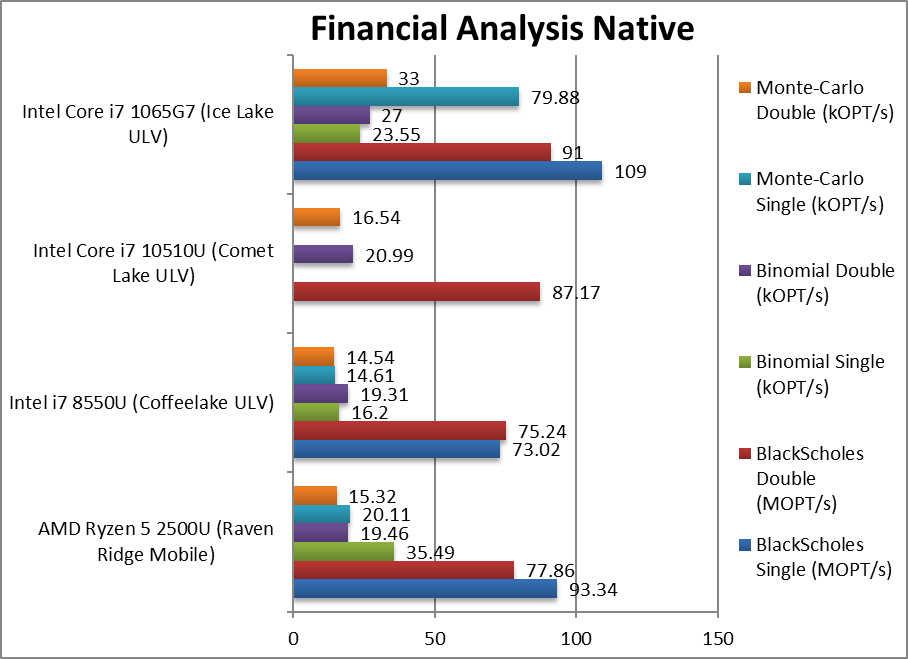

We are testing native arithmetic, SIMD and cryptography performance using the highest performing instruction sets (AVX2, AVX, etc.). “IceLake” (ICL) supports all modern instruction sets including AVX512, VNNI, SHA HWA, VAES and naturally the older AVX2/FMA, AES HWA.

Results Interpretation: Higher values (GOPS, MB/s, etc.) mean better performance.

Environment: Windows 10 x64, latest AMD and Intel drivers. 2MB “large pages” were enabled and in use. Turbo / Boost was enabled on all configurations.

Unlike CML, ICL with AVX512 support is a revolution in performance – which is exactly what we were hoping for; even at a much lower clock we see gains of anywhere between 33% and over 2x (twice) within the same power limits (TDP/turbo). As we know from HEDT, AVX512 is power-hungry, thus higher-TDP rated versions (e.g. 28W) should perform even better.

Even without AVX512, we see a good improvement of 5-15%, again at a much lower clock (3.9GHz vs 4.9GHz), while CML and older versions relied on higher clocks / more cores to outperform their predecessors (KBL/SKL-U).
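As a back-of-the-envelope illustration of what a 5-15% gain at a lower clock implies per clock, the sketch below scales the measured speed-up by the ratio of the maximum turbo clocks. It assumes, as a simplification, that both CPUs actually sustain their peak turbo during the test, which TDP-limited ULV parts generally do not; the function name is ours.

```python
def per_clock_gain(speedup, new_clock_ghz, old_clock_ghz):
    """Speed-up normalised to equal clocks (assumes both sustain these clocks)."""
    return speedup * old_clock_ghz / new_clock_ghz


if __name__ == "__main__":
    for speedup in (1.05, 1.15):
        gain = per_clock_gain(speedup, new_clock_ghz=3.9, old_clock_ghz=4.9)
        print(f"{speedup:.2f}x overall -> {gain:.2f}x per clock")
```

Under that assumption, a 5-15% overall gain at 3.9GHz vs 4.9GHz corresponds to roughly 1.3-1.4x more work per clock.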

SiSoftware Official Ranker Scores

Final Thoughts / Conclusions

With AMD snapping at its heels with Ryzen Mobile, Intel has finally fixed its 10nm production and rolled out the “new Skylake” we deserve: Ice Lake with AVX512 brings feature parity with the much older HEDT platform and shows good promise for the future. This is the “Core” you have been looking for.

While power-hungry and TDP constrained, AVX512 does bring sizeable performance gains that are in addition to core improvements and cache & memory sub-system improvements. Other instruction sets VAES, SHA HWA complete the package and might help in some scenarios where code has not been updated to AVX512.

With ICL, a mere 15W thin & light (e.g. Dell XPS 13 9300) can outperform older desktop-class CPUs (e.g. SKL) at 4-6x (four/six-times) TDP which makes us really keen to see what desktop-class processors will be capable of. And not before time as the competition has been bringing stronger and stronger designs (Ryzen2, future Ryzen 3).

If you have been waiting to upgrade from the much older – but still good – SKL/KBL with just 2 cores and no hardware vulnerability mitigations – then you finally have something to upgrade to: CML was not it as, despite its 4 cores (and rumoured 6 cores), it just did not bring enough to the table to make upgrading worthwhile (save hardware mitigations that don’t cripple performance).

Overall, with GPGPU and memory improvements, ICL-U is a very compelling proposition that, cost permitting, should be your top choice for long-term use.

In a word: Highly Recommended!
