What is “RaptorLake”?

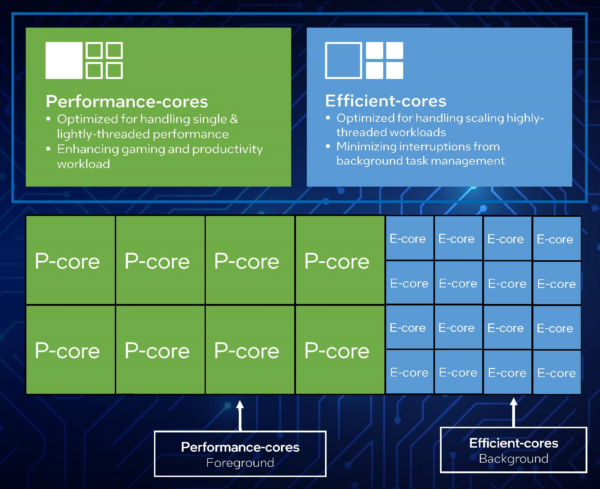

It is the “next-generation” (13th gen) Core architecture, replacing the current “AlderLake” (12th gen), and is thus the 3rd generation “hybrid” (aka “big.LITTLE”) arch that Intel has released. As before, it combines big/P(erformant) “Core” cores with LITTLE/E(fficient) “Atom” cores in a single package and covers everything from desktops and laptops to tablets and even (low-end) servers.

- Desktop (S) (65-125W rated, up to 253W turbo)

- 8C (aka big/P) + 16c (aka LITTLE/E) / 32T total (13th Gen Core i9-13900K(F))

- 2x as many LITTLE/E cores as ADL

- High-Performance Mobile (H/HX) (45-55W rated, up to 157W turbo)

- 8C + 16c / 32T total

- Mobile (P) (20-28W rated, up to 64W turbo)

- 4/6C + 8c / 20T total

- Ultra-Mobile/ULV (U) (9-15W rated, 29W turbo)

- 2C + 8c / 12T total
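The thread totals in the list above follow from the core layout: each big/P core contributes two SMT threads and each LITTLE/E core contributes one. A minimal sketch of that arithmetic (the function name `total_threads` is ours, purely for illustration):

```python
def total_threads(p_cores: int, e_cores: int, smt_per_p_core: int = 2) -> int:
    """Logical threads of a hybrid part: P cores have SMT, E cores do not."""
    if p_cores < 0 or e_cores < 0 or smt_per_p_core < 1:
        raise ValueError("core counts must be non-negative, SMT width at least 1")
    return p_cores * smt_per_p_core + e_cores


# Core layouts taken from the list above: (P cores, E cores)
layouts = {
    "Desktop (S) 8C + 16c": (8, 16),
    "Mobile (P) 6C + 8c": (6, 8),
    "Mobile (P) 4C + 8c": (4, 8),
    "Ultra-Mobile (U) 2C + 8c": (2, 8),
}

for name, (p, e) in layouts.items():
    print(f"{name}: {total_threads(p, e)}T")
```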

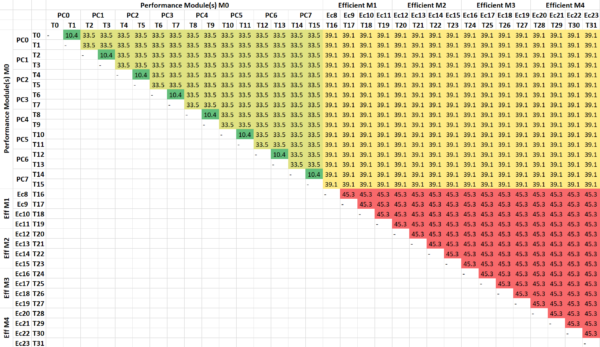

For best performance and efficiency, this does require operating system scheduler changes so that threads are assigned to the appropriate physical core/thread. For compute-heavy/low-latency threads this means a “big/P” core; for low-compute/power-limited threads this means a “LITTLE/E” core.

In the Windows world, this means “Windows 11” for clients and “Windows Server vNext” (note: not the recently released Server 2022, which is based on the 21H2 Windows 10 kernel) for servers. The Windows power plans (e.g. “Balanced”, “High Performance”, etc.) contain additional (hidden) settings, e.g. to prefer (or require) scheduling on big/P or LITTLE/E cores and so on. But in general, the scheduler is supposed to handle it all automatically based on telemetry from the CPU.

Windows 11 also gets an updated QoS (Quality of Service) API (aka functions), allowing app(lications) like Sandra to indicate which threads should use big/P cores and which LITTLE/E cores. Naturally, this means updated applications will be needed for best power efficiency.

Intel Core i9 13900K(F) (RaptorLake) 8C + 16c

General SoC Details

- 10nm+++ (Intel 7+) improved process

- Unified 36MB L3 cache (vs. 30MB on ADL thus 20% larger)

- PCIe 5.0 (up to 64GB/s with x16 lanes) – up to x16 lanes PCIe5 + x4 lanes PCIe4

- NVMe SSDs may thus be limited to PCIe4 or bifurcate main x16 lanes with GPU to PCIe5 x8 + x8

- PCH up to x12 lanes PCIe4 + x16 lanes PCIe3

- CPU to PCH DMI 4 x8 link (aka PCIe4 x8)

- DDR5/LP-DDR5 memory controller support (e.g. 4x 32-bit channels) – up to 5600Mt/s (official, vs. 4800Mt/s ADL)

- XMP 3.0 (eXtreme Memory Profile(s)) specification for overclocking with 3 profiles and 2 user-writable profiles (!)

- Thunderbolt 4 (and thus USB 4)
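As a rough cross-check of the bandwidth figures quoted in the list above, here is a small sketch of the underlying arithmetic. It assumes the standard per-lane signalling rates (8/16/32 GT/s for PCIe 3/4/5) with 128b/130b line encoding, and treats the DDR5 interface as 4x 32-bit channels as listed; the helper names are ours, and real-world throughput will be lower due to protocol overheads.

```python
# Per-lane transfer rates in GT/s for each PCIe generation (128b/130b encoding).
PCIE_GT_PER_LANE = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING_EFFICIENCY = 128 / 130


def pcie_gb_per_s(gen: int, lanes: int) -> float:
    """Theoretical one-direction PCIe bandwidth in GB/s after line encoding."""
    return PCIE_GT_PER_LANE[gen] * lanes * ENCODING_EFFICIENCY / 8


def ddr_gb_per_s(mt_per_s: int, channels: int = 4, bits_per_channel: int = 32) -> float:
    """Theoretical peak memory bandwidth in GB/s."""
    return mt_per_s * channels * bits_per_channel / 8 / 1000


print(f"PCIe 5.0 x16: {pcie_gb_per_s(5, 16):.1f} GB/s (64 GB/s before encoding)")
print(f"PCIe 5.0 x8 : {pcie_gb_per_s(5, 8):.1f} GB/s (bifurcated GPU or SSD slot)")
print(f"DMI 4 x8    : {pcie_gb_per_s(4, 8):.1f} GB/s (CPU to PCH)")
print(f"DDR5-5600   : {ddr_gb_per_s(5600):.1f} GB/s")
print(f"DDR5-4800   : {ddr_gb_per_s(4800):.1f} GB/s")
```

The “up to 64GB/s” figure corresponds to the raw x16 rate; after encoding it comes to roughly 63 GB/s per direction.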

big/P(erformance) “Core” core

- Up to 8C/16T “Raptor Cove” (!) cores – improved from “Golden Cove” in ADL 😉

- Disabled AVX512! in order to match Atom cores (on consumer)

- (Server versions ADL-EX support AVX512 and new extensions like AMX and FP16 data-format)

- Single FMA-512 unit (though disabled)

- SMT support still included, 2x threads/core – thus 16 total

- L1I remains at 32kB

- L1D remains at 48kB

- L2 increased to 2MB per core (almost 2x ADL) like server parts (ADL-EX)

LITTLE/E(fficient) “Atom” core

- Up to 16c/16T “Gracemont” cores – thus 2x more than ADL but same core

- No SMT support, only 1 thread/core – thus 16 total (in 4x modules of 4x threads)

- AVX/AVX2 support – first for Atom core, but no AVX512!

- (Recall that “Phi” GP-GPU accelerator w/AVX512 was based on Atom core)

- L1I still at 64kB

- L1D still at 32kB

- L2 4MB shared by 4 cores (2x larger than ADL)

As with ADL, RPL’s big “Raptor Cove” cores have AVX512 disabled, which may prove to be a (big) problem considering AMD’s upcoming Zen4 (Ryzen 7000?) will support it. Even Centaur’s “little” CNS CPU supported AVX512. Centaur has now been bought by Intel, possibly in order to provide a little AVX512-supporting core. We may see Intel big Core + ex-Centaur LITTLE core designs.

While some are not keen on AVX512 due to the relatively large power required to use it (and thus lower clocks) as well as the large number of extensions (F, BW, CD, DQ, ER, IFMA, PF, VL, BF16, FP16, VAES, VNNI, etc.) – the performance gain should not be underestimated (2x if not higher). Most processors no longer need to “clock-down” (AVX512 negative offset) and can run at full speed – power/thermal limits notwithstanding. Now that AMD and ex-Centaur support AVX512, it is no longer an Intel-only, server-only instruction set (ISA).

RPL uses the very same “Gracemont” Atom cores as ADL – with no changes except a 2x larger cluster L2 (4MB vs. 2MB), which is welcome especially in light of the big cores also getting an L2 cache size upgrade. While AVX2 support for Atom cores was a huge upgrade, tests have shown them not to be as power efficient as Intel would like to make us believe – which is why RPL will have more of them, but clocked lower where the efficiency is greater.

As we hypothesized in our article (Mythical Intel 12th Gen Core AlderLake 10C/20T big/P Cores (i11-12999X) – AVX512 Extrapolated Performance) – ADL would have been great if Intel could have provided a version with only 10 big cores (replacing the 2x little cores cluster) that could have been an AVX512 SIMD-performance monster, trading blows with 16-core Zen3 (Ryzen 5950X). With RPL having space for 2 extra clusters – Intel could have had 10C + 8c or even 12C (big) AVX512-supporting cores that could go against Zen4…

Alas, what we are getting across SKUs is the same number of big cores (be they 8, 6, 4 or 2) and 2x clusters of Little cores (and thus 2x more little cores) but presumably at lower clocks in order to improve power efficiency. One issue with ADL across SKUs is that while TDP (on paper) is reasonable – turbo power has blown way past even AVX512-supporting “RocketLake” (!) despite the new efficiency claims. Thus, while disappointing, it is clear Intel is trying to bring power under control.

Changes in Sandra to support Hybrid

Like Windows (and other operating systems), we have had to make extensive changes to detection, thread scheduling and benchmarks to support hybrid/big-LITTLE. Thankfully, this means we are not dependent on Windows support – you can confidently test RaptorLake (like AlderLake before it) on older operating systems (e.g. Windows 10 or earlier – or Server 2022/2019/2016 or earlier) – although it is probably best to run the very latest operating systems for the best overall (outside benchmarking) computing experience.

- Detection Changes

- Detect big/P and LITTLE/E cores

- Detect correct number of cores (and type), modules and threads per core -> topology

- Detect correct cache sizes (L1D, L1I, L2) depending on core

- Detect multipliers depending on core

- Scheduling Changes

- “All Threads (MT/MC)” (thus all cores + all threads) – e.g. 32T

- “All Cores (MC aka big+LITTLE) Only” (both core types, no threads) – thus 24T

- “All Threads big/P Cores Only” (only “Core” cores + their threads) – thus 16T

- “big/P Cores Only” (only “Core” cores) – thus 8T

- “LITTLE/E Cores Only” (only “Atom” cores) – thus 16T

- “Single Thread big/P Core Only” (thus single “Core” core) – thus 1T

- “Single Thread LITTLE/E Core Only” (thus single “Atom” core) – thus 1T


- Benchmarking Changes

- Dynamic/Asymmetric workload allocator – based on each thread’s compute power

- Note some tests/algorithms are not well-suited for this (here P threads will finish and wait for E threads – thus effectively having only E threads). Different ways to test algorithm(s) will be needed.

- Dynamic/Asymmetric buffer sizes – based on each thread’s L1D caches

- Memory/Cache buffer testing using different block/buffer sizes for P/E threads

- Algorithms (e.g. GEMM) using different block sizes for P/E threads

- Best performance core/thread default selection – based on test type

- Some tests/algorithms run best just using cores only (SMT threads would just add overhead)

- Some tests/algorithms (streaming) run best just using big/P cores only (E cores just too slow and waste memory bandwidth)

- Some tests/algorithms sharing data run best on same type of cores only (either big/P or LITTLE/E) (sharing between different types of cores incurs higher latencies and lower bandwidth)

- Reporting the Performance Contribution & Ratio of each thread

- Thus the big/P and LITTLE/E cores contribution for each algorithm can be presented. In effect, this allows better optimisation of algorithms tested, e.g. detecting when either big/P or LITTLE/E cores are not efficiently used (e.g. overloaded)

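To illustrate the idea of a dynamic/asymmetric workload allocator from the list above, here is a minimal sketch that splits a fixed number of work items across threads in proportion to each thread's relative compute power. This is not Sandra's actual allocator: the function `split_work` and the integer weights (a P thread scored at 2, an E thread at 1) are hypothetical, chosen only to show the principle.

```python
def split_work(total_items: int, thread_weights: list[int]) -> list[int]:
    """Split total_items proportionally to integer weights (largest remainder).

    Every item is assigned exactly once; faster threads get larger shares.
    """
    if total_items < 0:
        raise ValueError("total_items must be non-negative")
    if not thread_weights or any(w <= 0 for w in thread_weights):
        raise ValueError("weights must be a non-empty list of positive integers")

    weight_sum = sum(thread_weights)
    shares, remainders = [], []
    for w in thread_weights:
        quotient, remainder = divmod(total_items * w, weight_sum)
        shares.append(quotient)
        remainders.append(remainder)

    # Hand out the items lost to rounding, largest remainder first.
    leftover = total_items - sum(shares)
    by_remainder = sorted(range(len(shares)), key=lambda i: remainders[i], reverse=True)
    for i in by_remainder[:leftover]:
        shares[i] += 1
    return shares


# Hypothetical relative scores: 8 P threads at weight 2, 16 E threads at weight 1.
weights = [2] * 8 + [1] * 16
shares = split_work(3200, weights)
print("P thread share:", shares[0], "| E thread share:", shares[-1])
```

With an even split, the P threads would finish early and wait for the E threads; weighting the shares lets all threads finish at roughly the same time, provided the weights reflect measured performance for the algorithm in question.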

Given the above, it is understandable that some developers may simply restrict their software to the big/Performance threads and ignore the LITTLE/Efficient threads altogether – at least when using compute-heavy algorithms.

For this reason we recommend using the very latest version of Sandra and keeping up with updated versions, which are likely to fix bugs and improve performance and stability.
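As an illustration of how software might restrict itself to a subset of threads, the sketch below builds the set of logical CPU indices for each of the scheduling modes listed earlier. It rests on an assumption that should be verified on the actual system: that logical CPUs are enumerated with the P-core SMT siblings first (adjacent pairs) followed by the E cores. The function `mode_cpu_sets` is ours and is not part of Sandra or any operating system API.

```python
def mode_cpu_sets(p_cores: int = 8, e_cores: int = 16, smt: int = 2) -> dict[str, list[int]]:
    """Logical CPU indices per scheduling mode.

    ASSUMPTION: P-core threads come first (siblings adjacent), then E cores.
    """
    p_threads = list(range(p_cores * smt))       # all SMT threads of the P cores
    p_first = p_threads[::smt]                   # one thread per P core
    e_start = p_cores * smt
    e_threads = list(range(e_start, e_start + e_cores))
    return {
        "All Threads (MT/MC)": p_threads + e_threads,
        "All Cores (MC aka big+LITTLE) Only": p_first + e_threads,
        "All Threads big/P Cores Only": p_threads,
        "big/P Cores Only": p_first,
        "LITTLE/E Cores Only": e_threads,
        "Single Thread big/P Core Only": p_first[:1],
        "Single Thread LITTLE/E Core Only": e_threads[:1],
    }


for mode, cpus in mode_cpu_sets().items():
    print(f"{mode}: {len(cpus)}T")
```

On Linux, a set such as `mode_cpu_sets()["big/P Cores Only"]` could be passed to `os.sched_setaffinity(0, cpus)` to pin the current process; on Windows an affinity mask would be built from the same indices.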

But is it RaptorLake or AlderLake-Refresh?

Unfortunately, it seems that not all CPUs labelled “13th Gen” will be “RaptorLake” (RPL); some mid-range i5 and low-range i3 models will instead come with “AlderLake” (Refresh) ADL-R cores, which is likely to confuse ordinary people into buying these older-gen CPUs.

What is more confusing is that the ID (aka CPUID) of these 13th Gen ADL-R/RPL models is the same (e.g. 0B067x) and does not match the old ADL (e.g. 09067x). However, the L2 cache sizes are the same as old ADL (1.25MB for big/Core and 2MB for LITTLE/Atom cluster) not the larger RPL (2MB for big/Core and 4MB for LITTLE/Atom cluster).

Note: There is still a possibility these are actually RPL cores but with L2 cache(s) reduced (part disabled/fused off) in order not to outperform higher models.
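For readers who want to interpret the IDs quoted above, the sketch below decodes a CPUID signature (the EAX value returned by CPUID leaf 1) into family, model and stepping using the standard x86 field layout. The function name `decode_signature` is ours; the two example values are the signatures that appear in this article.

```python
from typing import NamedTuple


class CpuSignature(NamedTuple):
    family: int
    model: int
    stepping: int


def decode_signature(eax: int) -> CpuSignature:
    """Decode the CPUID leaf 1 EAX value into display family/model/stepping."""
    stepping = eax & 0xF
    base_model = (eax >> 4) & 0xF
    base_family = (eax >> 8) & 0xF
    ext_model = (eax >> 16) & 0xF
    ext_family = (eax >> 20) & 0xFF

    # The extended family is only added when the base family is 0xF.
    family = base_family + ext_family if base_family == 0xF else base_family
    # The extended model is prepended for families 6 and 0xF.
    model = (ext_model << 4) | base_model if base_family in (0x6, 0xF) else base_model
    return CpuSignature(family, model, stepping)


for label, eax in (("13th Gen (0B0671)", 0x0B0671), ("12th Gen ADL (090672)", 0x090672)):
    sig = decode_signature(eax)
    print(f"{label}: family {sig.family}, model 0x{sig.model:02X}, stepping {sig.stepping}")
```

The two signatures differ only in the extended-model field (model 0xB7 vs. 0x97), so the ID alone separates 13th Gen from 12th Gen parts but not RPL from ADL-R; the L2 cache sizes are needed for that.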

CPU (Core) Performance Benchmarking

In this article we test CPU core performance; please see our other articles on:

- CPU

- Intel 13th Gen Core RaptorLake (i5 13600K(F)) PreView & Benchmarks – Mid-Range Hybrid

- Intel 13th Gen Core RaptorLake? AlderLake? (i5 13400) PreView & Benchmarks – Value Hybrid Efficiency

- Intel 12th Gen Core AlderLake Mobile (i7-12700H) Review & Benchmarks – big/LITTLE Performance

- big/Performance Core Performance Analysis – Intel 12th Gen Core AlderLake (i9-12900K)

- Cache & Memory

- GP-GPU

Hardware Specifications

We are comparing the Intel Core i9 13900K(F) with its predecessor (ADL) as well as competing desktop architectures (AMD), with a view to upgrading to a top-of-the-range, high-performance design.

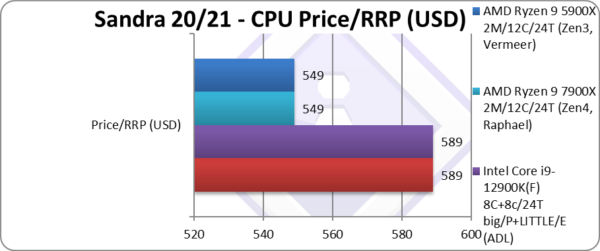

| Specifications | Intel Core i9 13900K(F) 8C+16c/32T (RPL) | Intel Core i9 12900K(F) 8C+8c/24T (ADL) | AMD Ryzen 9 7900X 2M/12C/24T (Zen4) | AMD Ryzen 9 5900X 2M/12C/24T (Zen3) | Comments | |

| Arch(itecture) | Raptor Cove + Gracemont / RaptorLake | Golden Cove + Gracemont / AlderLake | Zen4 / Raphael | Zen3 / Vermeer | The very latest arch | |

| Modules (CCX) / Cores (CU) / Threads (SP) | 8C+16c / 32T | 8C+8c / 24T | 2M / 12C / 24T | 2M / 12C / 24T | 8 more (2x) LITTLE cores! | |

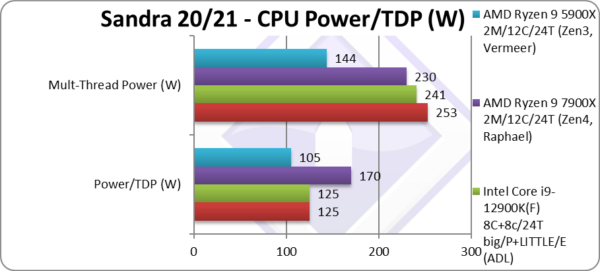

| Rated/Turbo Speed (GHz) | 3.0 – 5.8GHz [+12%] / 2.2 – 4.3GHz [+10%] |

3.2 – 5.2GHz / 2.4 – 3.9GHz | 4.7 – 5.6GHz | 3.7 – 4.8GHz | 12% big Core, 10% Atom Turbo |

|

| Rated/Turbo Power (W) |

125 – 253W [PL2][+5%] | 125 – 241W [PL2] | 170 – 230W [PTT] | 105 – 144W [PTT] |

5% higher Turbo power |

|

| L1D / L1I Caches | 8x 48/32kB + 16x 32/64kB |

8x 48/32kB + 8x 32/64kB | 12x 32kB 8-way / 12x 32kB 8-way | 12x 32kB 8-way / 12x 32kB 8-way | Same L1D/L1I caches | |

| L2 Caches | 8x 2MB + 4x 4MB (32MB) [+2.3x] |

8x 1.25MB + 2x 2MB (14MB) | 12x 1MB 16-way (12MB) | 12x 512kB 16-way (6MB) | L2 is over 2x larger! | |

| L3 Cache(s) | 36MB 16-way [+20%] | 30MB 16-way | 2x 32MB 16-way (64MB) | 2x 32MB 16-way (64MB) | L3 is 20% larger | |

| Microcode (Firmware) | 0B0671-10B [B0 stepping] | 090672-1E [C0 stepping] | A20F10-1003 | 8F7100-1009 | Revisions just keep on coming. | |

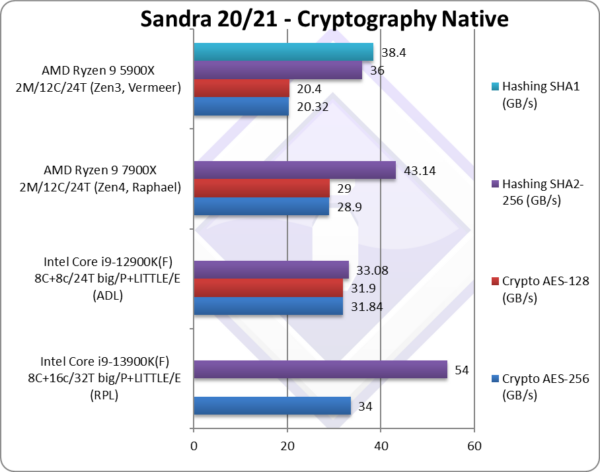

| Special Instruction Sets | VNNI/256, SHA, VAES/256 | VNNI/256, SHA, VAES/256 | AVX512, VNNI/512, SHA, VAES/512 | AVX2/FMA, SHA | AVX512 still MIA | |

| SIMD Width / Units |

256-bit | 256-bit | 512-bit (as 2x 256-bit) | 256-bit | Same SIMD units | |

| Price / RRP (USD) |

$599 | $589 | $549 | $549 | Price is a little higher? | |
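The bracketed deltas in the specification table (RPL vs. ADL) can be reproduced from the listed figures. The sketch below is only a sanity check of that arithmetic; the helper `pct_gain` is ours and all inputs are copied from the table.

```python
def pct_gain(new: float, old: float) -> float:
    """Percentage increase of new over old."""
    return (new / old - 1.0) * 100.0


# Total L2 = per-core L2 of the big cores + cluster L2 of the Atom modules (MB).
rpl_l2_mb = 8 * 2.0 + 4 * 4.0    # 8 big cores + 4 clusters of 4 Atom cores
adl_l2_mb = 8 * 1.25 + 2 * 2.0   # 8 big cores + 2 clusters of 4 Atom cores

print(f"big Core turbo : +{pct_gain(5.8, 5.2):.1f}%")   # 5.8GHz vs 5.2GHz
print(f"Atom turbo     : +{pct_gain(4.3, 3.9):.1f}%")   # 4.3GHz vs 3.9GHz
print(f"Turbo power    : +{pct_gain(253, 241):.1f}%")   # PL2, 253W vs 241W
print(f"L2 total       : {rpl_l2_mb:.0f}MB vs {adl_l2_mb:.0f}MB = {rpl_l2_mb / adl_l2_mb:.1f}x")
print(f"L3             : +{pct_gain(36, 30):.0f}%")
print(f"Price          : +{pct_gain(599, 589):.1f}%")
```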

Disclaimer

This is an independent review (critical appraisal) that has not been endorsed nor sponsored by any entity (e.g. Intel, etc.). All trademarks acknowledged and used for identification only under fair use.

The review contains only public information that was neither provided under NDA nor embargoed. At publication time, the products had not been directly tested by SiSoftware but had been submitted to the public Benchmark Ranker; thus the accuracy of the benchmark scores cannot be verified. However, they appear consistent and pass current validation checks.

And please, don’t forget small ISVs like ourselves in these very challenging times. Please buy a copy of Sandra if you find our software useful. Your custom means everything to us!

SiSoftware Official Ranker Scores

- 13th Gen Intel Core i5-13600K (6C + 8c / 20T)

- 13th Gen Intel Core i7-13700K (8C + 8c / 24T)

- 13th Gen Intel Core i5-13400 (6C + 4c / 16T)

- 13th Gen Intel Core i9-13900KF (8C + 16c / 32T)

- 13th Gen Intel Core i9-13900K (8C + 16c / 32T)

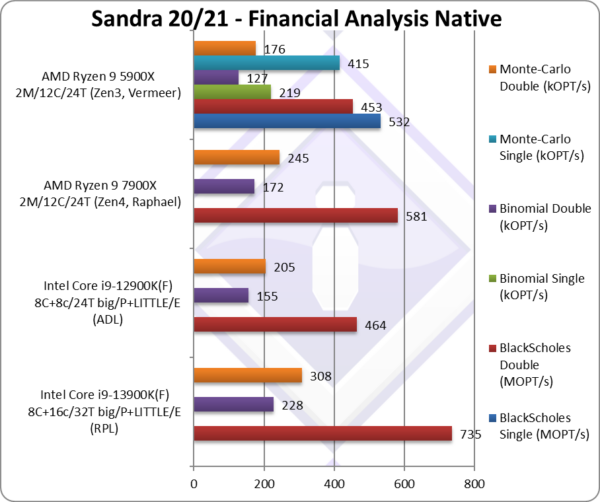

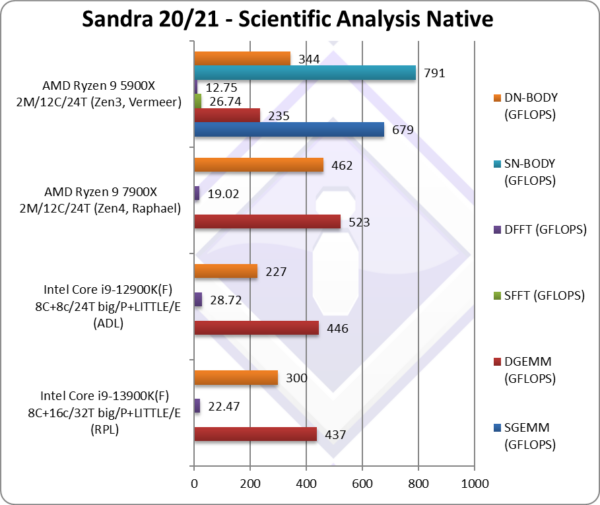

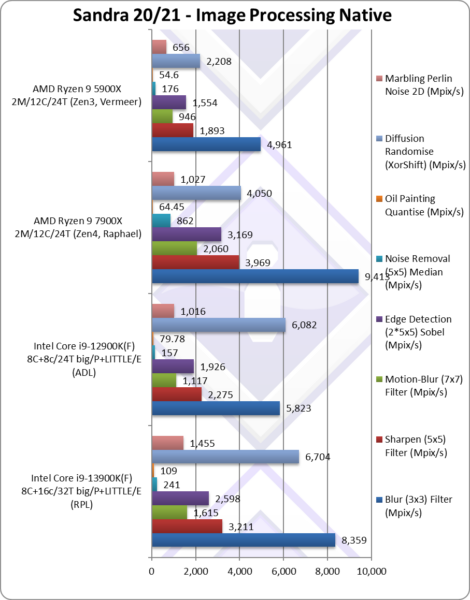

Native Performance

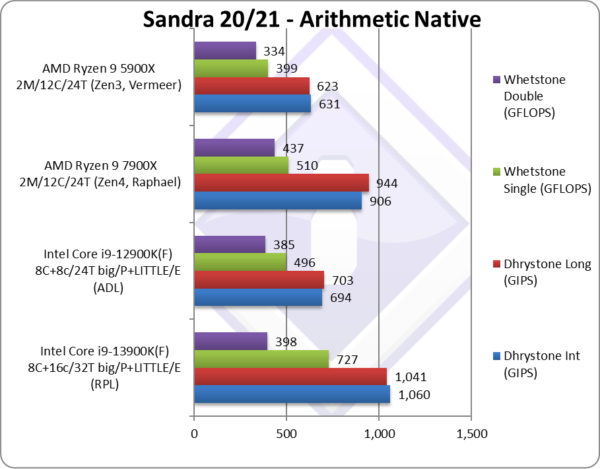

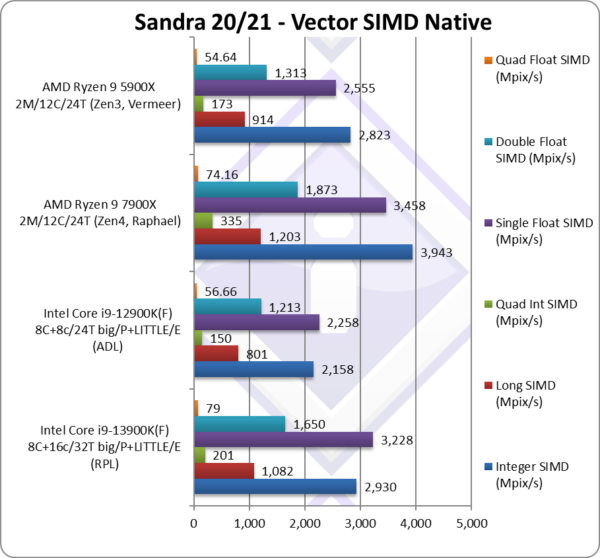

We are testing native arithmetic, SIMD and cryptography performance using the highest performing instruction sets. “RaptorLake” (RPL) does not support AVX512 – but it does support 256-bit versions of some original AVX512 extensions.

Results Interpretation: Higher values (GOPS, MB/s, etc.) mean better performance.

Environment: Windows 11 x64 (22H2), latest AMD and Intel drivers. 2MB “large pages” were enabled and in use. Turbo / Boost was enabled on all configurations.

Final Thoughts / Conclusions

Summary: Much faster and battling for the top (Intel i9 13900K(F)): 8.5/10

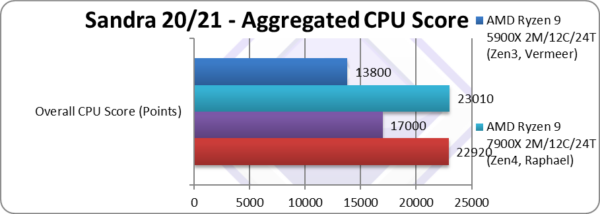

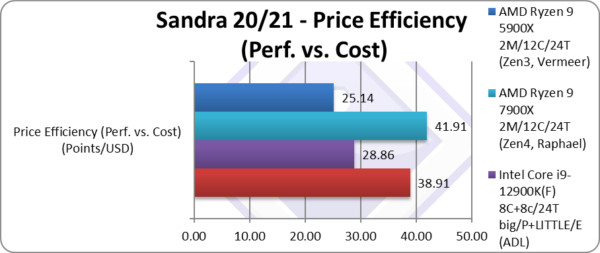

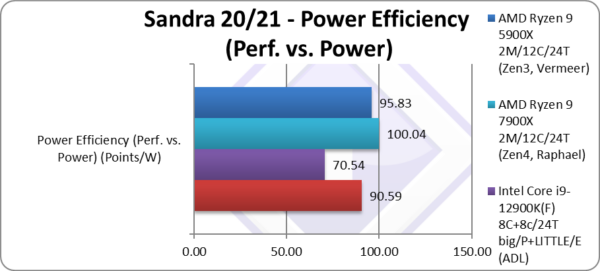

Like every revision (ex-“tock”) Intel arch(itecture), 13th Gen(eration) “RaptorLake” (RPL) is an improved 12th Gen “AlderLake” (ADL) that ends up much faster and thus more efficient (both price-wise and even power-wise). This returns Intel to competitiveness against AMD’s latest Zen4, battling for the top spot.

Intel has managed to increase the clocks of the big Cores and almost double their L2 cache (2MB/core vs. 1.25MB/core); it has also managed to double (16c vs. 8c) the number of Little Atom cores – as well as double their cluster L2 cache (4MB vs. 2MB). This means the 13900K(F) has twice the number of real cores (24C/c) as AMD’s 7900X (12C) and the same number of threads as AMD’s 7950X (32T).

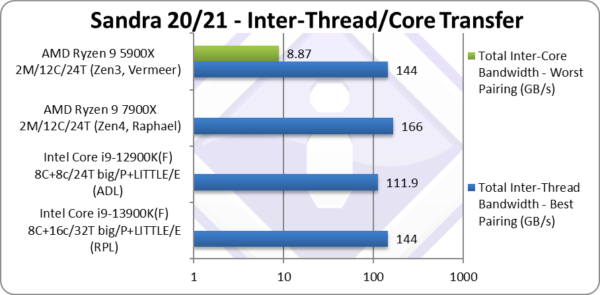

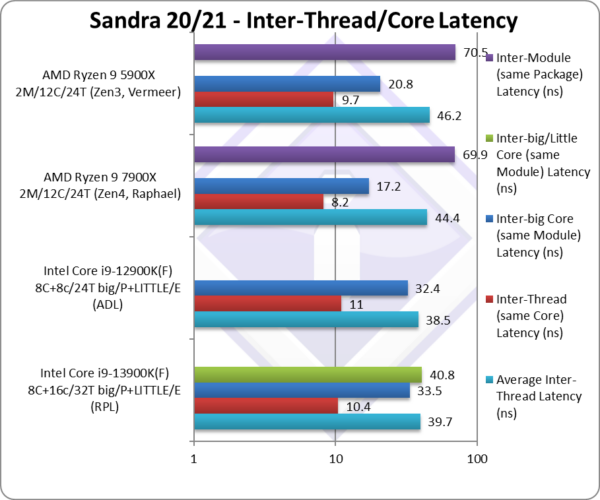

Across all benchmarks – we see RPL (13900K(F)) 35% faster than ADL (12900K(F)) – not bad for an evolutionary architecture update! RPL also benefits from more mature software (e.g. Windows 11 22H2) and microcode/BIOS. However, power usage has gone up as well – though this seems to be happening to the competition too (AMD Zen4).

It is disappointing that Intel was not able to enable AVX512 on RPL – but that was very unlikely as the Atom cores are unchanged from ADL. AMD has shown with Zen4 (and even VIA/Centaur) that you don’t need a full-width 512-bit implementation to benefit from AVX512 – and Intel should consider it for future Atom cores. Sandra’s benchmark results gain significant uplift from AVX512 and here RPL is at a distinct disadvantage versus Zen4.

On power efficiency: to aggressively save power, you could use the large number of Little Atom cores (16!) to handle just about any workload and keep the big Cores (8) parked. Effectively, unless gaming/benchmarking/etc. – you’d just be using an ultra-efficient, many-threaded Atom system – but still be able to crank it up when needed.

If you have already upgraded to Socket 1700 for the new technologies (DDR5, PCIe 5.0, Thunderbolt/USB 4.0, etc.) and want more, then RPL is a nice upgrade. As the next Intel core arch “MeteorLake” (MTL) will use a different socket, RPL does not have great upgrade potential, and it may be an idea to wait for discounts from Intel or AMD before making a choice…


Further Articles

Please see our other articles on:

- CPU

- Intel 13th Gen Core RaptorLake? AlderLake? (i5 13400) PreView & Benchmarks – Value Hybrid Efficiency

- Intel 12th Gen Core AlderLake Mobile (i7-12700H) Review & Benchmarks – big/LITTLE Performance

- big/Performance Core Performance Analysis – Intel 12th Gen Core AlderLake (i9-12900K)

- Intel 11th Gen Core RocketLake (i7-11700K) Review & Benchmarks – CPU AVX512 Performance

- Cache & Memory

- GP-GPU
