What is “AlderLake”?

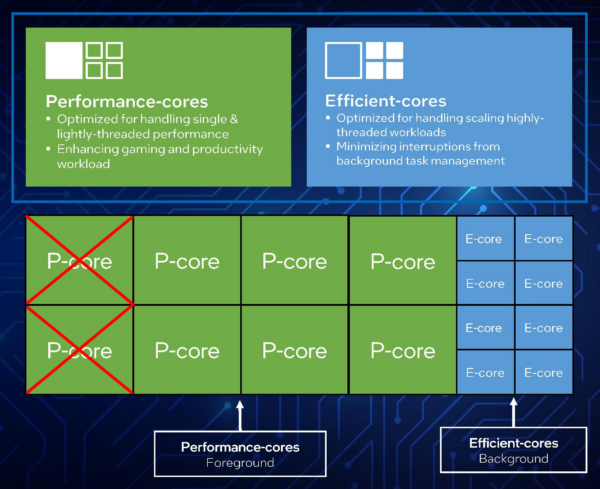

It is the “next-generation” (12th) Core architecture – replacing the short-lived “RocketLake” (RKL) that finally replaced the many, many “Skylake” (SKL) derivative architectures (6th-10th). It is the 1st mainstream “hybrid” arch – i.e. combining big/P(erformant) “Core” cores with LITTLE/E(fficient) “Atom” cores in a single package. While in the ARM world such SoC designs are quite common, this is quite new for x86 – thus operating systems (Windows) and applications may need to be updated.

Unlike the “limited edition” ULV-only 1st gen hybrid “LakeField” (LKF) arch (1C + 4c 6T and thus very low compute power) – ADL launches on desktop, mobile and ultra-mobile (ULV) platforms – all with different counts of big/P and LITTLE/E cores. For example (data as per AnandTech: Intel 12th Gen Core Alder Lake for Desktops: Top SKUs Only, Coming November 4th):

- Desktop (S) (65-125W rated, up to 250W turbo)

- 8C (aka big/P) + 8c (aka LITTLE/E) / 24T total (12th Gen Core i9-12900K(F))

- 8C + 4c / 20T total (12th Gen Core i7-12700K(F))

- 6C + 4c / 16T total (12th Gen Core i5-12600K(F))

- 6C only / 12T total (12th Gen Core i5-12600)

- High-Performance Mobile (H/HX) (45-55W rated, up to 157W turbo)

- 8C + 8c / 24T total

- Mobile (P) (20-28W rated, up to 64W turbo)

- 6C + 8c / 20T total

- Ultra-Mobile/ULV (U) (9-15W rated, up to 29W turbo)

- 2C + 8c / 12T total
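The thread totals in the list above follow from the two core types: each big/P core contributes 2 SMT threads and each LITTLE/E core contributes 1. A minimal sketch of that arithmetic (the function name and the `configs` table are illustrative, not part of any Intel or Sandra tooling):

```python
def total_threads(p_cores: int, e_cores: int, smt_per_p_core: int = 2) -> int:
    """Hardware threads: big/P cores run `smt_per_p_core` threads each,
    LITTLE/E cores run exactly one thread each (no SMT)."""
    return p_cores * smt_per_p_core + e_cores


# (big/P cores, LITTLE/E cores) taken from the SKU list above
configs = {
    "i9-12900K (8C + 8c)": (8, 8),
    "i7-12700K (8C + 4c)": (8, 4),
    "i5-12600K (6C + 4c)": (6, 4),
    "i5-12600 (6C only)": (6, 0),
    "Mobile P (6C + 8c)": (6, 8),
    "ULV U (2C + 8c)": (2, 8),
}

for name, (p, e) in configs.items():
    print(f"{name}: {total_threads(p, e)}T")
```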

For best performance and efficiency, this does require operating system scheduler changes – in order for threads to be assigned on the appropriate physical core/thread. For compute-heavy/low-latency this means a “big/P” core; for low compute/power-limited this means a “LITTLE/E” core.

In the Windows world, this means “Windows 11” for clients and “Windows Server vNext” (note not the recently released Server 2022 based on 21H2 Windows 10 kernel) for servers. The Windows power plans (e.g. “Balanced“, “High Performance“, etc.) contain additional settings (hidden), e.g. prefer (or require) scheduling on big/P or LITTLE/E cores and so on. But in general, the scheduler is supposed to automatically handle it all based on telemetry from the CPU.

Windows 11 also gets an updated QoS (Quality of Service) API (aka functions) allowing app(lications) like Sandra to indicate which threads should use big/P cores and which should use LITTLE/E cores. Naturally, this means updated applications will be needed for best power efficiency.

AlderLake Mobile H 6C+8c

General SoC Details

- 10nm++ (Intel 7) improved process

- Unified 30MB L3 cache (almost 2x 16MB of RKL)

- PCIe 5.0 (up to 64GB/s with x16 lanes) – up to x16 lanes PCIe5 + x4 lanes PCIe4

- NVMe SSDs may thus be limited to PCIe4 or bifurcate main x16 lanes with GPU to PCIe5 x8 + x8

- PCH up to x12 lanes PCIe4 + x16 lanes PCIe3

- CPU to PCH DMI 4 x8 link (aka PCIe4 x8)

- DDR5/LP-DDR5 memory controller support (e.g. 4x 32-bit channels) – up to 4800Mt/s (official)

- New XMP 3.0 (eXtreme Memory Profile(s)) specification for overclocking with 3 profiles and 2 user-writable profiles (!)

- Thunderbolt 4 (and thus USB 4)
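As a rough check on the interconnect and memory figures in the list above, peak bandwidth can be estimated from the transfer rate and the link width. The sketch below assumes the published PCIe per-lane rates (32 GT/s for PCIe 5.0, 16 GT/s for PCIe 4.0) and 128b/130b encoding; the function names are illustrative. The "64GB/s" figure quoted above corresponds to the raw rate before encoding overhead.

```python
def pcie_bandwidth_gbs(gt_per_s: float, lanes: int, encoding: float = 128 / 130) -> float:
    """Peak one-direction PCIe bandwidth in GB/s.
    Each lane moves 1 bit per transfer; `encoding` is the usable payload fraction."""
    return gt_per_s * lanes * encoding / 8


def ddr_bandwidth_gbs(mt_per_s: int, channels: int, channel_bits: int) -> float:
    """Peak memory bandwidth in GB/s for `channels` channels of `channel_bits` width."""
    return mt_per_s * channels * channel_bits / 8 / 1000


print(f"PCIe 5.0 x16 (raw):      {pcie_bandwidth_gbs(32, 16, encoding=1.0):.1f} GB/s")
print(f"PCIe 5.0 x16 (128b/130b): {pcie_bandwidth_gbs(32, 16):.1f} GB/s")
print(f"DMI 4 x8 (PCIe 4.0 x8):  {pcie_bandwidth_gbs(16, 8):.1f} GB/s")
print(f"DDR5-4800, 4x 32-bit:    {ddr_bandwidth_gbs(4800, 4, 32):.1f} GB/s")
```

These are theoretical peaks; sustained throughput will be lower once protocol overhead and memory timings are taken into account.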

big/P(erformance) “Core” core

- Up to 8C/16T “Golden Cove” cores (Intel 7) – improved from “Willow Cove” in TGL – claimed +19% IPC uplift

- No AVX512! in order to match Atom cores (on consumer)

- (Server versions should support AVX512 and new extensions like AMX and FP16 data-format)

- SMT support still included, 2 threads/core – thus 16 total

- 6-wide decode (from 4-way until now) + many other front-end upgrades

- L1I remains at 32kB but iTLB increased 2x (256 vs. 128)

- L1D remains at 48kB but dTLB increased 50% (96 vs. 64)

- L2 increased to 1.25MB (over 2x TGL of 512kB) – server versions 2MB

- See big/Performance Core Performance Analysis – Intel 12th Gen Core AlderLake (i9-12900K) article for more information

LITTLE/E(fficient) “Atom” core

- Up to 8c/8T “Gracemont” cores (Intel 7) – improved from “Tremont” – claimed “Skylake” (SKL) Core performance (!)

- No SMT support, only 1 thread/core – thus 8 total (in 2 modules of 4 threads)

- AVX2 support – first for Atom core, but no AVX512!

- (Recall that “Phi” GP-GPU accelerator w/AVX512 was based on Atom core)

- L1I at 64kB (2x increase) same latency

- L1D still at 32kB

- L2 2MB (shared by 4 cores) aka 512kB/core [not 1MB/core as expected]

The big news – beside the hybrid arch – is that AVX512 supported by desktop/mobile “Ice Lake” (ICL), “Tiger Lake” (TGL) and “Rocket Lake” (RKL) – is no longer enabled on “Alder Lake” big/P cores in order to match the Atom LITTLE/E cores. Future HEDT/server versions with presumably only big/P cores should support it just like Ice Lake-X (ICL-X).

Note: It seems that AVX512 can be enabled on big/P Cores (at least for now) on some mainboards that provide such a setting; naturally LITTLE/E Atom cores need to be disabled. We plan to test this ASAP.

In order to somewhat compensate – there are now AVX2 versions of AVX512 extensions:

- VNNI/256 – (Vector Neural Network Instructions, dlBoost FP16/INT8) e.g. convolution

- VAES/256 – (Vector AES) accelerating block-crypto

- SHA HWA accelerating hashing (SHA1, SHA2-256 only)

While for Atom cores AVX2 support is a huge upgrade that will make new Atom designs properly performant (not just power efficient), losing AVX512 on Core is a big loss – especially for compute-heavy software that has been updated in order to take advantage of the new instruction set. While server versions still support AVX512 (including new extensions), it is debatable how much developers will bother now unless targeting that specific niche market (heavy compute on servers).

We saw in the “RocketLake” review (Intel 11th Gen Core RocketLake AVX512 Performance Improvement vs AVX2/FMA3) that AVX512 makes RKL almost 40% faster vs. AVX2/FMA3 and, despite its high power consumption, it made RKL competitive. Without it, RKL (with 2 fewer cores than “Comet Lake” (CML)) would not have improved sufficiently to be worth it.

At SiSoftware, with Sandra, we naturally adopted and supported AVX512 from the start (with SKL-X) and added support for the various new extensions as they arrived in subsequent cores, which makes this even more disappointing. While it is not a problem to add AVX2 versions (e.g. VAES, VNNI), their performance cannot be expected to match the original AVX512 versions.

Let’s note that originally AVX512 launched with the Atom-core powered “Phi” GP-GPU accelerators – thus it would not have been impossible for Intel to add support to the new Atom core – and perhaps we shall see that in future arch… when an additional compute performance uplift will be required (i.e. deal with AMD competition).

The move to DDR5 (and LP-DDR5X) is significant, providing finer granularity (32-bit channels not 64) – allowing single DIMM multi-channel operation as well as much higher bandwidth (although latencies will naturally increase). With an even larger number of cores (up to 16 now) – dual channel DDR4 is just insufficient to feed all these cores. But at launch, it may be cripplingly expensive.

Changes in Sandra to support Hybrid

Like Windows (and other operating systems), we have had to make extensive changes to detection, thread scheduling and benchmarks to support hybrid/big-LITTLE. Thankfully, this means we are not dependent on Windows support – you can confidently test AlderLake on older operating systems (e.g. Windows 10 or earlier – or Server 2022/2019/2016 or earlier) – although it is probably best to run the very latest operating systems for the best overall (outside benchmarking) computing experience.

- Detection Changes

- Detect big/P and LITTLE/E cores

- Detect correct number of cores (and type), modules and threads per core -> topology

- Detect correct cache sizes (L1D, L1I, L2) depending on core

- Detect multipliers depending on core

- Scheduling Changes

- “All Threads (MT/MC)” (thus all cores + all threads) – e.g. 24T

- “All Cores (MC aka big+LITTLE) Only” (both core types, no threads) – thus 16T

- “All Threads big/P Cores Only” (only “Core” cores + their threads) – thus 16T

- “big/P Cores Only” (only “Core” cores) – thus 8T

- “LITTLE/E Cores Only” (only “Atom” cores) – thus 8T

- “Single Thread big/P Core Only” (thus single “Core” core) – thus 1T

- “Single Thread LITTLE/E Core Only” (thus single “Atom” core) – thus 1T


- Benchmarking Changes

- Dynamic/Asymmetric workload allocator – based on each thread’s compute power

- Note some tests/algorithms are not well-suited for this (here P threads will finish and wait for E threads – thus effectively having only E threads). Different ways to test algorithm(s) will be needed.

- Dynamic/Asymmetric buffer sizes – based on each thread’s L1D caches

- Memory/Cache buffer testing using different block/buffer sizes for P/E threads

- Algorithms (e.g. GEMM) using different block sizes for P/E threads

- Best performance core/thread default selection – based on test type

- Some tests/algorithms run best just using cores only (SMT threads would just add overhead)

- Some tests/algorithms (streaming) run best just using big/P cores only (E cores just too slow and waste memory bandwidth)

- Some tests/algorithms sharing data run best on same type of cores only (either big/P or LITTLE/E) (sharing between different types of cores incurs higher latencies and lower bandwidth)

- Reporting the Compute Power Contribution of each thread

- Thus the big/P and LITTLE/E cores contribution for each algorithm can be presented. In effect, this allows better optimisation of algorithms tested, e.g. detecting when either big/P or LITTLE/E cores are not efficiently used (e.g. overloaded)

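To illustrate the idea behind an asymmetric workload allocator, here is a minimal sketch that splits a fixed number of work items across threads in proportion to each thread's relative compute power. This is not Sandra's actual implementation: the function name `split_work` and the example weights (2.0 for a big/P thread, 1.0 for a LITTLE/E thread) are hypothetical values chosen purely for illustration.

```python
from typing import List, Sequence


def split_work(total_items: int, thread_power: Sequence[float]) -> List[int]:
    """Divide `total_items` among threads proportionally to `thread_power`.
    Uses the largest-remainder method so the shares always sum to `total_items`."""
    total_power = sum(thread_power)
    exact = [total_items * p / total_power for p in thread_power]
    shares = [int(x) for x in exact]  # round each share down first
    leftover = total_items - sum(shares)
    # Hand the remaining items to the threads with the largest fractional parts.
    by_fraction = sorted(range(len(exact)), key=lambda i: exact[i] - shares[i], reverse=True)
    for i in by_fraction[:leftover]:
        shares[i] += 1
    return shares


# Hypothetical weights: 2 big/P threads at 2.0, 2 LITTLE/E threads at 1.0
print(split_work(600, [2.0, 2.0, 1.0, 1.0]))
```

With equal-sized shares the big/P threads would finish early and wait for the LITTLE/E threads; weighting the shares aims to have all threads finish at roughly the same time.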

As per the above, some developers can be forgiven for simply restricting their software to the big/Performance threads and ignoring the LITTLE/Efficient threads altogether – at least for compute-heavy algorithms.

For this reason we recommend using the very latest version of Sandra and keeping up with updated versions that are likely to fix bugs and improve performance and stability.

CPU (Core) Performance Benchmarking

In this article we test CPU core performance; please see our other articles on:

- CPU

- Intel 13th Gen Core RaptorLake (i9-13900) Preview & Benchmarks – Hybrid Performance

- Intel 12th Gen Core AlderLake (i9-12900K) Review & Benchmarks – Hybrid Performance

- big/Performance Core Performance Analysis – Intel 12th Gen Core AlderLake (i9-12900K)

- Intel 11th Gen Core RocketLake (i7-11700K) Review & Benchmarks – CPU AVX512 Performance

- Cache & Memory

- GP-GPU

Hardware Specifications

We are comparing the mythical top-of-the-range Gen 12 Intel with competing architectures as well as competitors (AMD) with a view to upgrading to a top-of-the-range, high performance design.

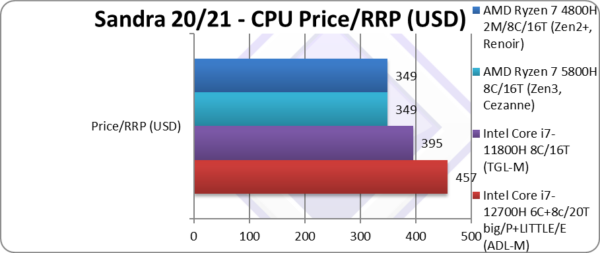

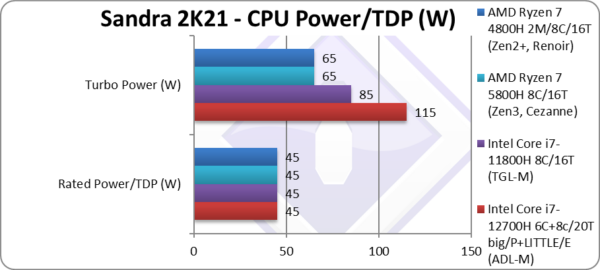

| Specifications | Intel Core i7 12700H 6C+8c/20T (ADL-M) | Intel Core i7 11800H 8C/16T (TGL-M) | AMD Ryzen 7 5800H 8C/16T | AMD Ryzen 7 4800H 2M/8C/16T | Comments |
|---|---|---|---|---|---|
| Arch(itecture) | Golden Cove + Gracemont / AlderLake | Willow Cove / TigerLake 10nm | Zen3 / Cezanne 7nm | Zen2+ / Renoir 7nm | The very latest arch |
| Cores (CU) / Threads (SP) | 6C+8c / 20T | 8C / 16T | 8C / 16T | 2M / 8C / 16T | 2 fewer big cores but more overall |
| Rated Speed (GHz) | 3.2 big / 2.4 LITTLE | 2.3 | 3.2 | 2.9 | Base clock is higher |
| All/Single Turbo Speed (GHz) | 4.7 big / 3.5 LITTLE | 4.6 | 4.4 | 3.2 | Turbo is similar |
| Rated/Turbo Power (W) | 45-115 | 45-85 | 45-65 | 45-65 | TDP is 35% higher. |
| L1D / L1I Caches | 6x 48kB/32kB + 4x 64kB/32kB | 8x 48kB/32kB | 8x 32kB/32kB | 8x 32kB/32kB | L1D is 50% larger. |
| L2 Caches | 6x 1.25MB + 2x 2MB (11.5MB) | 8x 1.25MB (10MB) | 8x 512kB (4MB) | 8x 512kB (4MB) | L2 is about the same |
| L3 Cache(s) | 24MB | 24MB | 16MB | 2x 4MB (8MB) | L3 is equal |
| Microcode (Firmware) | 0906A3-414 | 0806D1-2C | A50F00-0C | 860F01-106 | Revisions just keep on coming. |
| Special Instruction Sets | VNNI/256, SHA, VAES/256 | AVX512, VNNI/512, SHA, VAES/512 | AVX2/FMA, SHA | AVX2/FMA, SHA | Losing AVX512 |
| SIMD Width / Units | 2x 256-bit | 512-bit (1x FMA) | 2x 256-bit | 2x 256-bit | Less wide SIMD units |
| Price / RRP (USD) | $457 | $395 | $349 | $349 | Price is a little higher. |

Disclaimer

This is an independent review (critical appraisal) that has not been endorsed nor sponsored by any entity (e.g. Intel, etc.). All trademarks acknowledged and used for identification only under fair use.

The review contains only public information; nothing in it was provided under NDA or is embargoed. At publication time, the products have not been directly tested by SiSoftware but submitted to the public Benchmark Ranker; thus the accuracy of the benchmark scores cannot be verified, however, they appear consistent and pass current validation checks.

And please, don’t forget small ISVs like ourselves in these very challenging times. Please buy a copy of Sandra if you find our software useful. Your custom means everything to us!

Native Performance

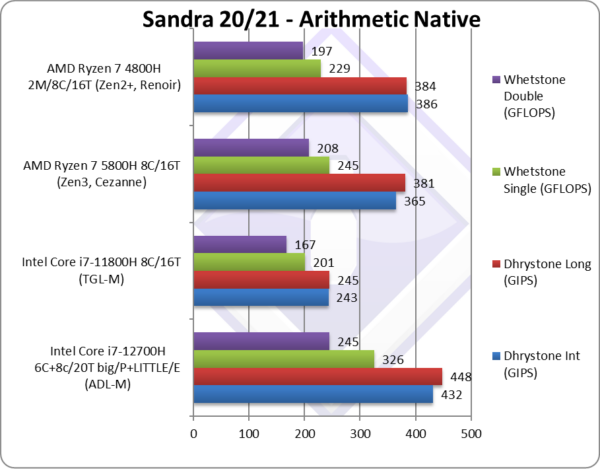

We are testing native arithmetic, SIMD and cryptography performance using the highest performing instruction sets. “AlderLake” (ADL) does not support AVX512 – but it does support 256-bit versions of some original AVX512 extensions.

Results Interpretation: Higher values (GOPS, MB/s, etc.) mean better performance.

Environment: Windows 11 x64, latest AMD and Intel drivers. 2MB “large pages” were enabled and in use. Turbo / Boost was enabled on all configurations.

| Native Benchmarks | Intel Core i7 12700H 6C+8c/20T (ADL-M) | Intel Core i7 11800H 8C/16T (TGL-M) | AMD Ryzen 7 5800H 8C/16T | AMD Ryzen 7 4800H 2M/8C/16T | Comments |
|---|---|---|---|---|---|
| Native Dhrystone Integer (GIPS) | 432 [+18%] | 243 | 365 | 386 | ADL-M beats AMD by 18% and also TGL-M |
| Native Dhrystone Long (GIPS) | 448 [+18%] | 245 | 381 | 384 | A 64-bit integer test, same result |
| Native FP32 (Float) Whetstone (GFLOPS) | 326 [+33%] | 201 | 245 | 229 | With floating-point, ADL-M is 33% faster |
| Native FP64 (Double) Whetstone (GFLOPS) | 245 [+18%] | 167 | 208 | 197 | With FP64, ADL-M is 18% faster than AMD |

No wonder Intel likes legacy benchmarks – ADL-M beats both TGL-M and the AMD competition by a large 18-33% margin, despite having just 6 big cores vs. 8 on the old TGL-M and on both AMD competitors!

It seems that the firmware + BIOS + Windows 11 updates have done the trick. Note that due to being “legacy” none of the benchmarks support AVX512; while we could update them, they are not vectorise-able in the “spirit they were written” – thus single-lane AVX512 cannot run faster than AVX2/SSEx.
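The bracketed percentages in the ADL-M column appear to be the relative difference against the AMD Ryzen 7 5800H score (an assumption based on the row comments, which compare against "AMD"). A minimal sketch of that calculation, using the Dhrystone and Whetstone scores quoted above; the function name is illustrative:

```python
def relative_diff_percent(score: float, reference: float) -> int:
    """Relative difference of `score` vs. `reference`, rounded to a whole percent."""
    return round((score / reference - 1) * 100)


# (benchmark, ADL-M score, Ryzen 7 5800H score) as listed in the table
results = [
    ("Dhrystone Integer (GIPS)", 432, 365),
    ("Dhrystone Long (GIPS)", 448, 381),
    ("Whetstone FP32 (GFLOPS)", 326, 245),
    ("Whetstone FP64 (GFLOPS)", 245, 208),
]

for name, adl, amd in results:
    print(f"{name}: {relative_diff_percent(adl, amd):+d}%")
```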

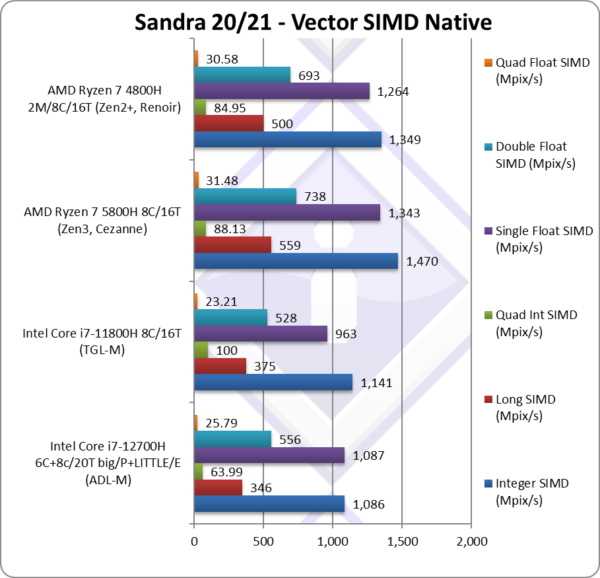

Native Integer (Int32) Multi-Media (Mpix/s) | 1,086 [-26%] | 1,141* | 1,470 | 1,349 | ADL-M is 26% slower than AMD and even TGL-M |

|

Native Long (Int64) Multi-Media (Mpix/s) | 346 [-38%] | 375* | 559 | 500 | With a 64-bit, ADL-M is 38% slower. |

|

Native Quad-Int (Int128) Multi-Media (Mpix/s) | 63.99 [-27%] | 100** | 88.13 | 84.95 | Using 128-bit integers, ADL-M is 27% slower. |

|

Native Float/FP32 Multi-Media (Mpix/s) | 1,087 [-19%] | 963* | 1,343 | 1,264 | In this floating-point test, ADL-M is 19% slower. |

|

Native Double/FP64 Multi-Media (Mpix/s) | 556 [-25%] | 528* | 738 | 639 | Switching to FP64, ADL-M is 25% slower. |

|

Native Quad-Float/FP128 Multi-Media (Mpix/s) | 25.79 [-18%] | 23.21* | 31.48 | 30.58 | Using FP128 emulation, ADL-M is 18% slower. |

| With heavily vectorised SIMD workloads – ADL-M does manage to beat TGL-M in floating-point but not integer workloads. The LITTLE/E Atom cores do help a little here but cannot match the power of big/P Cores.

Here, it seems that the lack of AVX512 does show (RIP) – it is 18-38% slower than AMD competition; you can just see how the AVX512-IFMA52 on TGL-M just leaves everything into dust. Intel will need more cores if they want to beat AMD. Note:* using AVX512 instead of AVX2/FMA. Note:** using AVX512-IFMA52 to emulate 128-bit integer operations (int128). |

||||||

|

||||||

|

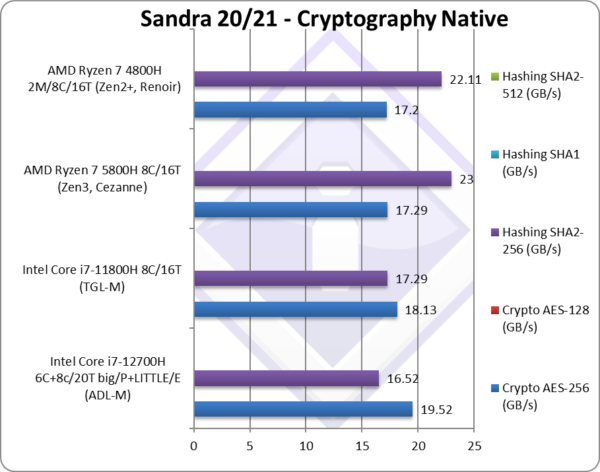

Crypto AES-256 (GB/s) | 19.52* [+13%] | 18.13* | 17.29 | 17.2 | ADL-M has 13% more bandwidth |

|

Crypto SHA2-256 (GB/s) | 16.52 [-28%] | 17.29*** | 23** | 22.11** | AMD rules here with SHA HWA. |

| The memory sub-system is crucial here, and these (streaming) tests show that using SMT threads is just not worth it; similarly using the LITTLE/E Atom cores is also perhaps also not worth it – with the memory bandwidth best used by the big/E Cores only. In effect out of 20T of ADL-M just 6T (one per big/P Core) are sufficient.

With compute hashing, SHA HWA does help – and AMD shows its power, the 8 big cores just leave ADL-M in the dust. Again, the loss of AVX512 is felt here – TGL-M has higher hashing bandwidth even with less thereads. Note:* using VAES (AVX512-VL or AVX2-VL) instead of AES HWA. Note:** using SHA HWA instead of multi-buffer AVX2. [note multi-buffer AVX2 is slower than SHA hardware-acceleration] Note:*** using AVX512 B/W [note multi-buffer AVX512 is faster than using SHA hardware-acceleration] |

||||||

|

||||||

|

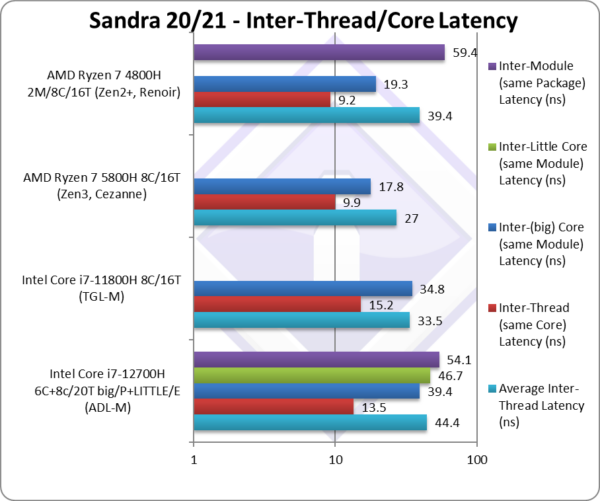

Average Inter-Thread Latency (ns) | 44.4 [+33%] | 33.5 | 27 | 39.4 | ADL-M average is 33% power |

|

Inter-Thread Latency (Same Core) Latency (ns) | 13.5 [-11%] | 15.2 | 9.9 | 9.2 | ADL-M latency is 11% lower |

|

Inter-Core Latency (big Core, same Module) Latency (ns) | 39.4 [+13%] | 34.8 | 17.8 | 19.3 | Big-Core latencies are 13% higher. |

|

Inter-Core (Little Core, same Module) Latency (ns) | 46.7 | – | – | – | Little-Core latencies are higher. |

|

Inter-Module Latency (Same Package) Latency (ns) | 54.1 | – | – | 59.4 | Inter-different core latencies are similar to CCX latencies. |

| ADL’s latencies (inter-thread, inter-big-core) are similar to TGL, but inter-LITTLE-core and big-2-LITTLE-core latencies are much higher, similar to AMD’s inter-module (CCX) latencies.

Thus, the average latency for all thread-combinations is 33% higher than TGL-M and, again, similar to AMD’s older multiple-CCX CPU. As with AMD, threads running on different type of cores should avoid sharing lots of data as this will incur much higher latencies. |

||||||

|

||||||

|

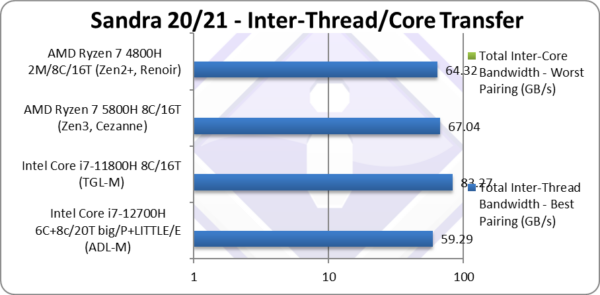

Total Inter-Thread Bandwidth – Best Pairing (GB/s) | 59.29 [-29%] | 83.27 | 67.04 | 64.32 | ADL-M bandwidth is 29% lower. |

| As with inter-thread latency, while ADL’s threads on big/P Cores share L1D (which comes useful for small data transfers), ADL’s threads on LITTLE/E Atom cores only have the shared L2 cache which has lower bandwidth.

Thus while ADL’s 8 LITTLE/E Atom cores do help ADL have 30% more bandwidth than RKL, naturally they cannot help it beat AMD’s Zen3 which 12 “big” cores. Due to the shared L3 caches, none of the Intel designs “crater” when the pairing is worst (aka across Module/CCX) with bandwidth falling to 1/3 (a third) while Zen3’s bandwidth falls to 1/16 (a sixteenth)! Again, sharing data between different core types is not as problematic as between modules in Zen3 where threads sharing data have to be carefully affinitized. Note:* using AVX512 512-bit wide transfers. |

||||||

|

||||||

|

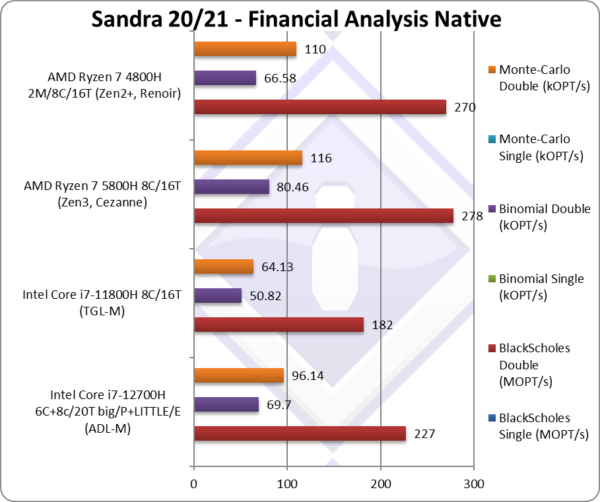

Black-Scholes double/FP64 (MOPT/s) | 227 [-18%] | 182 | 278 | 270 | Using FP64 ADL-M is 18% slower |

|

Binomial double/FP64 (kOPT/s) | 69.7 [-13%] | 50.82 | 80.46 | 66.58 | With FP64 code ADL-M is 13% slower. |

|

Monte-Carlo double/FP64 (kOPT/s) | 96.14 [-13%] | 64.13 | 116 | 110 | Switching to FP64 ADL-M is again 13% slower |

| With non-SIMD financial workloads, similar to what we’ve seen in legacy floating-point code (Whetstone), ADL-M does perform much better than TGL-M with its 8 big cores – but not enough to beat either of the two AMD competitors.

Perhaps such code is better offloaded to GP-GPUs these days, but still lots of financial software do not use GP-GPUs even today. |

||||||

|

||||||

|

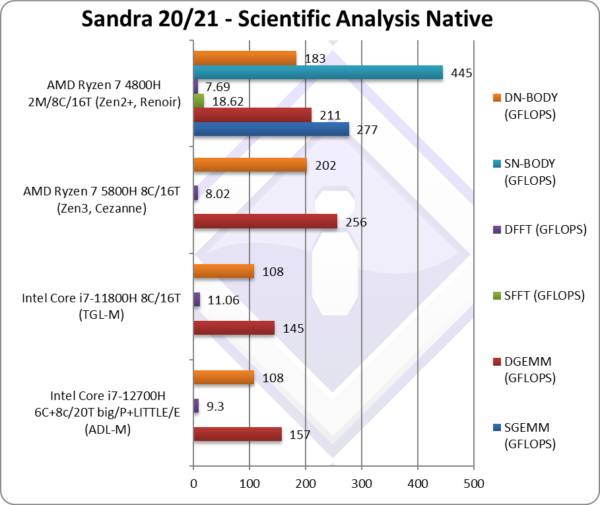

DGEMM (GFLOPS) double/FP64 | 157 [-39%] | 145* | 256 | 211 | With FP64 vectorised code, ADL-M is 39% slower |

|

DFFT (GFLOPS) double/FP64 | 9.3 [+16%] | 11* | 8.02 | 7.69 | With FP64 code, ADL-M is 16% faster! |

|

DN-BODY (GFLOPS) double/FP64 | 108 [1/2x] | 108* | 202 | 183 | With FP64 ADL-M is 1/2 AMD speed |

| With highly vectorised SIMD code (scientific workloads), after the various updates ADL-M starts to perform very well but the loss of AVX512 is still felt – going against TGL-M is not the same as going against RKL as on the desktop.

With just AVX2/FMA3 – going against AMD competition with more big cores (8 vs. 6) ADL-M cannot beat them and we’re in the strange situation that AMD reigns in SIMD benchmarks… * using AVX512 instead of AVX2/FMA3 |

||||||

|

||||||

|

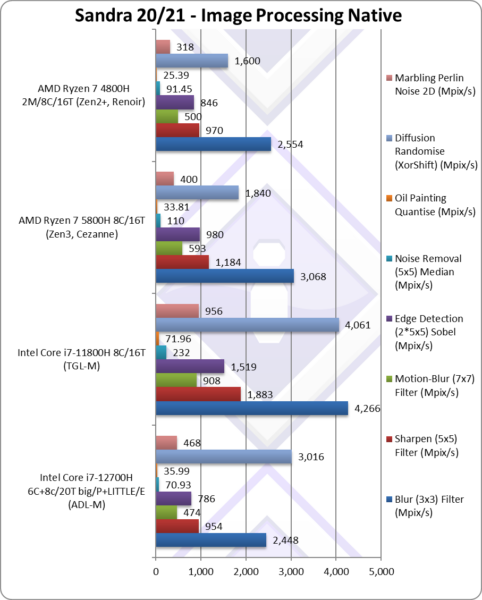

Blur (3×3) Filter (MPix/s) | 2,448 [-20%] | 4,266* | 3,068 | 2,554 | In this vectorised workload ADL-M is 20% slower. |

|

Sharpen (5×5) Filter (MPix/s) | 954 [-19%] | 1,883* | 1,184 | 970 | Same algorithm but more shared data ADL-M is 19% slower than AMD. |

|

Motion-Blur (7×7) Filter (MPix/s) | 474 [-20%] | 908* | 593 | 500 | Again same algorithm but even more data shared. |

|

Edge Detection (2*5×5) Sobel Filter (MPix/s) | 786 [-20%] | 1,519* | 980 | 846 | Different algorithm but still vectorised ADL-M is 20% slower. |

|

Noise Removal (5×5) Median Filter (MPix/s) | 70.93 [-36%] | 232* | 110 | 91.45 | Still vectorised code ADL-M is 36% slower. |

|

Oil Painting Quantise Filter (MPix/s) | 35.99 [+6%] | 71.96* | 33.81 | 25.39 | ADL is 6% faster than AMD |

|

Diffusion Randomise (XorShift) Filter (MPix/s) | 3,016 [+64%] | 4,061* | 1,840 | 1,600 | With integer workload, ADL-M is 65% faster than AMD. |

|

Marbling Perlin Noise 2D Filter (MPix/s) | 468 [+17%] | 956* | 400 | 318 | In this final test ADL-M is 17% faster than AMD. |

| This benchmarks *love* AVX512 – and here TGL-M has no problem dispatching ADL-M with its multiple cores – showing just how much (despite everything, e.g. power consumption) AVX512 has helped. It is a sad, sad, loss.

As a result ADL-M is about 20-35% slower than AMD competition in some tests, 6-17% faster in other tests – overall it does not manage to beat the latest Ryzen Mobile. * using AVX512 instead of AVX2/FMA |

||||||

|

||||||

|

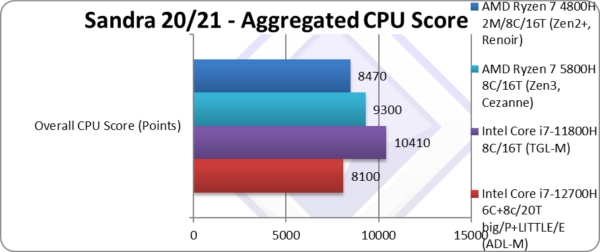

Aggregate Score (Points) | 8,100 [-13%] | 10,410* | 9,300 | 8,470 | Across all benchmarks, ADL-M is 13% slower than AMD. |

| Perhaps surprising despite early tests, ADL-M cannot beat either TGL-M (AVX512 enabled) nor either AMD Ryzen Mobile competition. Despite the increased number of cores (big+LITTLE) – it just is not enough! Again, losing AVX512 is a sad, sad, loss.

Note*: using AVX512 not AVX2/FMA3. |

||||||

|

||||||

|

Price/RRP (USD) | $457 [+16%] | $395 | $349 | $349 | Price has gone up a bit by 16%. |

|

||||||

|

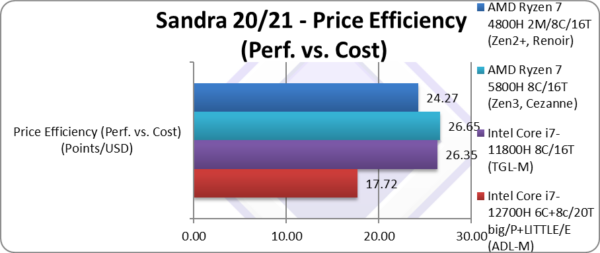

Price Efficiency (Perf. vs. Cost) (Points/USD) | 17.72 [-33%] | 26.35 | 26.65 | 24.27 | ADL-M is 33% less value than AMD. |

| With the lower overall performance and slightly higher price, ADL-M ends up 33% less value-per-buck than both TGL-M as well as AMD competition. What is interesting is that TGL-M was doing quite well against AMD while we see ADL-M being the odd-one out.

Naturally, these are just SoC tray prices, not the overall laptop price which depends on other components, branding, etc. – but in general AMD laptops do tend to be cheaper which is just adding to Intel’s woes. |

||||||

|

||||||

|
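The price-efficiency figures can be reproduced by dividing the aggregate score (Points) by the listed price (USD). A minimal sketch using the scores and prices quoted in this review; the function name and the dictionary layout are illustrative:

```python
def price_efficiency(points: float, price_usd: float) -> float:
    """Aggregate score per dollar (Points/USD), rounded to 2 decimals."""
    return round(points / price_usd, 2)


# (aggregate score in Points, price/RRP in USD) from the tables in this review
cpus = {
    "Core i7 12700H (ADL-M)": (8100, 457),
    "Core i7 11800H (TGL-M)": (10410, 395),
    "Ryzen 7 5800H": (9300, 349),
    "Ryzen 7 4800H": (8470, 349),
}

for name, (points, price) in cpus.items():
    print(f"{name}: {price_efficiency(points, price)} Points/USD")
```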

Power/TDP (W) | 45-115 [+35%] | 45-86 | 45-65 | 45-65 | Turbo has really gone up by 35% |

|

||||||

|

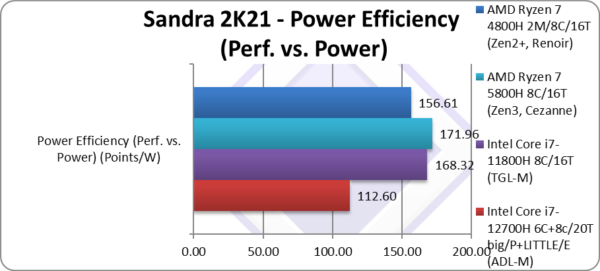

Power Efficiency (Perf. vs. Power) (W) | 112 [-35%] | 168 | 171 | 156 | ADL-M ends up 35% less efficient. |

| If we go by Base & Turbo power, ADL-M ends up 35% less power-efficient than AMD and also TGL-M; while the base/TDP remains the same for all CPUs, turbo has really gone up – that combined with the lower overall score ends up with ADL-M becoming less efficient.

Thus – if you were to always use it at full utilisation/power, TGL-M (and AMD) seems more efficient; but for idle periods (e.g. waiting for I/O for storage, networking, etc.) ADL-M should end up more efficient. It all depends on the workloads. |

||||||

SiSoftware Official Ranker Scores

Final Thoughts / Conclusions

Summary: Good efficiency, sad AVX512 loss, best to wait: 7/10

ADL-M has been designed for higher efficiency, but here on the mobile platform it goes against TGL/ICL-M rather than the power-hungry RKL as on desktop. It also goes against low-power AMD Ryzen competition (Zen2+, Zen3) that is much, much faster than the original Ryzens.

TGL-M and ICL-M had a trump card: AVX512; yes, on high performance mobile/laptop systems – it ripped through tests despite higher power consumption and core-for-core (8C vs. 8C) it gave Intel the win. ADL-M does not have this advantage, plus has fewer big cores (6C vs. 8C) and the LITTLE cores cannot make up the difference.

With updated Sandra benchmarks (dynamic workload allocator) and firmware/BIOS/OS (Windows 11), ADL-M is performing a lot better than what we initially saw. It is likely its performance will improve in the future as both its firmware/BIOS and OS/applications are optimised further.

- In non-SIMD (legacy) tests, ADL-M performs well against both TGL-M and the AMD competition; you can see just why Intel prefers those kinds of benchmarks. For such code, ADL-M does perform better than AMD Ryzen.

- In heavy-compute SIMD tests – that use AVX512 for TGL/ICL-M, ADL-M is left wanting and falls behind; with the same instruction set as AMD Ryzens it cannot beat them either.

- Streaming (bandwidth-bound) tests do benefit from DDR5 (or LP-DDR5) and high-performance laptops should have it; however, due to high cost – most current laptops will launch with DDR4 (or LP-DDR4) which negates any improvement.

- Transfer latencies between different core types (aka between big/P and LITTLE/E cores) are higher than between cores of the same type (aka between P-cores); threads sharing memory should therefore stay on the same type of cores.

ADL-M does bring some more new technologies: PCIe 5.0 for dedicated GP-GPUs (which high-performance laptops are likely to use), NVMe SSDs, etc. – as well as Thunderbolt 4 for high-speed external devices like eGPU, NVMe, 10Gbe+ networking, etc. If you need faster connections and have the money for such high-speed devices – then you will be happy.

The LITTLE cores in ADL-M do come in useful for low-compute tasks, I/O (storage, networking, video decoded on the GPU, etc.), various interrupt handling – when the big cores can be parked and not woken up. This should save lots of power and thus greatly increase battery life. They should also come in useful to handle such I/O and allow the big cores to handle heavy compute tasks uninterrupted.

For this market (high-performance laptops), perhaps more big cores and fewer LITTLE cores should have been deployed – e.g. 8C + 4c rather than 6C + 8c. Apple’s M1 (desktop version) for example includes far more big cores and very few LITTLE cores.

In summary – despite ADL-M’s hybrid architecture being designed for mobile platforms such as these, the loss of performance against both the previous TGL-M and the AMD competition makes it a somewhat difficult proposition at this time. The new technologies (DDR5, PCIe 5, TB4, etc.) are too new, too few and too expensive right now. If you have money to burn…

