What are “AlderLake”, “RaptorLake”?

“AlderLake” (ADL) is the current (12th gen) Core architecture – soon to be replaced by “RaptorLake” (RPL, 13th gen). It replaced the short-lived “RocketLake” (RKL “real” 10th gen aka ICL), which in turn finally replaced the many, many “Skylake” (SKL) derivative architectures (6th-10th “fake” gen). It is the first mainstream “hybrid” arch, i.e. it combines big/P(erformance) “Core” cores with LITTLE/E(fficient) “Atom” cores in a single package. While such SoC designs are quite common in the ARM world, they are new for x86, so operating systems (Windows) and applications had to be updated.

RPL is rumoured to just double the LITTLE/E Atom core counts (unchanged “Gracemont”) while including slightly updated big/P Cores (“Raptor Cove” vs. “Golden Cove”) that are rumoured to clock faster (at least in Turbo). Here we ask whether this is a risky step for Intel considering what the competition (AMD) has in the pipeline.

We also wonder what the next arch (14th gen, “MeteorLake” MTL) might need to bring to match or even beat the competition…

Why re-test it now?

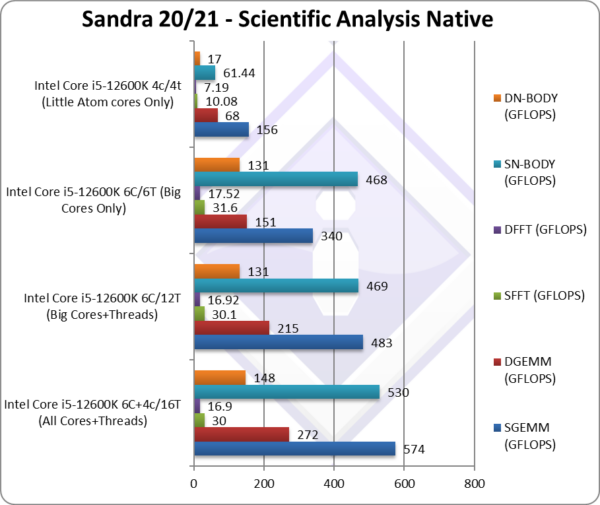

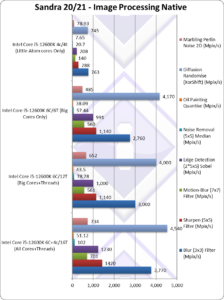

We have added additional “parallelism” testing methods (aka native scheduling, bypassing Windows / Intel’s Thread Director) that allow testing of big/P cores only or LITTLE/E cores only (with multi-threading or not), or big-2-LITTLE core testing – without the need to reboot and disable cores / SMT.

Scheduling Changes

- “All Threads (MT/MC)” (thus all cores + all threads) – e.g. 6C+4c / 16T (2×6 + 4)

- “All Cores (MC aka big+LITTLE) Only” (both core types, no threads) – thus 6C+4c / 10T (6+4)

- “All Threads big/P Cores Only” (only “Core” cores + their threads) – thus 6C / 12T (2×6)

- “big/P Cores Only” (only “Core” cores) – thus 6C / 6T

- “LITTLE/E Cores Only” (only “Atom” cores) – thus 4c / 4t

- “Single Thread big/P Core Only” (thus single “Core” core) – thus 1T

- “Single Thread LITTLE/E Core Only” (thus single “Atom” core) – thus 1t
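The scheduling modes above can be sketched as a mapping from mode to the logical CPUs a test would be pinned to. This is only an illustration, not Sandra's implementation: the `HybridCpu` type, the mode names and the enumeration order (big/P threads first with SMT siblings adjacent, LITTLE/E cores after) are all assumptions made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class HybridCpu:
    p_cores: int                 # big/P "Core" cores
    e_cores: int                 # LITTLE/E "Atom" cores (no SMT)
    p_threads_per_core: int = 2  # SMT threads per big/P core


def logical_cpus(cpu: HybridCpu, mode: str) -> list[int]:
    """Return the logical CPU indices used by a scheduling mode.

    Assumed (hypothetical) layout: P-core threads come first, with the SMT
    siblings of core k at indices 2k and 2k+1, then one index per E core.
    """
    smt = cpu.p_threads_per_core
    p_all = list(range(cpu.p_cores * smt))
    p_first = p_all[::smt]  # first thread of each P core only
    e_base = cpu.p_cores * smt
    e_all = list(range(e_base, e_base + cpu.e_cores))
    modes = {
        "all_threads": p_all + e_all,
        "all_cores": p_first + e_all,
        "p_threads": p_all,
        "p_cores": p_first,
        "e_cores": e_all,
        "single_p": p_first[:1],
        "single_e": e_all[:1],
    }
    return modes[mode]


def affinity_mask(cpus: list[int]) -> int:
    """Fold logical CPU indices into a bit mask (bit i set = CPU i allowed)."""
    mask = 0
    for index in cpus:
        mask |= 1 << index
    return mask


i5_12600k = HybridCpu(p_cores=6, e_cores=4)
for mode in ("all_threads", "all_cores", "p_threads", "p_cores", "e_cores"):
    cpus = logical_cpus(i5_12600k, mode)
    print(f"{mode:12s} {len(cpus):2d}T mask={affinity_mask(cpus):#06x}")
```

A mask of this kind is what an operating system's thread-affinity call would typically take; the real enumeration order should be queried from the OS rather than assumed.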

We have also added a hybrid “compute contribution” view that apportions the benchmark score to the big/P and LITTLE/E clusters/cores. It shows just how much each core type contributes to the overall score, as well as the big-2-LITTLE compute power ratio. We can thus see how shared resources (current, power, thermal) are distributed for best overall performance.

Note that this is measured with all cores/threads used together rather than tested separately. It is thus a completely different view from “parallelism”, where clusters/cores are tested individually and all resources (current, power, thermal) are available to the cluster/cores under test and (hopefully) not shared with the unused/parked cluster/cores.

Benchmarking Changes

- Dynamic/Asymmetric workload allocator – based on each thread’s compute power

- Note some tests/algorithms are not well-suited for this (here P threads will finish and wait for E threads – thus effectively having only E threads). Different ways to test algorithm(s) will be needed.

- Dynamic/Asymmetric buffer sizes – based on each thread’s L1D caches

- Memory/Cache buffer testing using different block/buffer sizes for P/E threads

- Algorithms (e.g. GEMM) using different block sizes for P/E threads

- Best performance core/thread default selection – based on test type

- Some tests/algorithms run best just using cores only (SMT threads would just add overhead)

- Some tests/algorithms (streaming) run best just using big/P cores only (E cores just too slow and waste memory bandwidth)

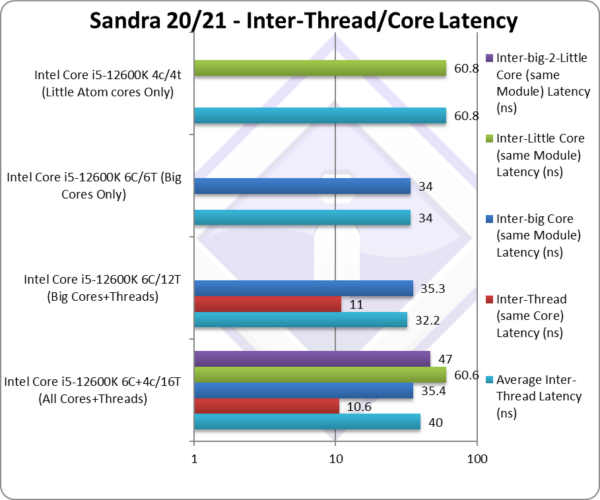

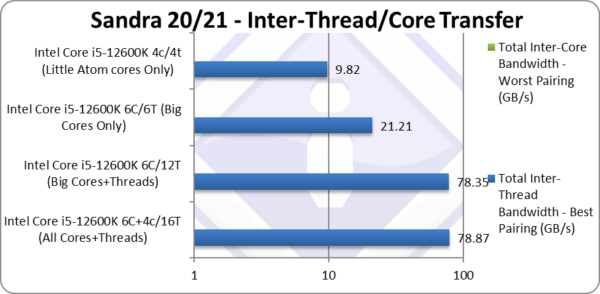

- Some tests/algorithms sharing data run best on same type of cores only (either big/P or LITTLE/E) (sharing between different types of cores incurs higher latencies and lower bandwidth)

- Reporting the Performance Contribution & Ratio of each thread

- Thus the big/P and LITTLE/E cores contribution for each algorithm can be presented. In effect, this allows better optimisation of algorithms tested, e.g. detecting when either big/P or LITTLE/E cores are not efficiently used (e.g. overloaded)
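To illustrate the dynamic/asymmetric workload allocator idea, here is a minimal sketch that splits a fixed number of work items in proportion to each thread's compute power. The function names and the relative power values (3.0 for a P thread, 1.0 for an E thread) are invented for the example and are not taken from Sandra.

```python
def split_work(total_items, thread_power):
    """Split total_items across threads in proportion to thread_power.

    Uses largest-remainder rounding so the shares always sum to total_items.
    """
    if total_items < 0 or not thread_power or min(thread_power) <= 0:
        raise ValueError("need non-negative work and positive thread powers")
    total_power = sum(thread_power)
    exact = [total_items * p / total_power for p in thread_power]
    shares = [int(x) for x in exact]  # round down first
    leftover = total_items - sum(shares)
    # Hand the remaining items to the threads with the largest fractional part.
    by_fraction = sorted(
        range(len(exact)), key=lambda i: exact[i] - shares[i], reverse=True
    )
    for i in by_fraction[:leftover]:
        shares[i] += 1
    return shares


def finish_times(shares, thread_power):
    """Relative time each thread needs for its share (items / power)."""
    return [n / p for n, p in zip(shares, thread_power)]


# Hypothetical relative powers: 6 P threads at 3.0, 4 E threads at 1.0.
power = [3.0] * 6 + [1.0] * 4
even = [1000 // len(power)] * len(power)  # naive equal split
weighted = split_work(1000, power)

print("even split finishes at    ", max(finish_times(even, power)))
print("weighted split finishes at", max(finish_times(weighted, power)))
```

With an equal split the whole run is gated by the slowest (E) threads while the P threads sit idle, which is the situation the note in the list describes; the weighted split lets all threads finish at roughly the same time.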

Given the above, some developers can be forgiven for restricting their software to big/Performance threads only and ignoring the LITTLE/Efficient threads altogether, at least for compute-heavy algorithms.

For this reason we recommend using the very latest version of Sandra and keeping up with updated versions, which are likely to fix bugs and improve performance and stability.

Cluster Performance Contribution (per Cluster type)

- Multi-Media Integer Native : 80% big/Cluster – 20% LITTLE/cluster

- Multi-Media Long-int Native : 83% big/Cluster – 17% LITTLE/cluster

- Multi-Media Quad-int Native : 85% big/Cluster – 15% LITTLE/cluster

- Multi-Media Single-float Native : 85% big/Cluster – 15% LITTLE/cluster

- Multi-Media Double-float Native : 85% big/Cluster – 15% LITTLE/cluster

- Multi-Media Quad-float Native : 86% big/Cluster – 14% LITTLE/cluster

Note: Here we sum the compute performance contribution for all cores in the type of cluster. If SMT is enabled, all threads will be summed.

Note2: This feature also works on Arm64 big.LITTLE / DynamIQ SoCs; it is not tied to x86 Intel hybrid systems.
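The summing described in the note can be sketched as follows. The per-thread scores are invented purely to show the arithmetic and are not measurements from this article; `cluster_contribution` is a hypothetical helper name.

```python
from collections import defaultdict


def cluster_contribution(thread_scores):
    """Sum per-thread scores by cluster type and return each cluster's share in %."""
    totals = defaultdict(float)
    for cluster, score in thread_scores:
        totals[cluster] += score
    overall = sum(totals.values())
    return {cluster: 100.0 * total / overall for cluster, total in totals.items()}


# Invented per-thread scores for a 6C+4c part with SMT enabled:
# 12 big/P threads at 10.0 each and 4 LITTLE/E threads at 7.5 each.
scores = [("big", 10.0)] * 12 + [("LITTLE", 7.5)] * 4
shares = cluster_contribution(scores)
print({name: f"{pct:.0f}%" for name, pct in shares.items()})
```

Because all threads of a cluster are summed, SMT threads simply add to their cluster's total.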

Cluster Performance Ratio (per core type)

- Multi-Media Integer Native : 3x big/Core – 1x LITTLE/core

- Multi-Media Long-int Native : 3.1x big/Core – 1x LITTLE/core

- Multi-Media Quad-int Native : 3.2x big/Core – 1x LITTLE/core

- Multi-Media Single-float Native : 3.3x big/Core – 1x LITTLE/core

- Multi-Media Double-float Native : 3.3x big/Core – 1x LITTLE/core

- Multi-Media Quad-float Native : 3.4x big/Core – 1x LITTLE/core

Note: Here we divide the cluster performance contribution by the number of cores in each cluster, and then work out the big/LITTLE ratio. Whether SMT is enabled or not, we only count the number of cores per cluster, not threads.
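As a small sketch of that calculation: divide each cluster's total by its core count, then take the quotient. The cluster totals below are invented for illustration only, and `per_core_ratio` is a hypothetical helper name.

```python
def per_core_ratio(big_total, big_cores, little_total, little_cores):
    """Return how many times faster one big core is than one LITTLE core.

    Core counts are physical cores per cluster; SMT threads are not counted.
    """
    big_per_core = big_total / big_cores
    little_per_core = little_total / little_cores
    return big_per_core / little_per_core


# Invented cluster totals: 6 big cores scoring 180.0, 4 LITTLE cores scoring 40.0.
ratio = per_core_ratio(180.0, 6, 40.0, 4)
print(f"{ratio:.1f}x big/Core - 1x LITTLE/core")
```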

CPU (Core) Performance Benchmarking

In this article we test CPU core performance; please see our other articles on:

- CPU

  - What Intel needs is SVE-like variable width AVX (AVX-V?) to solve hybrid

  - Intel 12th Gen Core AlderLake Mobile (i7-12700H) Review & Benchmarks – big/LITTLE Performance

  - big/Performance Core Performance Analysis – Intel 12th Gen Core AlderLake (i9-12900K)

  - Intel 11th Gen Core RocketLake (i7-11700K) Review & Benchmarks – CPU AVX512 Performance

- Cache & Memory

- GP-GPU

Native Performance

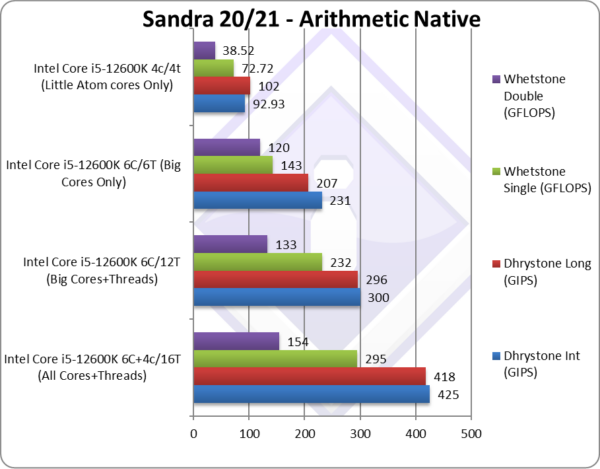

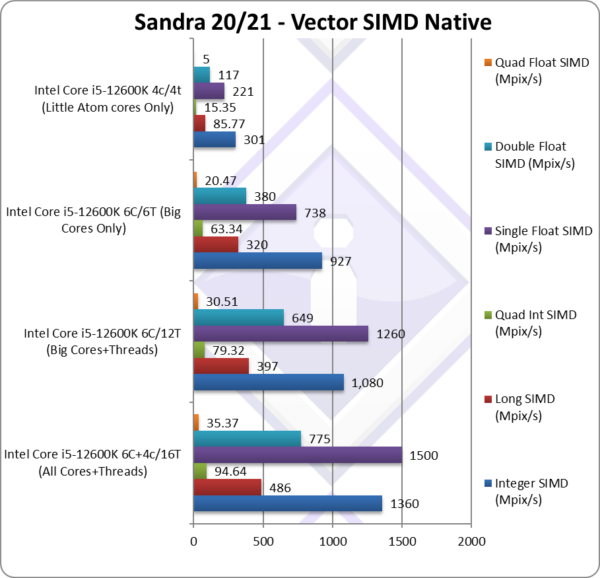

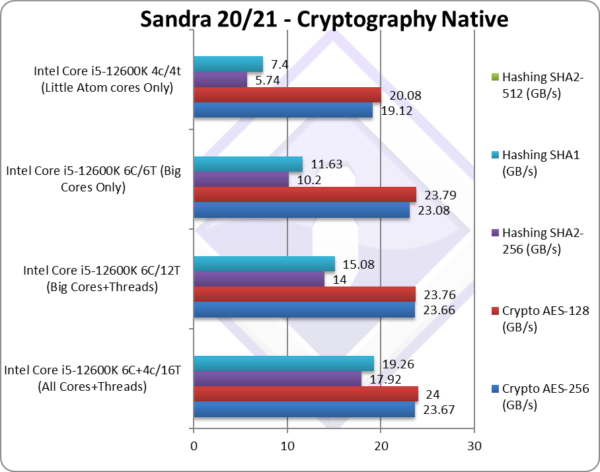

We are testing native arithmetic, SIMD and cryptography performance using the highest performing instruction sets. “AlderLake” (ADL) does not support AVX512 – but it does support 256-bit versions of some original AVX512 extensions.

Results Interpretation: Higher values (GOPS, MB/s, etc.) mean better performance.

Environment: Windows 11 x64, latest Intel drivers. 2MB “large pages” were enabled and in use. Turbo / Boost was enabled on all configurations.

SiSoftware Official Ranker Scores

- 12th Gen Intel Core i9-12900K (8C + 8c / 24T)

- 12th Gen Intel Core i9-12900KF (8C + 8c / 24T)

- 12th Gen Intel Core i7-12700K (8C + 4c / 20T)

- 12th Gen Intel Core i5-12600K (6C + 4c / 16T)

Final Thoughts / Conclusions

Summary: RaptorLake takes a big gamble with more LITTLE Atom cores

RaptorLake (RPL) will double LITTLE/E Atom cores vs. AlderLake (ADL) – but will not add additional big/P Cores: will this strategy win?

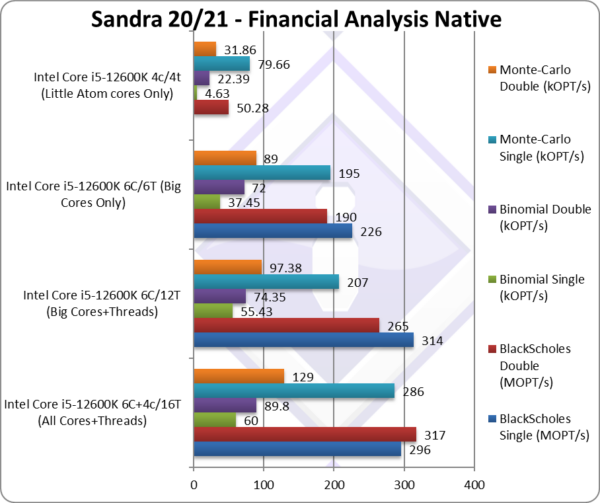

It is not really a surprise to find that the LITTLE/E Atom cores perform well on “legacy” non-SIMD code, where they generally deliver about 1/2 the performance of a big/P Core. As about 4 of them fit in the area of one big/P Core, you get roughly 2x the performance per area, so more LITTLE/E Atom cores should work out better in RPL.

With SIMD-heavy compute workloads (AVX2/FMA) things are more complicated, as the LITTLE/E Atom cores deliver only 1/3 to 1/4 the performance of a big/P Core. Considering the overhead of additional threads, we would generally be better off with more big/P Cores (power notwithstanding).

An additional issue is that AVX512 has been disabled, just at the time when the competition (AMD) is bringing AVX512-enabled CPUs (Zen4 – Series 7000)! We have previously seen that RKL/ADL Cores perform 30-40% better with AVX512 code, which would make a big/P Core over 5x faster than a LITTLE/E Atom core. There is no question here that we would prefer more big/P Cores rather than more LITTLE/E Atom cores.
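The performance-per-area argument above can be checked with a few lines of arithmetic. This sketch takes the article's figures as inputs (about 4 LITTLE/E cores per big/P core area, big/P being 2x, 3x to 4x, or with AVX512 a further 30% faster) and ignores threading overhead and power; the function name is hypothetical.

```python
def e_cluster_vs_p_core(p_to_e_ratio, e_cores_per_p_area=4):
    """Throughput of the E cores fitting in one P-core area, relative to one P core."""
    return e_cores_per_p_area / p_to_e_ratio


cases = {
    "non-SIMD (P = 2x E)": 2.0,
    "AVX2/FMA (P = 3x E)": 3.0,
    "AVX2/FMA (P = 4x E)": 4.0,
    "AVX512 on P (4x * 1.3)": 4.0 * 1.3,
}
for label, ratio in cases.items():
    print(f"{label:24s} -> {e_cluster_vs_p_core(ratio):.2f}x per P-core area")
```

A result above 1x favours spending the area on LITTLE/E cores, a result at or below 1x favours another big/P core.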

We should also mention that the big/P Cores in RPL are updated (“Raptor Cove” vs. “Golden Cove”) and are rumoured to run much faster (Turbo clock), which should bring additional performance vs. the big/P Cores in ADL. Meanwhile the LITTLE/E Atom cores (“Gracemont”) have not been updated at all and seem to run at their frequency limit, and are thus unlikely to be any faster.

It is likely that Intel is aware of this, that there was nothing else they could do in the short term (process-wise, power-wise, etc.) and that adding more big/P Cores would actually have been worse. In non-SIMD (especially non-AVX512) workloads (Cinebench?) more Atom cores are likely to perform better and consume less power as well.

Thus we will have to wait for the next arch (“MeteorLake” MTL) to perhaps increase the big/P Core counts, and perhaps enable AVX512 code to run by updating the LITTLE/E Atom cores to support it (microcode emulation? variable-width AVX? forced scheduling to big/P Cores?). In any case, MTL may have to bring significant performance upgrades if it is to beat the AVX512-enabled competition (AMD)…

Long Summary: RPL is a risky gamble that just doubles the LITTLE/E Atom core counts while not increasing the big/P Core counts at all. While this will improve non-SIMD code performance, it is likely to fail against AVX512-enabled competition. For some workloads, especially low-compute ones, RPL might be a decent improvement over ADL. Still, maybe it’s best to wait…

And please, don’t forget small ISVs like ourselves in these very challenging times. Please buy a copy of Sandra if you find our software useful. Your custom means everything to us!